Cerebellum: Design of a Programmable Smart-Peripheral for the Ariane Core


Introduction

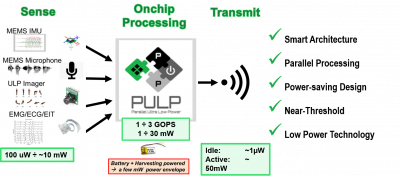

As smart sensors become part of everyday life, data-driven applications are gaining more and more relevance in the consumer electronics market. Smartwatches for fitness tracking, cameras for security and multimedia entertainment, and biomedical wearables such as ECG and EEG devices for health care are just a few examples. Typically, the data streams coming from the sensors are processed on servers in the cloud. The data must be sensed by a physical device driven by a microcontroller, possibly pre-processed, and eventually sent wirelessly to the network (for example using Bluetooth or low-power WiFi radios), where the packets travel through routers and switches until they reach the server in the cloud. The server processes them and possibly gives feedback to the users, or to the microcontroller in closed-loop applications. As these smart sensors are usually battery-powered, they are designed to be energy efficient. Most of the power is spent transmitting the data over the radio to the server; minimizing the bandwidth transmitted to the servers therefore not only reduces traffic and congestion, but also extends the battery life of the smart sensors.

Classification and data compression are data processing algorithms that can be used to address this challenge. For example, one can imagine a face recognition application built as follows: an ultra-low-power camera continuously acquires images, and the microcontroller compresses each image and sends fewer bytes to the server, which simply decompresses the data and runs a convolutional neural network to classify the acquired face. A smarter variant of the same application is the following: the microcontroller performs a pre-classification on the image to recognize whether the picture contains a face or not. This requires only a small part of the algorithm compared with the whole face recognition process. Only if the picture contains a face is the image sent to the cloud. This saves on-node power, because the radio is used less often, and server resources, because the servers now execute face recognition algorithms only on certain events.
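The pre-classification idea can be illustrated with a minimal Python sketch. Everything in it is a hypothetical stand-in: `frames_to_upload`, `toy_detector` and the 64-byte frames are invented for illustration, and the "detector" is a trivial placeholder rather than a real face detection algorithm. It only shows how gating the radio on a cheap on-node check reduces the number of bytes sent.

```python
from typing import Callable, Iterable, List

Frame = bytes  # stand-in for one raw camera image


def frames_to_upload(frames: Iterable[Frame],
                     looks_like_face: Callable[[Frame], bool]) -> List[Frame]:
    """Keep only the frames that the cheap on-node check flags as a face."""
    return [frame for frame in frames if looks_like_face(frame)]


def toy_detector(frame: Frame) -> bool:
    # Hypothetical placeholder: a real node would run a small classifier.
    # Here a frame counts as a "face" when its first byte is non-zero.
    return len(frame) > 0 and frame[0] != 0


# Four made-up 64-byte frames; two of them pass the toy check.
frames = [bytes([0]) * 64, bytes([1]) * 64, bytes([0]) * 64, bytes([7]) * 64]
uploaded = frames_to_upload(frames, toy_detector)

bytes_without_gating = sum(len(f) for f in frames)
bytes_with_gating = sum(len(f) for f in uploaded)
print(bytes_without_gating, bytes_with_gating)
```

With this made-up input, half of the frames never reach the radio. The actual saving depends entirely on how often the real detector fires.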

The event-driven execution paradigm can also be applied at a microscopic level, by shutting down the parts of the microcontroller that are not used during pre-processing and turning them on only when an event is detected.

Project description

Do you want to contribute to the open-source community?

In this thesis we propose to build the next OPEN-SOURCE RISC-V programmable smart-peripheral system for the Ariane core. The event-based microcontroller, based on our open-source IPs such as the RISC-V RV64GC Ariane core and the PULPissimo platform, will then be released as open source together with its older siblings PULP, PULPissimo, PULPino, Ariane, BigPULP, etc.

https://github.com/pulp-platform/ariane

https://github.com/pulp-platform/pulpissimo

As many applications are built using high-level languages such as Python or Lua, a Linux-capable microcontroller such as the Raspberry Pi or the Ariane core makes software portability and reuse easy.

However, these microcontrollers usually run at high speed (above 1 GHz) and consume a non-negligible amount of power for constrained applications. We therefore propose to connect the PULPissimo microcontroller, based on our RISC-V RV32IMFC RI5CY core, to the Ariane coreplex for the heavy-processing and acquisition part of the application.

PULPissimo has an autonomous and efficient I/O subsystem and a rich set of peripherals, and it is optimized for energy efficiency. The RI5CY core has been extended with custom instructions to achieve high energy efficiency when running digital signal processing functions. PULPissimo can be attached to the Ariane subsystem via an AXI plug and mapped into the Linux physical address space as a normal memory-mapped peripheral.

The student tasks can be summarized as follows:

- Physically connect the PULPissimo microcontroller to the Ariane coreplex and map the whole system to the FPGA. Note that Ariane has already been mapped to the FPGA and is able to boot Linux, so the student can start from the existing work and extend it with PULPissimo.

- Write the Linux kernel driver that maps a physical region of memory to control PULPissimo, which will be seen by the OS as a smart peripheral programmed by writing special words to special addresses.

- Write or adapt common benchmarks like face detection on the whole system under the Linux environment.
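The "special words on special addresses" idea from the second task can be modelled in a few lines of Python. This is only a sketch under stated assumptions: the register offsets, the command code and the status value are hypothetical and do not come from the PULPissimo or Ariane documentation, and a plain `bytearray` stands in for the physical memory region that the real kernel driver would map.

```python
import struct

# Hypothetical register map: none of these offsets or values come from the
# PULPissimo or Ariane documentation.
REG_COMMAND = 0x00
REG_STATUS = 0x04
REG_RESULT = 0x08
CMD_ACQUIRE_AND_DETECT = 0x1
STATUS_DONE = 0x1


class RegisterWindow:
    """32-bit little-endian register access over any writable buffer."""

    def __init__(self, buffer):
        self._view = memoryview(buffer)

    def write32(self, offset: int, value: int) -> None:
        struct.pack_into("<I", self._view, offset, value)

    def read32(self, offset: int) -> int:
        return struct.unpack_from("<I", self._view, offset)[0]


# A bytearray stands in for the physical region the driver would map.
fake_region = bytearray(16)
regs = RegisterWindow(fake_region)

# Host side: start a job by writing a command word.
regs.write32(REG_COMMAND, CMD_ACQUIRE_AND_DETECT)

# Emulate the peripheral finishing the job and reporting "face found".
regs.write32(REG_RESULT, 1)
regs.write32(REG_STATUS, STATUS_DONE)

# Host side: read back status and result.
face_found = (regs.read32(REG_STATUS) == STATUS_DONE
              and regs.read32(REG_RESULT) == 1)
print(face_found)
```

Keeping the accessor independent of the backing buffer means the same read and write helpers could, in principle, be pointed at a real mapped region later. How that region is exposed to software is part of the driver design and is not specified here.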

The third task will be implemented by exploiting the event-based paradigm as follows. The application running on Linux on the Ariane core will call a function like “acquire_and_detect”, which uses the PULPissimo smart peripheral. The RI5CY core will then use the I/O subsystem to acquire the picture and run a face detection algorithm on it. If the image is indeed a face, the function will return true, otherwise false. Meanwhile, Ariane waits in sleep mode (saving power) for PULPissimo to complete the task. If the acquired image does represent a face, Ariane will then run the whole face recognition task using advanced libraries like TensorFlow Lite [1].

[1] https://www.tensorflow.org/lite/
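The control flow described above can be sketched as a small software model. It is an illustration only: `SmartPeripheralModel`, `host_application`, the toy detector and the placeholder recognizer are hypothetical names, a Python thread stands in for the RI5CY core, and a `threading.Event` stands in for whatever wake-up mechanism the real hardware and driver will provide.

```python
import threading


class SmartPeripheralModel:
    """Toy software model of the smart peripheral (all names hypothetical)."""

    def __init__(self, detector):
        self._detector = detector
        self._done = threading.Event()
        self._is_face = False

    def _run(self, image):
        # Stands in for RI5CY acquiring the picture and running detection.
        self._is_face = self._detector(image)
        self._done.set()  # stands in for the wake-up event sent to the host

    def acquire_and_detect(self, image) -> bool:
        self._done.clear()
        worker = threading.Thread(target=self._run, args=(image,))
        worker.start()
        self._done.wait()  # the host blocks here instead of polling
        worker.join()
        return self._is_face


def host_application(peripheral, image, recognize):
    """Run the expensive recognition step only when a face was detected."""
    if peripheral.acquire_and_detect(image):
        return recognize(image)
    return None


# Placeholder detector and recognizer, invented for this example.
peripheral = SmartPeripheralModel(detector=lambda image: sum(image) > 10)
recognize = lambda image: "recognized"

result_face = host_application(peripheral, [9, 9], recognize)
result_no_face = host_application(peripheral, [0, 0], recognize)
print(result_face, result_no_face)
```

The point of the structure is that the host never touches the image unless the peripheral reports a detection, which mirrors the split between detection on PULPissimo and recognition on Ariane.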

Required Skills

To work on this project, you will need:

- to have worked in the past with at least one RTL language (SystemVerilog, Verilog or VHDL); having followed the VLSI1 / VLSI2 courses is recommended

- to have prior knowledge of hardware design, computer architecture and FPGA physical design

Other skills that you might find useful include:

- familiarity with a scripting language for numerical simulation (Python, Matlab, Lua, …)

- to be strongly motivated for a difficult but super-cool project

If you want to work on this project but think that you do not match some of the required skills, we can give you some preliminary exercises to help you fill the gap.

Status: Available

- Supervision: Pasquale Davide Schiavone