Single-Bit-Synapse Spiking Neural System-on-Chip

Introduction

Current interest in brain-inspired and neuromorphic computer architectures is enormous, owing to their conceptual attractiveness and their potential applications in sensor networks, robotics, low-power vision and other fields. One interesting approach is that of the IBM TrueNorth architecture [Merolla14], a homogeneous fabric of 1 million digital spiking neurons that can be used for visual classification at a very low power budget (65 mW). In collaboration with KTH Stockholm, we at IIS have developed a spiking neuron similar to the one used in TrueNorth. The objective of this project is to customize this neuron and use it to create a scalable spiking neuron for FPGA and ASIC targets. Whereas that earlier work marked a starting point for the development of a novel spiking-based architecture, in this project we would like to develop a concrete proof-of-concept low-power System-on-Chip onto which (small) practical applications such as Spiking Convolutional Neural Networks can be mapped.

Project description

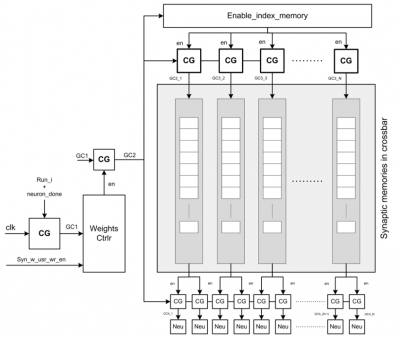

Our current neuron core is based on a simple neuron model (leaky integrate-and-fire, or LIF) that is widely considered the best tradeoff point for digital implementation, combining simplicity and computing power. A neuron core essentially consists of a parametric number of LIF neurons and a synaptic crossbar (i.e., a memory array) that defines their connectivity, which is typically high in order to model neural structures with very high fan-in.
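As a rough behavioral illustration of the structure described above, the following Python sketch models a neuron core as a crossbar of integer weights feeding a set of LIF neurons. It is not the project's RTL: the class name `NeuronCore`, the parameters `threshold` and `leak`, the order of integration and leak, and the reset-to-zero behavior are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class NeuronCore:
    """Toy LIF neuron core: a synaptic crossbar plus one potential per neuron.

    All names and default values here are illustrative assumptions.
    """
    weights: list                 # crossbar: weights[axon][neuron], signed integers
    threshold: int = 4            # firing threshold (assumed value)
    leak: int = 1                 # subtracted from each potential per step (assumed)
    potentials: list = field(default_factory=list)

    def __post_init__(self):
        # Start every neuron at rest if no initial state was given.
        if not self.potentials:
            self.potentials = [0] * len(self.weights[0])

    def step(self, input_spikes):
        """Advance one time step; input_spikes[axon] is 0 or 1.

        Returns one output spike (0 or 1) per neuron.
        """
        output = []
        for n in range(len(self.potentials)):
            # Integrate: add the weights of all axons that spiked.
            current = sum(row[n] for row, s in zip(self.weights, input_spikes) if s)
            v = self.potentials[n] + current
            # Leak: decay by a constant, clamped at zero (assumed leak model).
            v = max(v - self.leak, 0)
            # Fire and reset to zero once the threshold is reached.
            if v >= self.threshold:
                output.append(1)
                v = 0
            else:
                output.append(0)
            self.potentials[n] = v
        return output


# Three axons driving two neurons; the same input is applied for three steps.
core = NeuronCore(weights=[[2, 0], [2, 1], [0, 1]])
trace = [core.step([1, 1, 0]) for _ in range(3)]
print(trace)  # [[0, 0], [1, 0], [0, 0]]
```

In this toy model the first neuron accumulates charge over two steps before firing, while the second never fires because its input is cancelled by the leak at every step.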

The great majority of the area and power of a neuron core is spent in the synaptic array. A first primary objective of this work is therefore to optimize this structure by reducing the number of bits dedicated to the synaptic weights, introducing some connectivity constraints (e.g., only a certain percentage of the connections can be enabled simultaneously), and using compression schemes. One possibly interesting approach, inspired by convolutional XNOR-Nets [Rastegari16], is to reduce synaptic weights to one or two bits (connected/unconnected, excitatory/inhibitory).
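To make the one- or two-bit synapse idea concrete, the sketch below encodes each synapse in two bits (one "connected" bit, one "inhibitory" bit) and packs a crossbar row into a single integer. The bit layout, the function names and the 256 x 256 crossbar size used in the storage comparison are assumptions chosen for illustration only; they are not taken from the existing neuron core.

```python
def encode_synapse(weight):
    """Map a ternary weight (-1, 0, +1) to a 2-bit code.

    Assumed layout: bit 1 = connected, bit 0 = inhibitory.
    """
    if weight not in (-1, 0, 1):
        raise ValueError("weight must be -1, 0 or +1")
    connected = 1 if weight != 0 else 0
    inhibitory = 1 if weight < 0 else 0
    return (connected << 1) | inhibitory


def decode_synapse(code):
    """Inverse of encode_synapse; an unconnected synapse always decodes to 0."""
    if not (code >> 1) & 1:
        return 0
    return -1 if code & 1 else 1


def pack_row(weights):
    """Pack one crossbar row into a single integer, 2 bits per synapse."""
    word = 0
    for i, w in enumerate(weights):
        word |= encode_synapse(w) << (2 * i)
    return word


def unpack_row(word, n):
    """Recover n ternary weights from a packed row."""
    return [decode_synapse((word >> (2 * i)) & 0b11) for i in range(n)]


def crossbar_bits(n_axons, n_neurons, bits_per_synapse):
    """Storage needed by a full crossbar, in bits."""
    return n_axons * n_neurons * bits_per_synapse


row = [1, 0, -1, 1]
packed = pack_row(row)
print(packed)                       # 178
print(unpack_row(packed, 4))        # [1, 0, -1, 1]
print(crossbar_bits(256, 256, 8))   # 524288 bits with 8-bit weights (assumed size)
print(crossbar_bits(256, 256, 2))   # 131072 bits with 2-bit synapses
```

Under these assumptions, moving from 8-bit weights to 2-bit synapses reduces crossbar storage by a factor of four; dropping the sign bit would halve it again, at the cost of losing inhibitory connections.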

To develop a full, scalable and functional design on which various SNNs can be deployed, several neuron cores must be connected. To this end, a higher-level synaptic array for the full System-on-Chip must be designed, taking into account even stricter area constraints as well as the need for scalability. Finally, the neural system should be able to communicate with the external world in terms of a) spikes, using the AER interface, and b) standard digital interfaces and/or events, to communicate with conventional computing systems. The neuron core therefore has to be augmented with such systems before it can be considered complete. IPs from the PULP project may be used for this, as they partially overlap with the scope of this project.
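In an address-event representation, each spike is communicated as the address of the neuron that emitted it. The sketch below shows one way such events could be packed for a multi-core system. The field widths (`CORE_BITS`, `NEURON_BITS`), the word layout and the function names are hypothetical; the actual AER format for this project is part of the design work.

```python
CORE_BITS = 6     # assumed: up to 64 neuron cores
NEURON_BITS = 8   # assumed: up to 256 neurons per core


def encode_event(core_id, neuron_id):
    """Pack a spike's source address into one word: [core_id | neuron_id]."""
    if not 0 <= core_id < (1 << CORE_BITS):
        raise ValueError("core_id out of range")
    if not 0 <= neuron_id < (1 << NEURON_BITS):
        raise ValueError("neuron_id out of range")
    return (core_id << NEURON_BITS) | neuron_id


def decode_event(word):
    """Split an event word back into (core_id, neuron_id)."""
    return word >> NEURON_BITS, word & ((1 << NEURON_BITS) - 1)


def spikes_to_events(core_id, spikes):
    """Turn one core's output spike vector into a list of address events."""
    return [encode_event(core_id, n) for n, s in enumerate(spikes) if s]


events = spikes_to_events(1, [0, 1, 1, 0])
print(events)                             # [257, 258]
print([decode_event(e) for e in events])  # [(1, 1), (1, 2)]
```

Only neurons that actually fire produce traffic, which is why this kind of encoding suits sparse spiking activity.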

Outcomes and Acquired Expertise

With this project you will work in an active and exciting field of research to develop a state-of-the-art neuromorphic accelerator for MPSoC and FPGA targets. You will learn:

- how to design a hardware module and integrate it within a more complex platform, using EDA tools for verification and RTL synthesis to evaluate results;

- how spiking neural network models work and how they differ from other neural models.

Required Skills

To work on this project, you will need:

- to have worked in the past with at least one RTL language (SystemVerilog or Verilog or VHDL) - having followed the VLSI1 / VLSI2 courses is recommended

- to have prior knowledge of hardware design and computer architecture - having followed the Advanced System-on-Chip Design course is recommended

Other skills that you might find useful include:

- familiarity with a scripting language for numerical simulation (Python or Matlab or Lua…)

- to be strongly motivated for a difficult but super-cool project

If you want to work on this project but think that you do not match some of the required skills, we can give you some preliminary exercises to help you fill the gap.

Status: Available

- Supervision: Francesco Conti

Professor

Practical Details

Meetings & Presentations

The students and advisor(s) agree on weekly meetings to discuss all relevant decisions and decide on how to proceed. Of course, additional meetings can be organized to address urgent issues.

Around the middle of the project there is a design review, where senior members of the lab review your work (bring all the relevant information, such as preliminary specifications, block diagrams, synthesis reports, testing strategy, ...) to make sure everything is on track and to decide whether further support is necessary. They also make the final decision on whether the chip is actually manufactured (no reason to worry if the project is on track) and whether more chip area, a different package, ... is provided. For more details, refer to [1].

At the end of the project, you have to present/defend your work during a 15 min. presentation and 5 min. of discussion as part of the IIS colloquium.

Literature

- [Merolla14] P. A. Merolla et al., “A million spiking-neuron integrated circuit with a scalable communication network and interface,” Science, vol. 345, no. 6197, pp. 668–673, Aug. 2014.

- [Rastegari16] M. Rastegari et al., “XNOR-Net: ImageNet Classification Using Binary Convolutional Neural Networks,” https://arxiv.org/pdf/1603.05279v4

Links

- The EDA wiki with lots of information on the ETHZ ASIC design flow (internal only) [2]

- The IIS/DZ coding guidelines [3]