A Recurrent Neural Network Speech Recognition Chip

Short Description

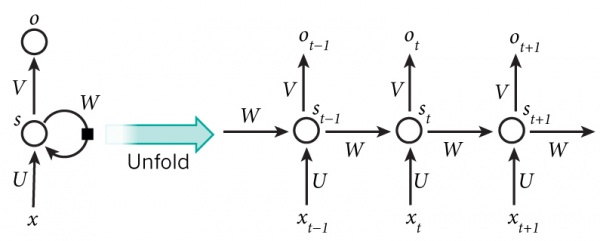

Deep recurrent neural networks (RNNs) have recently received a lot of attention because they can process highly time-correlated data such as written language, speech and even code (e.g. [1]) and extract high-level semantic information from it. One particularly attractive application for embedded and mobile devices is the extraction of phonemes (the basic components of speech) and of words from raw audio coming from a microphone.

For highly energy-constrained devices such as wearables, extracting such high-level information quickly and within a limited power envelope would be an extremely attractive feature. It would enable, for example, smart wake-up and search of local information on a smartwatch without the need to communicate with an external, more powerful device in the cloud. A low-power, energy-efficient ASIC able to implement a generic deep RNN would therefore be a significant step towards this goal.

In this project, you will work on the development of such a chip. You will be provided with a high-level golden model in Python or Matlab and you will:

- develop an application-specific architecture to implement a deep RNN, correctly implementing the functionality specified in the golden model;

- use open-source hardware IPs from the PULP project [2] to implement peripheral functionality for the chip and, if necessary, a small internal microcontroller using the PULPino MCU;

- design a chip using the deep RNN core developed in the previous points.
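As a rough illustration of what a golden model for such a network might look like, the sketch below runs a stack of simple (Elman-style) tanh recurrent layers over a sequence of feature vectors. It is not the golden model provided for the project: the cell type, the function names `rnn_layer_step` and `deep_rnn_forward`, and the weight layout are assumptions made for illustration, and it uses only the Python standard library rather than NumPy.

```python
import math


def rnn_layer_step(x, h_prev, W_x, W_h, b):
    """One time step of a simple recurrent layer.

    Computes h = tanh(W_x @ x + W_h @ h_prev + b) with plain Python lists.
    W_x has shape [hidden][input], W_h has shape [hidden][hidden].
    """
    h = []
    for i in range(len(b)):
        acc = b[i]
        acc += sum(W_x[i][j] * x[j] for j in range(len(x)))
        acc += sum(W_h[i][j] * h_prev[j] for j in range(len(h_prev)))
        h.append(math.tanh(acc))
    return h


def deep_rnn_forward(inputs, layers):
    """Run a stack of recurrent layers over a sequence.

    inputs: list of feature vectors, one per time step.
    layers: list of dicts with keys "W_x", "W_h" and "b" (hypothetical layout).
    Returns the hidden state of the top layer at every time step.
    """
    # Every layer starts from an all-zero hidden state.
    states = [[0.0] * len(layer["b"]) for layer in layers]
    outputs = []
    for x in inputs:
        # The output of layer k at this time step is the input of layer k + 1.
        for k, layer in enumerate(layers):
            states[k] = rnn_layer_step(
                x, states[k], layer["W_x"], layer["W_h"], layer["b"]
            )
            x = states[k]
        outputs.append(x)
    return outputs
```

A reference of this kind is typically used to generate stimuli and expected responses for the hardware testbench, so that the outputs of the RTL implementation can be compared against it time step by time step.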

Status: Completed

Igor Susmelj, Gianna Paulin

- Supervisor: Francesco Conti

- Cosupervisor: Lukas Cavigelli

Prerequisites

- Familiarity with a scripting environment (Matlab and/or Python+Numpy).

- Knowledge of a hardware design language such as (System)Verilog or VHDL.

- VLSI1 and enrolment in VLSI2.

- If the ASIC is manufactured, at least one student has to test the chip as part of the VLSI3 lecture.

Character

- 10% Theory

- 60% Architecture, HW Design & Verification

- 30% VLSI Back-end Design

Professor

Links and References

- [1] WildML, Recurrent Neural Networks Tutorial.

- [2] A. Graves, A. Mohamed and G. Hinton, Speech Recognition with Deep Recurrent Neural Networks.

- [3] A. Graves and N. Jaitly, Towards End-to-End Speech Recognition with Recurrent Neural Networks.

- [4] H. Sak, A. Senior, K. Rao and F. Beaufays, Fast and Accurate Recurrent Neural Network Acoustic Models for Speech Recognition.