Hardware Constrained Neural Architecture Search

Description

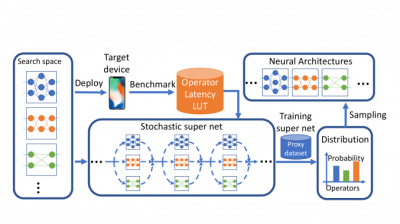

Designing neural networks that are both accurate and efficient is challenging. Most tasks require models that are highly accurate and robust yet compact, and these models are often subject to further constraints on energy usage, memory consumption, and latency. As a result, the search space for manual design is combinatorially large. Neural architecture search (NAS) tackles this problem by automating the design. Many flavors of NAS exist, including differentiable NAS (DNAS) [1], methods based on evolutionary algorithms, and methods based on reinforcement learning [2]. A particularly attractive feature of NAS is that hardware constraints, e.g., on power consumption or memory usage, can guide the search toward state-of-the-art neural networks [3].
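To make the idea of a hardware constraint guiding the search more concrete, the following is a minimal sketch of a latency-aware objective in the style of differentiable NAS. It is not the method used in this project. The multiplicative form (task loss scaled by a power of the log-latency) follows the loss described in the FBNet paper [1]; the operation names, the latency table, and the values of `alpha` and `beta` are illustrative assumptions, and every function name is hypothetical.

```python
import math

# Hypothetical per-operation latencies in milliseconds (illustrative values,
# not measurements from any real device).
OP_LATENCY_MS = {"conv3x3": 1.8, "conv5x5": 3.1, "depthwise3x3": 0.6, "skip": 0.05}


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_latency_ms(arch_logits):
    """Expected latency of one searchable layer: the softmax-weighted
    sum of the candidate operations' latencies."""
    probs = softmax(arch_logits)
    return sum(p * lat for p, lat in zip(probs, OP_LATENCY_MS.values()))


def hardware_aware_loss(task_loss, layer_logits, alpha=0.2, beta=0.6):
    """Scale the task loss by a latency penalty: alpha * log(latency) ** beta.

    alpha and beta are placeholder values chosen for illustration.
    """
    total_latency = sum(expected_latency_ms(logits) for logits in layer_logits)
    if total_latency <= 1.0:
        # log(latency) would be <= 0, so the fractional power is undefined;
        # this sketch assumes the latency unit keeps the total above 1.
        raise ValueError("total latency must exceed 1 in the chosen unit")
    return task_loss * alpha * math.log(total_latency) ** beta


# Two four-layer architectures with the same task loss: one prefers the cheap
# depthwise convolution, the other the expensive 5x5 convolution.
fast_arch = [[0.0, 0.0, 5.0, 0.0]] * 4
slow_arch = [[0.0, 5.0, 0.0, 0.0]] * 4
print(hardware_aware_loss(1.0, fast_arch) < hardware_aware_loss(1.0, slow_arch))
```

Because the expected latency is a smooth function of the architecture logits, a gradient-based search can trade accuracy against latency directly. Evolutionary and reinforcement-learning NAS methods can use a similar penalty inside their fitness or reward function instead.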

Compact and energy-efficient machine learning models can be embedded into small microprocessors for inference. One task where small models are especially interesting is brain-computer interface (BCI) classification. A BCI is a device that enables communication between the human brain and an external device. It aims to recognize the user's intentions from spatiotemporal neural activity, typically recorded by a large set of electroencephalogram (EEG) electrodes. What makes this particularly challenging is its susceptibility to errors in recognizing those intentions, especially during motor imagery (MI). The underlying reason is the high inter-subject variance, which makes it difficult to build one universal model for all subjects.

In this project, the student explores and tests a NAS method in which hardware constraints guide the search for state-of-the-art (SoA) neural networks on BCI-related tasks.

Status: Completed

Student: Mark Vero

- Supervision: Thorir Mar Ingolfsson, Xiaying Wang

Prerequisites

- Machine Learning

- Python

Character

- 20% Theory

- 80% Implementation

Literature

- [1] Wu et al., FBNet: Hardware-Aware Efficient ConvNet Design via Differentiable Neural Architecture Search

- [2] Tan et al., MnasNet: Platform-Aware Neural Architecture Search for Mobile

- [3] Vineeth et al., Hardware Aware Neural Network Architectures (using FBNet)

Professor