MemPool on HERO (1S)



Introduction

Heterogeneous systems combine a general-purpose host processor with domain-specific Programmable Many-Core Accelerators (PMCAs). Such systems are highly versatile thanks to their host processor, while their PMCAs provide high performance and energy efficiency.

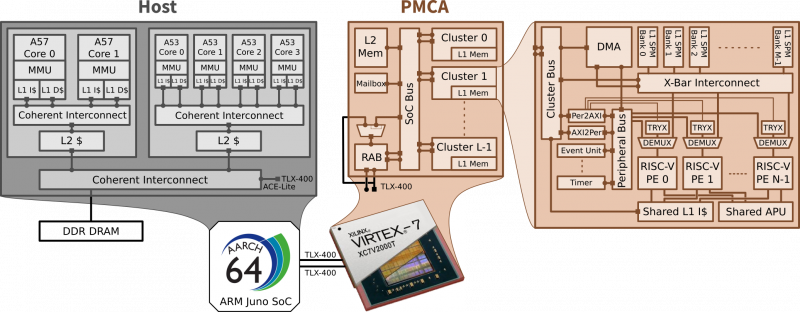

HERO is an FPGA-based research platform developed at IIS. It combines a PMCA, implemented as soft cores on the FPGA fabric, with a hard ARM Cortex-A multicore host processor [1]. Figure 1 shows the block diagram of HERO. HERO features a shared virtual memory system between host and accelerator and provides a heterogeneous compiler toolchain with OpenMP support for acceleration. This keeps the programming model simple and portable and makes it possible to accelerate state-of-the-art software and benchmarks.

HERO currently uses the Parallel Ultra-Low Power (PULP) cluster as its PMCA. The open-source PULP platform is developed at IIS [2] and provides a multicore cluster based on CV32E40P cores [3]. CV32E40P is a 32-bit, in-order processor implementing the RISC-V instruction set architecture (ISA), with four pipeline stages and extensions for signal processing. PULP is designed for high energy efficiency in embedded applications with heavy, parallelizable workloads.
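To illustrate the OpenMP-based programming model, a minimal offloaded kernel might look like the sketch below. It assumes standard OpenMP 4.5 `target` syntax and is not taken from the HERO code base; the function names `vec_add_offload` and `vec_add_check` are hypothetical. With HERO's shared virtual memory, the `map` clauses may not require actual copies, but keeping them makes the intended data movement explicit and keeps the code portable.

```c
#include <assert.h>

/* Hypothetical offload kernel: c[i] = a[i] + b[i].
 * The combined "target parallel for" construct asks the OpenMP runtime to
 * run the loop on the accelerator, split across its cores. The map clauses
 * state which buffers are inputs (to) and which are outputs (from). */
void vec_add_offload(const int *a, const int *b, int *c, int n)
{
    assert(n >= 0);
#pragma omp target parallel for map(to: a[0:n], b[0:n]) map(from: c[0:n])
    for (int i = 0; i < n; i++) {
        c[i] = a[i] + b[i];
    }
}

/* Host-side check of the offloaded result.
 * Returns the number of elements that do not match the expected sum. */
int vec_add_check(const int *a, const int *b, const int *c, int n)
{
    int errors = 0;
    for (int i = 0; i < n; i++) {
        if (c[i] != a[i] + b[i]) {
            errors++;
        }
    }
    return errors;
}
```

When compiled with OpenMP but without a configured accelerator, the `target` region falls back to executing on the host; when compiled without OpenMP, the pragma is ignored and the loop runs sequentially. The same source can therefore be checked on a workstation before it is run on the FPGA.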

Another PMCA developed at IIS is MemPool [4]. It consists of 256 lightweight 32-bit RISC-V cores, called Snitch, which were developed at ETH Zurich [5]. In contrast to CV32E40P, Snitch cores do not implement any domain-specific instructions, which makes them much smaller and allows more cores to fit in the same area.

Project description

The goal of the project is to integrate the MemPool cluster into HERO. The project is divided into the following milestones:

- Preparing the MemPool cluster for acting as an accelerator in HERO

MemPool currently lacks some essential features, such as sleep and wake-up support for its cores and an AXI interconnect to reach control registers and L2 memory. The first step of the project is therefore to prepare the MemPool cluster so that it can be connected to and controlled by a host.

- Integration with HERO

HERO already offers all the hardware and software necessary to interact with a PMCA such as MemPool. In this step, the MemPool system will be integrated into HERO and connected to its accelerator interface.

- Getting the FPGA working

With the hardware assembled, the RTL has to be compiled into an FPGA bitstream. While HERO's hardware is already FPGA-proven, MemPool has never been mapped to an FPGA. This step therefore also involves exploring the best-fitting configuration of MemPool for the given FPGA.

- Running a minimal application on the FPGA

Once MemPool is mapped to HERO, a minimal application should be executed on the system as a proof of concept.

- Adding a DMA to MemPool

MemPool currently has no DMA. To allow efficient data transfers between the accelerator and the host, a DMA has to be added to MemPool. Finally, the performance of the complete system will be evaluated with existing benchmark kernels.

Required skills

To work on this project, you will need:

- to have worked with at least one RTL language (SystemVerilog, Verilog, or VHDL). Having taken the VLSI 1 course is recommended.

- to have prior knowledge of hardware design and computer architecture

- to be motivated to work hard on a super cool open-source project

Status: In progress

- Student: Joan Mihali

- Type: Semester Thesis

- Semester: Autumn Semester 2020

- Professor: Prof. Dr. L. Benini

- Supervisors:


Meetings & Presentations

The student and advisor(s) agree on weekly meetings to discuss all relevant decisions and decide how to proceed. Additional meetings can of course be organized to address urgent issues.

Around the middle of the project, there is a design review in which senior members of the lab review your work to make sure everything is on track and to decide whether further support is necessary. Bring all relevant information, such as preliminary specifications, block diagrams, synthesis reports, and your testing strategy. The reviewers also make the final decision on whether the chip is actually manufactured (no reason to worry if the project is on track) and whether more chip area, a different package, etc. is provided. For more details, refer to (1).

At the end of the project, you have to present and defend your work in a 15-minute presentation followed by 5 minutes of discussion as part of the IIS Colloquium.

References

- [1] A. Kurth, A. Capotondi, P. Vogel, L. Benini, and A. Marongiu, "HERO: An open-source research platform for HW/SW exploration of heterogeneous manycore systems," in ANDARE '18. New York, NY, USA: ACM, 2018.

- [2] F. Conti, D. Rossi, A. Pullini, I. Loi, and L. Benini, "PULP: A ultra-low power parallel accelerator for energy-efficient and flexible embedded vision," J. Signal Process. Syst., vol. 84, no. 3, pp. 339–354, Sep. 2016.

- [3] M. Gautschi, P. D. Schiavone, A. Traber, I. Loi, A. Pullini, D. Rossi, E. Flamand, F. K. Gürkaynak, and L. Benini, "Near-threshold RISC-V core with DSP extensions for scalable IoT endpoint devices," IEEE Transactions on Very Large Scale Integration (VLSI) Systems, vol. 25, no. 10, pp. 2700–2713, 2017.

- [4] M. Cavalcante and S. Riedel, MemPool GitLab Repository, 2020 (accessed August 18, 2020). [Online]. Available: https://iis-git.ee.ethz.ch/mempool/mempool

- [5] F. Zaruba, F. Schuiki, T. Hoefler, and L. Benini, "Snitch: A 10 kGE pseudo dual-issue processor for area and energy efficient execution of floating-point intensive workloads," 2020.