Software-Defined Paging in the Snitch Cluster (2-3S)

Introduction

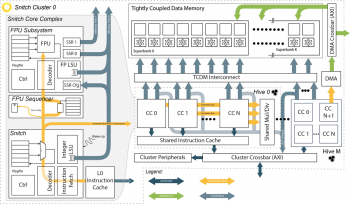

Much of our work on high-performance systems uses the Snitch cluster [1]. This multicore cluster couples tiny RISC-V Snitch cores with large double-precision FPUs and utilization-boosting extensions to maximize the share of area and energy spent on useful computation.

To provide its worker cores with data, the cluster includes a high-throughput DMA engine [2] that moves data between its tightly-coupled scratchpad and external memory. This DMA engine is itself controlled by a Snitch core, making the cluster’s data movement fully programmable.

This paradigm opens up entirely new possibilities for efficient computation scheduling. In many applications, it simplifies the programming model by separating computation and data movement. Ideally, the worker cores are unaware of the data movement and scheduling in the DMA core, and the two only communicate through thread barriers and semaphores.

However, the worker cores must still synchronize with the DMA core when data is replaced; this is fine for most double-buffered data movement schemes, but not ideal for other applications.

Project

What we are missing in the current cluster is a paging mechanism, similar to those in virtual memory systems, which makes memory accesses to a larger memory space transparent to worker cores. With minimal hardware support for page translation, we can use our programmable DMA to implement paging in the cluster scratchpad, possibly without full virtual memory support. Depending on the DMA core code, this can enable regular data movement, caching, and even prefetching with the same hardware.

We propose to determine, using minimal hardware, whether the page requested in each access is present in the cluster's scratchpad. When a worker core uses paging and requests a missing page, the scratchpad blocks the access until the page is available; the necessary page replacement is then done fully in software on the DMA core.
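To make the proposed presence check concrete, the following is a minimal software model in C of a TLB-like lookup. It is only a sketch under stated assumptions: the page size, the number of scratchpad frames, and all names (`tlb_t`, `tlb_lookup`, `tlb_install`) are hypothetical and not part of the Snitch cluster. In hardware, the lookup would be a parallel compare in the scratchpad interconnect rather than a loop.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* All names and sizes are hypothetical, chosen only for illustration. */
#define PAGE_BITS  10u   /* assumed page size: 1 KiB */
#define NUM_FRAMES 4u    /* assumed number of page frames in the scratchpad */

typedef struct {
    bool     valid;      /* frame currently holds a page */
    uint32_t vpn;        /* page index within the larger memory space */
} tlb_entry_t;

typedef struct {
    tlb_entry_t entry[NUM_FRAMES];  /* entry i describes scratchpad frame i */
} tlb_t;

typedef struct {
    bool     hit;        /* false: page fault, the access has to block */
    uint32_t spm_addr;   /* translated scratchpad address, valid on a hit */
    uint32_t vpn;        /* requested page index, reported on a fault */
} lookup_t;

/* Check whether the page containing `addr` is resident and translate it. */
static lookup_t tlb_lookup(const tlb_t *tlb, uint32_t addr) {
    lookup_t r = { .hit = false, .spm_addr = 0u, .vpn = addr >> PAGE_BITS };
    uint32_t offset = addr & ((1u << PAGE_BITS) - 1u);
    for (uint32_t f = 0; f < NUM_FRAMES; f++) {
        if (tlb->entry[f].valid && tlb->entry[f].vpn == r.vpn) {
            r.hit = true;
            r.spm_addr = (f << PAGE_BITS) | offset;
            break;
        }
    }
    return r;
}

/* Called by DMA core software once a page has been copied into `frame`. */
static void tlb_install(tlb_t *tlb, uint32_t frame, uint32_t vpn) {
    assert(frame < NUM_FRAMES);
    tlb->entry[frame].valid = true;
    tlb->entry[frame].vpn = vpn;
}
```

In this model, a failed lookup corresponds to the blocked access: the worker's request would stall until the DMA core has fetched the page and called `tlb_install`, after which the same lookup hits.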

The implementation steps would be as follows:

- Design the hardware enabling paging support:

  - A translation stage in the scratchpad interconnect checking page indices against a TLB-like structure.

  - A minimal interface to the DMA core to signal page faults and their handling.

- Write the paging code for the DMA core:

  - Experiment with page replacement approaches and determine which is most appropriate.

  - Can you demonstrate good results in both reactive (i.e. caching) and proactive (i.e. prefetching) replacement scenarios?

- Evaluate your paging solution:

  - Create a simple demonstrator showcasing the mechanism.

  - Determine the impact on cluster area, performance, and timing.

  - Optimize the hardware and software in accordance with your evaluation.
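As a starting point for the replacement experiments listed above, the paging code on the DMA core could be structured roughly as in the following C sketch. It is a simplified host-side model, not Snitch runtime code: `memcpy` stands in for DMA transfers, the sizes are deliberately tiny, FIFO is just one possible replacement policy, and all names (`pager_t`, `pager_access`, `pager_prefetch_next`) are hypothetical. Evicted pages are always written back; a dirty bit would avoid unnecessary transfers.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical sizes and names, for illustration only. */
#define PAGE_WORDS 4u   /* words per page (tiny, to keep the model readable) */
#define NUM_FRAMES 2u   /* page frames in the scratchpad */
#define NUM_PAGES  8u   /* pages in the external memory */

typedef struct {
    uint32_t ext[NUM_PAGES][PAGE_WORDS];   /* stands in for external memory */
    uint32_t spm[NUM_FRAMES][PAGE_WORDS];  /* stands in for the scratchpad */
    bool     valid[NUM_FRAMES];            /* frame holds a page */
    uint32_t vpn[NUM_FRAMES];              /* which page each frame holds */
    uint32_t next_victim;                  /* FIFO replacement pointer */
    uint32_t transfers;                    /* number of modeled DMA copies */
} pager_t;

/* Return the frame holding page `vpn`, or -1 if it is not resident. */
static int pager_find(const pager_t *p, uint32_t vpn) {
    for (uint32_t f = 0; f < NUM_FRAMES; f++)
        if (p->valid[f] && p->vpn[f] == vpn) return (int)f;
    return -1;
}

/* Fault handler: evict the FIFO victim, write it back, fetch the new page. */
static uint32_t pager_handle_fault(pager_t *p, uint32_t vpn) {
    assert(vpn < NUM_PAGES);
    uint32_t f = p->next_victim;
    p->next_victim = (f + 1u) % NUM_FRAMES;
    if (p->valid[f]) {  /* unconditional write-back of the evicted page */
        memcpy(p->ext[p->vpn[f]], p->spm[f], sizeof p->spm[f]);
        p->transfers++;
    }
    memcpy(p->spm[f], p->ext[vpn], sizeof p->spm[f]);
    p->transfers++;
    p->vpn[f] = vpn;
    p->valid[f] = true;
    return f;
}

/* Reactive (caching) use: fetch a page only when an access misses. */
static uint32_t pager_access(pager_t *p, uint32_t vpn) {
    int f = pager_find(p, vpn);
    return (f >= 0) ? (uint32_t)f : pager_handle_fault(p, vpn);
}

/* Proactive (prefetching) use: bring in the next sequential page early. */
static void pager_prefetch_next(pager_t *p, uint32_t vpn) {
    uint32_t next = vpn + 1u;
    if (next < NUM_PAGES && pager_find(p, next) < 0)
        pager_handle_fault(p, next);
}
```

Keeping victim selection inside `pager_handle_fault` means that swapping FIFO for another policy (for example LRU or a clock scheme) only changes how `f` is chosen, which makes it easier to compare policies against the same demonstrator.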

If the student(s) are interested and motivated, further aspects can be investigated, such as:

- Page ownership and access mechanisms in multi-cluster systems

- Integration with the ISA-level virtual memory support in Snitch

Requirements

- A strong interest in and fundamental understanding of computer architecture

- Experience with RTL design and SystemVerilog as taught in VLSI I

- Preferred: prior experience with architecture design and SoCs

- Preferred: prior knowledge in embedded or systems programming

Composition

- 25% Architecture specification

- 25% RTL Implementation

- 25% System programming

- 25% Evaluation and demonstration