Investigating the Cost of Special-Case Handling in Low-Precision Floating-Point Dot Product Units (1S)

From iis-projects

Lbertaccini (talk | contribs) (Created page with "<!-- Investigating the Cost of Special-Case Handling in Low-Precision Floating-Point Dot Product Units (1S) --> Category:Digital Category:Acceleration_and_Transprecisio...") |


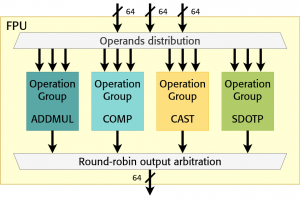

[[File:Fpu_block_diagram.png|thumb|300px|FPnew block diagram [1]. Each operation group block can be instantiated through a parameter. In the figure, the FPU was instantiated without a DivSqrt module.]]


Revision as of 13:41, 7 November 2022


Overview

Status: Available

- Type: Semester Thesis

- Professor: Prof. Dr. L. Benini

- Supervisors:

Introduction

Low-precision floating-point (FP) formats are gaining traction in the context of neural network (NN) training. Employing low-precision formats, such as 8-bit FP data types, reduces the model's memory footprint and opens new opportunities to increase the system's energy efficiency.

A low-precision FP dot product unit was recently developed at IIS [1], [2]. The module computes dot products on 8- or 16-bit operands and accumulates the result at a higher precision. It has been designed to follow the IEEE 754 standard. However, supporting all the special cases can be costly in hardware, and some of these special cases might be unnecessary for low-precision training. The goal of this project is to evaluate these costs.
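To make the notion of "special cases" concrete, the following is a minimal behavioural sketch in Python, not the RTL of the unit. It assumes an 8-bit format with 1 sign, 5 exponent and 2 mantissa bits, and uses Python doubles as a stand-in for the wider accumulator, so rounding is not modelled. The names `decode_fp8`, `sdotp` and the `ieee_special_cases` switch are hypothetical and only serve the illustration. With the switch off, subnormals are flushed to zero and the all-ones exponent is decoded as an ordinary number, which is one possible simplification among many.

```python
import math

# Assumed 8-bit layout for this sketch: 1 sign, 5 exponent, 2 mantissa bits.
EXP_BITS, MAN_BITS = 5, 2
BIAS = (1 << (EXP_BITS - 1)) - 1  # 15


def decode_fp8(byte, ieee_special_cases=True):
    """Decode one 8-bit pattern into a Python float (hypothetical helper)."""
    sign = -1.0 if (byte >> 7) & 1 else 1.0
    exp = (byte >> MAN_BITS) & ((1 << EXP_BITS) - 1)
    man = byte & ((1 << MAN_BITS) - 1)

    if exp == 0:
        if not ieee_special_cases:
            # Simplified variant: flush subnormals to zero.
            return sign * 0.0
        # IEEE-style subnormal (or zero): no implicit leading one.
        return sign * man * 2.0 ** (1 - BIAS - MAN_BITS)

    if exp == (1 << EXP_BITS) - 1 and ieee_special_cases:
        # IEEE-style infinities and NaNs.
        return sign * math.inf if man == 0 else math.nan

    # Normal number; in the simplified variant this also covers the
    # all-ones exponent, which is then just another binade.
    return sign * (1 + man / (1 << MAN_BITS)) * 2.0 ** (exp - BIAS)


def sdotp(a, b, c, d, acc, ieee_special_cases=True):
    """Compute a*b + c*d + acc on 8-bit inputs with a wide accumulator."""
    va, vb, vc, vd = (decode_fp8(x, ieee_special_cases) for x in (a, b, c, d))
    return va * vb + vc * vd + acc


if __name__ == "__main__":
    # 0x7C encodes +inf in the IEEE-style decoding; inf * 0 yields NaN.
    print(sdotp(0x7C, 0x00, 0x3C, 0x3C, 0.0))
    # Same inputs with special-case handling removed.
    print(sdotp(0x7C, 0x00, 0x3C, 0x3C, 0.0, ieee_special_cases=False))
```

In hardware, each branch in `decode_fp8` corresponds to extra detection and selection logic on every operand, which is the kind of cost this project sets out to quantify.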

Project

- Investigation of the Sdotp unit and its fundamental blocks

- RTL modifications to the Sdotp unit. Support for special cases will be incrementally removed or modified, and the hardware cost of each special case will be assessed.

Character

- 20% Literature / architecture review

- 40% RTL implementation

- 40% Evaluation

Prerequisites

- Strong interest in computer architecture

- Experience with digital design in SystemVerilog as taught in VLSI I

- Experience with ASIC implementation flow (synthesis) as taught in VLSI II

References

[1] https://arxiv.org/abs/2207.03192 MiniFloat-NN and ExSdotp: An ISA Extension and a Modular Open Hardware Unit for Low-Precision Training on RISC-V cores

[2] https://github.com/openhwgroup/cvfpu/tree/feature/expanding_dotp