Difference between revisions of "Approximate Matrix Multiplication based Hardware Accelerator to achieve the next 10x in Energy Efficiency: Full System Intregration"

From iis-projects

(Created page with "<!-- Approximate Matrix Multiplication based Hardware Accelerator to achieve the next 10x in Energy Efficiency: Full System Integration (2S,1M) --> Category:Digital Cat...") |

|||

| (18 intermediate revisions by 2 users not shown) | |||

| Line 1: | Line 1: | ||

<!-- Approximate Matrix Multiplication based Hardware Accelerator to achieve the next 10x in Energy Efficiency: Full System Integration (2S,1M) --> | <!-- Approximate Matrix Multiplication based Hardware Accelerator to achieve the next 10x in Energy Efficiency: Full System Integration (2S,1M) --> | ||

| − | + | ||

| − | [[Category: | + | [[Category:Acceleration and Transprecision]] |

[[Category:High Performance SoCs]] | [[Category:High Performance SoCs]] | ||

[[Category:Computer Architecture]] | [[Category:Computer Architecture]] | ||

| + | [[Category:Deep Learning Acceleration]] | ||

| + | [[Category:Deep Learning Projects]] | ||

| + | [[Category:Available]] | ||

| + | [[Category:Digital]] | ||

[[Category:2022]] | [[Category:2022]] | ||

| + | |||

[[Category:Semester Thesis]] | [[Category:Semester Thesis]] | ||

[[Category:Master Thesis]] | [[Category:Master Thesis]] | ||

[[Category:Available]] | [[Category:Available]] | ||

| + | [[Category:Janniss]] | ||

| + | [[User:Janniss]] | ||

= Overview = | = Overview = | ||

| + | |||

| + | == PDF of project == | ||

| + | |||

| + | [https://iis-people.ee.ethz.ch/~janniss/projects/Maddness_system_integration.pdf Maddness System Integration] | ||

| + | |||

| + | == Git Repository of project == | ||

| + | |||

| + | [https://github.com/joennlae/halutmatmul Github] | ||

== Status: Available == | == Status: Available == | ||

| Line 17: | Line 32: | ||

* Professor: Prof. Dr. L. Benini | * Professor: Prof. Dr. L. Benini | ||

* Supervisors: | * Supervisors: | ||

| − | ** Jannis Schönleber: [mailto:janniss@ethz.ch janniss@ethz.ch] | + | ** Jannis Schönleber: [mailto:janniss@iis.ee.ethz.ch janniss@iis.ee.ethz.ch] |

| − | ** Lukas Cavigelli (Huawei) | + | * External Advisors: |

| − | ** Renzo Andri (Huawei) | + | ** Dr. Lukas Cavigelli (Huawei Research Zurich) |

| + | ** Dr. Renzo Andri (Huawei Research Zurich) | ||

= Introduction = | = Introduction = | ||

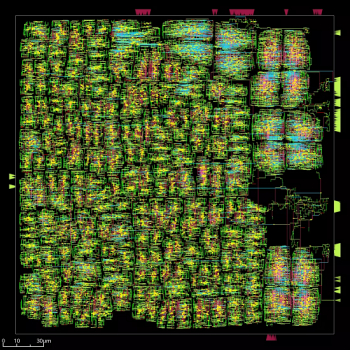

| − | The continued growth in DNN model parameter count, application domains and general adoption led to an explosion of the needed computing power and energy. Especially the energy needs have become large enough to be economically unviable or extremely difficult to cool down. That led to a push for more energy-efficient solutions. Energy efficient accelerator solutions have a long tradition in IIS, with | + | [[File:maddness_floorplan.png|thumb|350px|Floorplan of the Maddness Accelerator.]] |

| − | + | The continued growth in DNN model parameter count, application domains, and general adoption led to an explosion of the needed computing power and energy. Especially the energy needs have become large enough to be economically unviable or extremely difficult to cool down. That led to a push for more energy-efficient solutions. Energy-efficient accelerator solutions have a long tradition in IIS, with many proven accelerators published in the past. Standard accelerator architectures try to increase throughput via higher memory bandwidth, improved memory hierarchy, or reduced precision (FP16, INT8, INT4). The approach of the accelerator used in the project is a different one. It uses an approximate matrix multiplication (AMM) algorithm called MADDness, which replaces the matrix multiplication with a lookup into a look-up-table (LUT) and an addition. That can significantly reduce the overall computing and energy needs. | |

| − | |||

= Project Details = | = Project Details = | ||

| − | The MADDness algorithm is split into two parts. | + | The MADDness algorithm is split into two parts. First, we have an encoding part, which translates the input matrix A into the addresses of the LUT. After the translation follows a decoding part that adds the corresponding LUT values together to calculate the approximate output of the matrix multiplication. MADDness is then integrated into deep neural networks. The most commonly seen layers in DNNs are convolutional layers and linear layers, MADDness can replace both. The current RTL implementation is fully post-simulation tested and includes both the encoder and decoder unit. Additionally, a post-layout simulation-based energy estimation has been done. However, the accelerator is not yet integrated into a complete system. |

Energy estimates with the current implementation using GF 22nm FDX technology suggest an energy efficiency of up to 32 TMACs/W compared to a state-of-the-art datacenter NVIDIA A100 (TSMC 7nm FinFET) at around 0.7 TMACs/W (FP16). | Energy estimates with the current implementation using GF 22nm FDX technology suggest an energy efficiency of up to 32 TMACs/W compared to a state-of-the-art datacenter NVIDIA A100 (TSMC 7nm FinFET) at around 0.7 TMACs/W (FP16). | ||

| − | In this project, we | + | In this project, we want to integrate the accelerator into a complete system. The aim at the end would be to have a tape-out-ready complete system that includes the MADDness accelerator. A full design consists of a suitable memory hierarchy to support the bandwidth needs. In addition, we envision integrating one of the existing PULP systems (for example, PULP clusters or ARA). The evaluation of which system suits the accelerator the best and defining the final architecture is part of the thesis. |

More information can be found here: | More information can be found here: | ||

* Code: https://github.com/joennlae/halutmatmul | * Code: https://github.com/joennlae/halutmatmul | ||

* Reference Paper: https://arxiv.org/abs/2106.10860 | * Reference Paper: https://arxiv.org/abs/2106.10860 | ||

* HN discussion: https://news.ycombinator.com/item?id=28375096 | * HN discussion: https://news.ycombinator.com/item?id=28375096 | ||

| − | * and please do not hesitate to reach out to me: janniss@ethz.ch | + | * and please do not hesitate to reach out to me: janniss@iis.ee.ethz.ch |

= Project Plan = | = Project Plan = | ||

| − | Acquire background knowledge & familiarize with the project | + | Acquire background knowledge & familiarize yourself with the project |

* Read up on the MADDness algorithm and product quantization methods | * Read up on the MADDness algorithm and product quantization methods | ||

* Familiarize yourself with the current state of the project | * Familiarize yourself with the current state of the project | ||

| − | * Familiarize with the IIS | + | * Familiarize with the IIS computing environment |

Setup the project & rerun RTL simulations | Setup the project & rerun RTL simulations | ||

* Setup the project with all its dependencies | * Setup the project with all its dependencies | ||

| Line 47: | Line 62: | ||

Evaluate suitable systems to integrate and refine an architecture | Evaluate suitable systems to integrate and refine an architecture | ||

* Define bandwidth needs and brainstorm suitable memory hierarchies | * Define bandwidth needs and brainstorm suitable memory hierarchies | ||

| − | * Spreadsheet based evaluation of the different target systems like PULP clusters, ARA etc. this includes exploring different configurations and estimated size of the chip | + | * Spreadsheet-based evaluation of the different target systems like PULP clusters, ARA, etc. this includes exploring different configurations and estimated size of the chip |

| − | * Decide on a final architecture that we will pursue | + | * Decide on a final architecture that we will pursue the remainder of the project |

Integrate the accelerator into the defined architecture | Integrate the accelerator into the defined architecture | ||

| − | * Implementing the integration in SystemVerilog & add | + | * Implementing the integration in SystemVerilog & add test benches |

* Replace the currently used standard cell memories with compiled memories | * Replace the currently used standard cell memories with compiled memories | ||

Setup the design flow | Setup the design flow | ||

| Line 60: | Line 75: | ||

* The goal is to have everything ready for a design review: http://eda.ee.ethz.ch/index.php?title=Design_review (ETH domain) | * The goal is to have everything ready for a design review: http://eda.ee.ethz.ch/index.php?title=Design_review (ETH domain) | ||

Project finalization | Project finalization | ||

| − | * Prepare final report | + | * Prepare a final report |

* Prepare project presentation | * Prepare project presentation | ||

* Clean up code | * Clean up code | ||

| Line 67: | Line 82: | ||

== Character == | == Character == | ||

| − | * 15% Literature / architecture review | + | * 15% Literature/architecture review |

* 15% Design Evaluation | * 15% Design Evaluation | ||

| − | * 30% RTL implementation | + | * 30% RTL implementation (SystemVerilog) |

| − | * | + | * 10% low-level software implementation (C) |

| + | * 30% ASIC tape-out preparation | ||

== Prerequisites == | == Prerequisites == | ||

| Line 77: | Line 93: | ||

* Experience with digital design in SystemVerilog as taught in VLSI I | * Experience with digital design in SystemVerilog as taught in VLSI I | ||

* Experience with ASIC implementation flow (synthesis) as taught in VLSI II | * Experience with ASIC implementation flow (synthesis) as taught in VLSI II | ||

| + | * Lite experience with C or comparable language for low-level SW glue code | ||

| + | |||

| + | If you want to work on this project but think you do not match some of the required skills, please contact us, and we can provide preliminary exercises to help you fill in the gap. | ||

===Status: Available === | ===Status: Available === | ||

Latest revision as of 16:50, 3 November 2022


Overview

PDF of project

Git Repository of project

Status: Available

- Type: Semester Thesis (2 students), Master Thesis (1 student)

- Professor: Prof. Dr. L. Benini

- Supervisors:

- Jannis Schönleber: janniss@iis.ee.ethz.ch

- External Advisors:

- Dr. Lukas Cavigelli (Huawei Research Zurich)

- Dr. Renzo Andri (Huawei Research Zurich)

Introduction

The continued growth in DNN parameter counts, application domains, and general adoption has led to an explosion in the computing power and energy required. Energy demand in particular has grown to the point where deployments become economically unviable or extremely difficult to cool, which has driven a push for more energy-efficient solutions. Energy-efficient accelerators have a long tradition at IIS, with many proven designs published in the past. Standard accelerator architectures try to increase throughput via higher memory bandwidth, an improved memory hierarchy, or reduced precision (FP16, INT8, INT4). The accelerator used in this project takes a different approach: it uses an approximate matrix multiplication (AMM) algorithm called MADDness, which replaces the matrix multiplication with lookups into a lookup table (LUT) and additions. This can significantly reduce the overall compute and energy requirements.

Project Details

The MADDness algorithm is split into two parts. First, an encoding part translates the input matrix A into addresses of the LUT. Then a decoding part adds the corresponding LUT values together to compute the approximate output of the matrix multiplication. MADDness is then integrated into deep neural networks: the most common layers in DNNs are convolutional and linear layers, and MADDness can replace both. The current RTL implementation is fully post-simulation tested and includes both the encoder and the decoder unit. Additionally, a post-layout simulation-based energy estimation has been done. However, the accelerator is not yet integrated into a complete system.

Energy estimates with the current implementation using GF 22nm FDX technology suggest an energy efficiency of up to 32 TMACs/W, compared to around 0.7 TMACs/W (FP16) for a state-of-the-art datacenter NVIDIA A100 (TSMC 7nm FinFET).

In this project, we want to integrate the accelerator into a complete system. The goal is a tape-out-ready complete system that includes the MADDness accelerator. A full design includes a suitable memory hierarchy to support the bandwidth needs. In addition, we envision integrating one of the existing PULP systems (for example, PULP clusters or ARA). Evaluating which system suits the accelerator best and defining the final architecture are part of the thesis.

More information can be found here:

- Code: https://github.com/joennlae/halutmatmul

- Reference Paper: https://arxiv.org/abs/2106.10860

- HN discussion: https://news.ycombinator.com/item?id=28375096

- and please do not hesitate to reach out to me: janniss@iis.ee.ethz.ch
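
The encode/decode split described above can be illustrated with a small NumPy sketch. This is not the project's implementation: MADDness uses a learned hash function for encoding, whereas this sketch swaps in a plain nearest-prototype search (as in classic product quantization) to keep the example short. The function names `build_lut`, `encode`, and `decode`, and the `prototypes` array, are hypothetical and chosen only for illustration.

```python
import numpy as np

def build_lut(prototypes, B):
    """Precompute the LUT offline: dot products of every prototype with B.

    prototypes: (C, K, d_sub) array, K prototypes for each of C subspaces.
    B:          (C * d_sub, M) weight matrix, known ahead of time.
    Returns a (C, K, M) lookup table.
    """
    C, K, d_sub = prototypes.shape
    B_sub = B.reshape(C, d_sub, -1)  # split the rows of B per subspace
    return np.einsum("ckd,cdm->ckm", prototypes, B_sub)

def encode(A, prototypes):
    """Map each row of A to one LUT address per subspace.

    Assumption: nearest-prototype search stands in for the MADDness hash.
    Returns integer codes of shape (N, C).
    """
    C, K, d_sub = prototypes.shape
    A_sub = A.reshape(A.shape[0], C, d_sub)  # split the columns of A
    diff = A_sub[:, :, None, :] - prototypes[None, :, :, :]
    return (diff ** 2).sum(axis=-1).argmin(axis=-1)

def decode(codes, lut):
    """Sum the selected LUT rows over all subspaces: lookups and additions only."""
    C = lut.shape[0]
    selected = lut[np.arange(C)[None, :], codes, :]  # (N, C, M)
    return selected.sum(axis=1)                      # (N, M)
```

With a precomputed table `lut = build_lut(prototypes, B)`, the approximate product is `decode(encode(A, prototypes), lut)`. The result equals `A @ B` exactly only when every sub-vector of `A` coincides with a prototype; otherwise the error depends on how well the prototypes cover the input distribution.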

Project Plan

Acquire background knowledge & familiarize yourself with the project

- Read up on the MADDness algorithm and product quantization methods

- Familiarize yourself with the current state of the project

- Familiarize yourself with the IIS computing environment

Set up the project & rerun RTL simulations

- Set up the project with all its dependencies

- Try to rerun the current RTL simulations

Evaluate suitable systems to integrate and refine an architecture

- Define bandwidth needs and brainstorm suitable memory hierarchies

- Spreadsheet-based evaluation of the different target systems such as PULP clusters, ARA, etc.; this includes exploring different configurations and the estimated chip size

- Decide on a final architecture that we will pursue for the remainder of the project
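
As a starting point for the bandwidth definition above, a back-of-the-envelope estimate might look like the following sketch. The `Stream` class, the stream names, and every number used with it are placeholders for illustration; none of them come from the actual accelerator and they would have to be replaced with values derived from the RTL.

```python
from dataclasses import dataclass

@dataclass
class Stream:
    """One data stream into or out of the accelerator (hypothetical model)."""
    name: str
    bits_per_cycle: int  # bits moved per clock cycle; placeholder value

def bandwidth_gb_per_s(stream, clock_hz):
    """Sustained bandwidth of a single stream in GB/s (1 GB = 1e9 bytes)."""
    return stream.bits_per_cycle * clock_hz / 8 / 1e9

def total_bandwidth_gb_per_s(streams, clock_hz):
    """Sum of all stream bandwidths that the memory hierarchy must sustain."""
    return sum(bandwidth_gb_per_s(s, clock_hz) for s in streams)
```

For example, `total_bandwidth_gb_per_s([Stream("input", 64), Stream("output", 64)], 1e9)` gives the combined requirement for two assumed 64-bit streams at an assumed 1 GHz clock. The same structure maps directly onto one row per stream in the spreadsheet-based evaluation.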

Integrate the accelerator into the defined architecture

- Implement the integration in SystemVerilog & add test benches

- Replace the currently used standard cell memories with compiled memories

Set up the design flow

- Set up and integrate the (most likely tsmc65) design flow into the project

Synthesize + Place-and-Route & make design tape-out ready

- Synthesize + Place-and-Route the design

- Get a working post-layout simulation

- Place macros & power routing, IR drop checks

- The goal is to have everything ready for a design review: http://eda.ee.ethz.ch/index.php?title=Design_review (ETH domain)

Project finalization

- Prepare a final report

- Prepare project presentation

- Clean up code

Character

- 15% Literature/architecture review

- 15% Design Evaluation

- 30% RTL implementation (SystemVerilog)

- 10% low-level software implementation (C)

- 30% ASIC tape-out preparation

Prerequisites

- Strong interest in computer architecture

- Experience with digital design in SystemVerilog as taught in VLSI I

- Experience with ASIC implementation flow (synthesis) as taught in VLSI II

- Some experience with C or a comparable language for low-level SW glue code

If you want to work on this project but think you do not match some of the required skills, please contact us, and we can provide preliminary exercises to help you fill in the gap.