Difference between revisions of "Autonomous Obstacle Avoidance with Nano-Drones and Novel Depth Sensors"

From iis-projects

(Created page with "<!-- Creating Autonomous Obstacle Avoidance with Nano-Drones and Novel Depth Sensors --> Category:Vladn Category:UAV Category:UWB Category:Digital Catego...") |

(→Project Description) |

||

| (53 intermediate revisions by the same user not shown) | |||

| Line 1: | Line 1: | ||

<!-- Creating Autonomous Obstacle Avoidance with Nano-Drones and Novel Depth Sensors --> | <!-- Creating Autonomous Obstacle Avoidance with Nano-Drones and Novel Depth Sensors --> | ||

| − | |||

[[Category:UAV]] | [[Category:UAV]] | ||

| − | |||

[[Category:Digital]] | [[Category:Digital]] | ||

[[Category:2022]] | [[Category:2022]] | ||

| Line 9: | Line 7: | ||

[[Category:Semester Thesis]] | [[Category:Semester Thesis]] | ||

[[Category:Available]] | [[Category:Available]] | ||

| − | + | [[Category:Vladn]] | |

| − | |||

== Status: Available == | == Status: Available == | ||

| Line 18: | Line 15: | ||

* Supervisors: | * Supervisors: | ||

** [[:User:Vladn | Vlad Niculescu]]: [mailto:vladn@iis.ee.ethz.ch vladn@iis.ee.ethz.ch] | ** [[:User:Vladn | Vlad Niculescu]]: [mailto:vladn@iis.ee.ethz.ch vladn@iis.ee.ethz.ch] | ||

| + | ** [[:User:hanmuell | Hanna Müller]]: [mailto:hanmuell@iis.ee.ethz.ch hanmuell@iis.ee.ethz.ch] | ||

== Project Description == | == Project Description == | ||

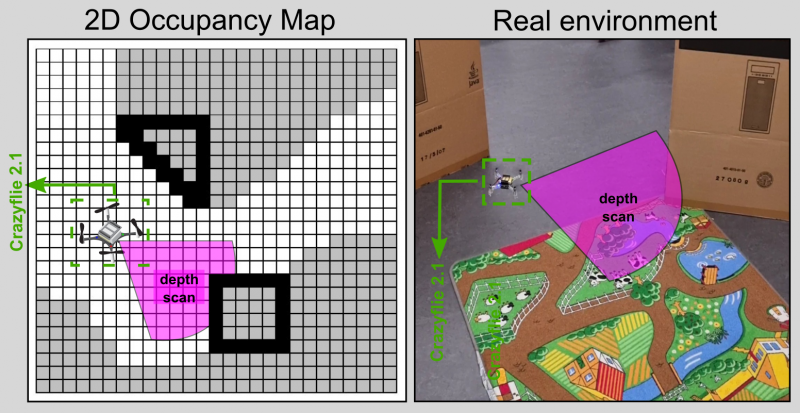

| + | [[File:occupancy-map.png|thumb|right|800px| Mapping the environment using the novel ToF matrix sensor (VL53L5CX). Figure adapted from [6]]] | ||

| + | |||

| + | One reason for the high research interest in the field of UAVs is their potential to autonomously navigate indoors while avoiding obstacles. Performing this task includes several challenges, such as online perception, control, trajectory optimization, and localization. The small form-factor category represents an even more promising class of UAVs. The UAVs in this class (i.e., nano-UAVs) measure a few centimeters in size and weigh a few tens of grams. They are considered the ideal candidates for navigating in very narrow indoor areas for monitoring and inspection purposes. | ||

| + | |||

| + | Vision-based perception algorithms used routinely on standard-size drones are based on simultaneous localization and mapping (SLAM) – a perception technique that builds a 3D local map of the environment – or end-to-end convolutional neural networks (CNNs). However, due to the large number of pixels typically associated with images, this approach still requires a large number of computations per frame. | ||

| + | |||

| + | However, novel sensors provide an alternative to vision-based solutions, such as the VL53L5CX from STMicroelectronics, a miniaturized, and lightweight optical multi-zone time-of-flight sensor targeted for indoor perception and autonomous navigation purposes. The VL53L5CX features a matrix of 8x8 ToF elements in a compact integrated solution that represents a negligible payload even for a nano-drone. Indeed, the nature | ||

| + | of navigation algorithms intrinsically requires extracting the depth, which is directly provided by the optical sensor. Due to this fact, obstacle avoidance and navigation can be performed with a reduced number of pixels (i.e., 64 pixels). | ||

| + | |||

| + | Your goal is to develop an effective and robust obstacle avoidance algorithm that runs entirely on-board. The algorithm will acquire and interpret the depth frames and it will steer the drone accordingly so that it does not collide with the obstacles. Furthermore, to further extend the capabilities of the system, you will have to implement a path planning solution that optimally drives the drone to a target point based on a local occupancy map that the drone can construct using the depth information. | ||

| + | |||

| + | A preliminary version of such an algorithm has already been implemented. | ||

| − | + | Video 1: https://www.youtube.com/watch?v=cU40pqu24bw | |

| − | |||

| − | + | Video 2: https://youtu.be/mZQEHMGTZW8 | |

| − | |||

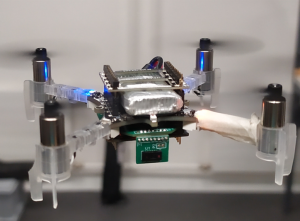

| − | [[File: | + | [[File:dronepic.png|thumb|center|300|The Crazyflie 2.1 featuring our custom deck based on the multi-zone ToF sensor]] |

== Character == | == Character == | ||

| − | * | + | * 30% Literature and algorithm development |

| − | * 30% | + | * 30% FreeRTOS C programming (STM32 Platform) |

| − | * | + | * 40% In-field evaluation and testing |

| − | |||

== Prerequisites == | == Prerequisites == | ||

| Line 42: | Line 49: | ||

= References = | = References = | ||

| − | [1] | + | * [1] V. Niculescu et al., "Improving Autonomous Nano-drones Performance via Automated End-to-End Optimization and Deployment of DNNs," in IEEE Journal on Emerging and Selected Topics in Circuits and Systems https://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=9606685 |

| + | * [2] K. McGuire et al., "A comparative study of bug algorithms for robot navigation," in Robotics and Autonomous Systems. https://www.sciencedirect.com/science/article/pii/S0921889018306687 | ||

| + | * [3] STM time-of-flight (ToF) matrix https://www.st.com/en/imaging-and-photonics-solutions/vl53l5cx.html | ||

| + | * [4] Bitcraze Crazyflie 2.1 https://www.bitcraze.io/products/crazyflie-2-1/ | ||

| + | * [5] PULP Project http://iis-projects.ee.ethz.ch/index.php/PULP | ||

| + | * [6] Mojtahedzadeh et al. "Robot obstacle avoidance using the Kinect." Master of Science Thesis Stockholm, Sweden (2011). | ||

Latest revision as of 14:07, 10 March 2022

Status: Available

- Type: Master Thesis, Semester Project

- Professor: Prof. Dr. L. Benini

- Supervisors:

Project Description

One reason for the high research interest in UAVs is their potential to navigate indoors autonomously while avoiding obstacles. This task involves several challenges, such as online perception, control, trajectory optimization, and localization. Small form-factor UAVs are an even more promising class: these nano-UAVs measure a few centimeters in size and weigh a few tens of grams, which makes them ideal candidates for navigating very narrow indoor areas for monitoring and inspection purposes.

Vision-based perception algorithms used routinely on standard-size drones rely either on simultaneous localization and mapping (SLAM), a perception technique that builds a 3D local map of the environment, or on end-to-end convolutional neural networks (CNNs). However, because images typically contain a large number of pixels, both approaches require a large number of computations per frame.

Novel depth sensors provide an alternative to vision-based solutions. One example is the VL53L5CX from STMicroelectronics, a miniaturized, lightweight optical multi-zone time-of-flight (ToF) sensor targeted at indoor perception and autonomous navigation. The VL53L5CX features a matrix of 8x8 ToF elements in a compact integrated solution that represents a negligible payload even for a nano-drone. Navigation algorithms intrinsically need depth information, and the optical sensor provides it directly. As a result, obstacle avoidance and navigation can be performed with a reduced number of pixels (i.e., 64 pixels per frame).
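To illustrate how little computation a 64-zone frame needs, here is a minimal sketch of a reactive steering decision. It is not the project's algorithm or the ST driver API. The frame layout (row-major `uint16_t` distances in millimetres, column 0 on the drone's left), the use of 0 as an invalid-zone marker, and the names `min_distance_mm`, `choose_steering`, and `safe_mm` are all assumptions made for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define TOF_ROWS 8
#define TOF_COLS 8
#define TOF_INVALID_MM 0u /* assumption: 0 marks a zone without a valid reading */

typedef enum { STEER_LEFT = -1, STEER_STRAIGHT = 0, STEER_RIGHT = 1 } steer_t;

/* Smallest valid distance (mm) over all rows in columns [col_begin, col_end).
 * Returns UINT16_MAX when no zone in that range holds a valid reading. */
static uint16_t min_distance_mm(const uint16_t frame[TOF_ROWS * TOF_COLS],
                                size_t col_begin, size_t col_end)
{
    assert(frame != NULL && col_begin < col_end && col_end <= TOF_COLS);
    uint16_t best = UINT16_MAX;
    for (size_t r = 0; r < TOF_ROWS; ++r) {
        for (size_t c = col_begin; c < col_end; ++c) {
            uint16_t d = frame[r * TOF_COLS + c];
            if (d != TOF_INVALID_MM && d < best) {
                best = d;
            }
        }
    }
    return best;
}

/* Keep flying straight while the four central columns are clear up to
 * safe_mm; otherwise turn toward the half of the frame whose closest
 * obstacle is farther away (ties resolve to the right).
 * Simplification: zones without a valid reading are treated as free. */
steer_t choose_steering(const uint16_t frame[TOF_ROWS * TOF_COLS],
                        uint16_t safe_mm)
{
    uint16_t center = min_distance_mm(frame, 2, 6);
    if (center >= safe_mm) {
        return STEER_STRAIGHT;
    }
    uint16_t left = min_distance_mm(frame, 0, 4);
    uint16_t right = min_distance_mm(frame, 4, 8);
    return (left > right) ? STEER_LEFT : STEER_RIGHT;
}
```

The whole decision touches each of the 64 zones at most three times, which is why such a check fits comfortably on a microcontroller. A real controller would additionally filter noisy zones and map the decision to yaw-rate and velocity setpoints.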

Your goal is to develop an effective and robust obstacle avoidance algorithm that runs entirely on-board. The algorithm will acquire and interpret the depth frames and steer the drone so that it does not collide with obstacles. To extend the capabilities of the system further, you will also implement a path planning solution that optimally drives the drone to a target point, based on a local occupancy map that the drone constructs from the depth information.
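As a rough sketch of how a local occupancy map could be built from depth readings, the code below integrates a single range measurement into a small 2D grid: cells along the ray are marked free and the cell at the measured distance is marked occupied. The grid size, the cell size, the type `occupancy_grid_t`, and the functions `grid_clear` and `grid_integrate_ray` are hypothetical choices for illustration, not part of the existing implementation. The caller is assumed to supply the ray direction as a unit vector in map coordinates.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>
#include <string.h>

#define GRID_SIZE 32     /* cells per side (hypothetical) */
#define CELL_MM   100.0f /* cell edge length in mm (hypothetical) */

typedef enum { CELL_UNKNOWN = 0, CELL_FREE = 1, CELL_OCCUPIED = 2 } cell_t;

typedef struct {
    uint8_t cells[GRID_SIZE][GRID_SIZE]; /* indexed as cells[y][x] */
} occupancy_grid_t;

/* Reset every cell to CELL_UNKNOWN. */
void grid_clear(occupancy_grid_t *g)
{
    assert(g != NULL);
    memset(g->cells, CELL_UNKNOWN, sizeof g->cells);
}

/* Convert a coordinate in mm to a cell index; false if outside the grid. */
static bool to_cell(float pos_mm, int *idx)
{
    if (pos_mm < 0.0f || pos_mm >= GRID_SIZE * CELL_MM) {
        return false;
    }
    *idx = (int)(pos_mm / CELL_MM);
    return true;
}

/* Integrate one measurement taken from (x0_mm, y0_mm) along the unit
 * vector (dir_x, dir_y). The ray is sampled every half cell; sampled cells
 * become free unless already occupied. If hit is true, the cell at
 * range_mm becomes occupied. Rays that leave the grid are truncated. */
void grid_integrate_ray(occupancy_grid_t *g, float x0_mm, float y0_mm,
                        float dir_x, float dir_y, float range_mm, bool hit)
{
    assert(g != NULL && range_mm >= 0.0f);
    const float step = CELL_MM * 0.5f;
    int cx, cy;
    for (float t = 0.0f; t < range_mm; t += step) {
        if (!to_cell(x0_mm + t * dir_x, &cx) ||
            !to_cell(y0_mm + t * dir_y, &cy)) {
            return; /* the ray left the local map */
        }
        if (g->cells[cy][cx] != CELL_OCCUPIED) {
            g->cells[cy][cx] = CELL_FREE;
        }
    }
    if (hit && to_cell(x0_mm + range_mm * dir_x, &cx) &&
        to_cell(y0_mm + range_mm * dir_y, &cy)) {
        g->cells[cy][cx] = CELL_OCCUPIED;
    }
}
```

With 1 byte per cell, this 32x32 grid occupies 1 KiB. A path planner could then search the grid for a route to the target that avoids occupied cells. Never downgrading an occupied cell to free is a conservative choice made here for simplicity; a probabilistic update would handle sensor noise and moving obstacles better.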

A preliminary version of such an algorithm has already been implemented.

Video 1: https://www.youtube.com/watch?v=cU40pqu24bw

Video 2: https://youtu.be/mZQEHMGTZW8

Figure: The Crazyflie 2.1 featuring our custom deck based on the multi-zone ToF sensor.

Character

- 30% Literature and algorithm development

- 30% FreeRTOS C programming (STM32 Platform)

- 40% In-field evaluation and testing

Prerequisites

- Strong interest in embedded systems

- Experience with data acquisition and analysis

- Experience with low-level C programming

References

- [1] V. Niculescu et al., "Improving Autonomous Nano-drones Performance via Automated End-to-End Optimization and Deployment of DNNs," in IEEE Journal on Emerging and Selected Topics in Circuits and Systems. https://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=9606685

- [2] K. McGuire et al., "A comparative study of bug algorithms for robot navigation," in Robotics and Autonomous Systems. https://www.sciencedirect.com/science/article/pii/S0921889018306687

- [3] STM time-of-flight (ToF) matrix https://www.st.com/en/imaging-and-photonics-solutions/vl53l5cx.html

- [4] Bitcraze Crazyflie 2.1 https://www.bitcraze.io/products/crazyflie-2-1/

- [5] PULP Project http://iis-projects.ee.ethz.ch/index.php/PULP

- [6] Mojtahedzadeh et al., "Robot obstacle avoidance using the Kinect," Master of Science Thesis, Stockholm, Sweden (2011).