Event-Driven Convolutional Neural Network Modular Accelerator

From iis-projects

m (Adimauro moved page Modular Spiking Neural Network Accelerator to Event-Driven Convolutional Neural Network Modular Accelerator)

Revision as of 09:36, 5 August 2020

Introduction

Over the last 10 years, Artificial Neural Networks (ANNs) have revolutionized many scientific fields by solving very difficult practical problems. Convolutional Neural Networks (CNNs) are a very popular approach that has reached state-of-the-art accuracy in many machine learning tasks while keeping a reasonably low memory footprint. For this reason, CNNs are nowadays well served by specialized CPU and GPU architectures, which reach very high energy efficiency per inference and are also deployed on embedded devices for edge computing. In recent years, a new category of efficient sensors has attracted growing interest; ultra-low-power (ULP) event-based cameras and audio sensors belong to this category. Efficiently exploiting the data produced by such sensors may require a paradigm shift in the way data are acquired and processed. Research communities have therefore started to focus on less conventional computing paradigms, such as event-driven computing. Spiking Neural Networks (SNNs) represent a promising approach that appears to move toward a higher proportionality between sensor activity and computing energy, which could bring significant advantages to many edge applications in terms of energy consumption and therefore battery lifetime.
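To make the idea of proportionality between sensor activity and computing effort concrete, the following is a minimal behavioral sketch in Python. It is not taken from the project: the function names, the single-channel layout, and the scatter-style update are assumptions chosen for illustration. It compares the operation count of a dense convolution over a full frame with an event-driven update that only touches the neighborhood of pixels that actually fired.

```python
def dense_conv_ops(height, width, kernel_size):
    """Multiply-accumulates for one dense single-channel convolution over a full frame."""
    return height * width * kernel_size * kernel_size


def event_driven_conv(events, height, width, kernel):
    """Scatter each input event into a grid of accumulators.

    events: iterable of (x, y) coordinates of pixels that fired (hypothetical format).
    kernel: square list of lists with an odd side length.
    Returns (accumulators, number_of_additions_performed).
    """
    radius = len(kernel) // 2
    acc = [[0.0] * width for _ in range(height)]
    ops = 0
    for x, y in events:
        # Only the neighborhood of the active pixel is updated.
        for dy in range(-radius, radius + 1):
            for dx in range(-radius, radius + 1):
                ty, tx = y + dy, x + dx
                if 0 <= ty < height and 0 <= tx < width:
                    acc[ty][tx] += kernel[dy + radius][dx + radius]
                    ops += 1
    return acc, ops


# Two events on a 32x32 sensor with a 3x3 kernel of ones.
kernel = [[1.0] * 3 for _ in range(3)]
acc, sparse_ops = event_driven_conv([(5, 5), (0, 0)], 32, 32, kernel)
dense_ops = dense_conv_ops(32, 32, 3)
```

In this sketch the work grows with the number of events rather than with the frame size, which is the property that makes event-driven processing attractive for sparse sensor output.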

Project description

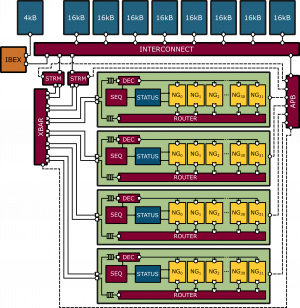

In this project we propose the design of a digital hardware accelerator for Event-Driven Convolutional Neural Networks (ED-CNNs). The accelerator must be configurable in the type of neuron model used and in the number of physical computing engines instantiated. The accelerator operations are orchestrated by a RISC-V core programmed through a JTAG interface. The project ultimately aims to design a fully autonomous SoC capable of collecting data from external sensors (ULP event camera) and processing them on-chip.
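The two configuration knobs mentioned above (neuron model and number of engines) can be illustrated with a small behavioral model in Python. This is only a sketch under stated assumptions: the class and function names are hypothetical, the per-event leak and the modulo mapping of neurons to engines are illustrative choices, and none of it reflects the interface of the existing neural engine.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

# A neuron model maps (potential, input weight) to (new potential, spiked).
NeuronUpdate = Callable[[float, float], Tuple[float, bool]]


def integrate_and_fire(v, weight, threshold=1.0):
    """Non-leaky neuron: accumulate, then spike and reset once the threshold is reached."""
    v += weight
    if v >= threshold:
        return 0.0, True
    return v, False


def make_leaky(leak):
    """Build a leaky variant; the potential is scaled by `leak` before each update (assumed per-event decay)."""
    def leaky_integrate_and_fire(v, weight, threshold=1.0):
        return integrate_and_fire(v * leak, weight, threshold)
    return leaky_integrate_and_fire


@dataclass
class Engine:
    """One computing engine holding the state of the neurons mapped to it."""
    neuron_update: NeuronUpdate
    potentials: Dict[int, float] = field(default_factory=dict)

    def process(self, neuron_id, weight):
        v, spiked = self.neuron_update(self.potentials.get(neuron_id, 0.0), weight)
        self.potentials[neuron_id] = v
        return spiked


class Accelerator:
    """Parametric number of engines sharing one neuron model."""

    def __init__(self, num_engines, neuron_update):
        self.engines = [Engine(neuron_update) for _ in range(num_engines)]

    def dispatch(self, neuron_id, weight):
        # Static mapping: the state of each neuron lives in exactly one engine.
        engine = self.engines[neuron_id % len(self.engines)]
        return engine.process(neuron_id, weight)
```

For example, `Accelerator(4, integrate_and_fire)` and `Accelerator(2, make_leaky(0.5))` describe two configurations of the same structure; in hardware the equivalent choices would presumably be made through design-time parameters.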

The student is required to:

1. learn how the existing neural engine works;

2. build a modular infrastructure capable of instantiating a parametric number of engines;

3. integrate a DMA subsystem for intra-engine and IO event communication;

4. test and assess system-level functionality, and provide post-layout power/performance estimates (with the possibility of targeting a TSMC65 tapeout).

Status: Available

- Supervision: Alfio Di Mauro

Professor

Literature

[1] M. Davies et al., "Loihi: A Neuromorphic Manycore Processor with On-Chip Learning," in IEEE Micro, vol. 38, no. 1, pp. 82-99, January/February 2018. doi: 10.1109/MM.2018.112130359

Required Skills

To work on this project, you will need:

- to have prior knowledge of digital circuit design (VLSI1)

Other skills that you might find useful include:

- familiarity with a scripting language

- to be strongly motivated for a super-cool project

Meetings & Presentations

The students and advisor(s) agree on weekly meetings to discuss all relevant decisions and decide on how to proceed. Of course, additional meetings can be organized to address urgent issues.

Around the middle of the project there is a design review, where senior members of the lab review your work (bring all the relevant information, such as preliminary specifications, block diagrams, synthesis reports, testing strategy, ...) to make sure everything is on track and to decide whether further support is necessary. They also make the final decision on whether the chip is actually manufactured (no reason to worry if the project is on track) and whether more chip area, a different package, ... is provided. For more details, see [1].

At the end of the project, you have to present/defend your work during a 15 min. presentation and 5 min. of discussion as part of the IIS colloquium.