FPGA System Design for Computer Vision with Convolutional Neural Networks



Description

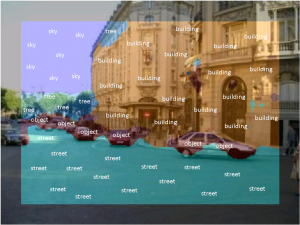

Imaging sensor networks, UAVs, smartphones, and other embedded computer vision systems require power-efficient, low-cost and high-speed implementations of synthetic vision systems capable of recognizing and classifying objects in a scene. Many popular algorithms in this area require evaluating multiple layers of filter banks. Almost all state-of-the-art synthetic vision systems are based on features extracted using multi-layer convolutional networks (ConvNets), which nowadays even outperform humans on object classification tasks [1,2].
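To make "multiple layers of filter banks" concrete, here is a minimal Python sketch of a single convolutional layer, written as a plain software reference model. It is an illustration under simplifying assumptions, not Origami's implementation: floating-point arithmetic, stride 1, no padding ("valid" borders), and the hypothetical function name `conv_layer`. Like most ConvNet frameworks, it computes a cross-correlation (the kernel is not flipped). Each output channel accumulates, over all input channels, a k×k input window multiplied by its kernel, plus a bias. One layer therefore costs n_out · n_in · k² · (H−k+1) · (W−k+1) multiply-accumulates, and this deep loop nest is why the convolution step dominates the compute time.

```python
from typing import List

Image = List[List[float]]  # one channel, indexed [row][column]


def conv_layer(inputs: List[Image], weights: List[List[Image]], bias: List[float]) -> List[Image]:
    """Apply a filter bank to a multi-channel input ('valid' borders, stride 1).

    inputs:  n_in channels, each H x W
    weights: n_out x n_in kernels, each k x k
    bias:    n_out values, one per output channel
    Returns n_out channels, each (H-k+1) x (W-k+1).
    """
    n_in = len(inputs)
    h, w = len(inputs[0]), len(inputs[0][0])
    k = len(weights[0][0])
    out_h, out_w = h - k + 1, w - k + 1

    outputs = []
    for o, kernels in enumerate(weights):
        assert len(kernels) == n_in, "each output channel needs one kernel per input channel"
        # Start every output pixel at the channel's bias.
        channel = [[bias[o]] * out_w for _ in range(out_h)]
        for i in range(n_in):
            img, ker = inputs[i], kernels[i]
            for y in range(out_h):
                for x in range(out_w):
                    # k x k multiply-accumulate over the window at (y, x).
                    acc = 0.0
                    for dy in range(k):
                        for dx in range(k):
                            acc += img[y + dy][x + dx] * ker[dy][dx]
                    channel[y][x] += acc
        outputs.append(channel)
    return outputs
```

For example, `conv_layer([[[1, 2, 3], [4, 5, 6], [7, 8, 9]]], [[[[1, 0], [0, 1]]]], [0.0])` returns `[[[6.0, 8.0], [12.0, 14.0]]]`.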

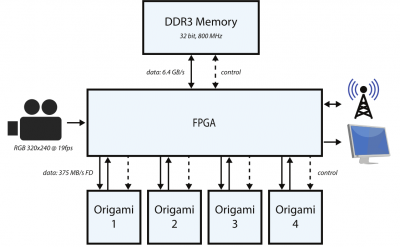

While we have successfully explored accelerating the convolution step, which takes up 80% to 90% of the overall compute time, with a small accelerator ASIC ("Origami") [3], we would now like to build a more complete system on an FPGA and further improve the accelerator. This is where you come in. We would like to explore several aspects: porting the Origami accelerator to run efficiently on the FPGA; hardware/software co-design to configure the memory and DMA controllers; building small IP cores (activation, pooling) to complete the processing pipeline; and completing the system by connecting a camera or loading a video stream and displaying the results.
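Before writing the activation and pooling IP cores, their behavior can be pinned down in a small software golden model. The sketch below assumes ReLU as the activation function and non-overlapping 2×2 max pooling. Both are common choices, but this description does not specify them. The function names are hypothetical, and plain Python integers stand in for fixed-point samples.

```python
from typing import List

Channel = List[List[int]]  # one feature map of fixed-point samples, [row][column]


def relu(ch: Channel) -> Channel:
    """Activation stage: clamp negative samples to zero."""
    return [[max(v, 0) for v in row] for row in ch]


def max_pool(ch: Channel, size: int = 2) -> Channel:
    """Pooling stage: non-overlapping size x size max pooling (stride = size).

    Trailing rows/columns that do not fill a complete window are dropped.
    """
    out_h, out_w = len(ch) // size, len(ch[0]) // size
    return [
        [
            max(ch[y * size + dy][x * size + dx] for dy in range(size) for dx in range(size))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]


def post_process(channels: List[Channel]) -> List[Channel]:
    """Convolution output -> activation -> pooling, one channel at a time."""
    return [max_pool(relu(ch)) for ch in channels]
```

For example, the 4×4 map `[[-3, 5, 2, -1], [4, -2, -7, 0], [1, 1, -4, 8], [-6, 2, 3, 3]]` becomes `[[5, 2], [2, 8]]`. Because ReLU is monotonically non-decreasing, it commutes with max pooling. A hardware pipeline could therefore pool first and then apply the activation to four times fewer values with an identical result.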

Status: Completed

- Looking for 1 or 2 Master's or 1 to 3 semester project students (workload will be adjusted)

- Contact/Supervision: Lukas Cavigelli

Prerequisites

- VLSI 1 lecture (or otherwise basic knowledge of VLSI design)

- Motivation for FPGA design and computer vision.

Character

- 80%-90% FPGA design

- 10%-20% Theory

Professor

References

- [1] ImageNet Large Scale Visual Recognition Challenge 2015.

- [2] K. He, X. Zhang, S. Ren, J. Sun, "Delving Deep into Rectifiers: Surpassing Human-Level Performance on ImageNet Classification," arXiv:1502.01852, 2015.

- [3] L. Cavigelli, D. Gschwend, Ch. Mayer, S. Willi, B. Muheim, L. Benini, "Origami: A Convolutional Network Accelerator," in Proceedings of the 25th Edition on Great Lakes Symposium on VLSI, 2015.