Feature Extraction for Speech Recognition (1S)

From iis-projects

Latest revision as of 11:00, 14 November 2022

Overview

Status: Completed

- Type: Semester Thesis

- Professor: Prof. Dr. L. Benini

- Supervisors:

- Cristian Cioflan (IIS): cioflanc@iis.ee.ethz.ch

- Dr. Lukas Cavigelli (Huawei Technologies): lukas.cavigelli@huawei.com

Short Description

The objective of keyword spotting (KWS) is to detect a set of predefined keywords within a stream of user utterances. In most KWS pipelines, as in any other audio-based task, the acoustic and/or linguistic model is preceded by a feature extraction stage. Several approaches have been proposed for this step, the most notable being the Mel Frequency Cepstral Coefficients (MFCC). As this technique is only one of the possible approaches to feature extraction, and since it has been shown that the performance of the system can be strongly influenced by this choice, the aim of this project is to perform an in-depth evaluation of feature extraction methods for KWS.

Introduction

Feature extraction is the process of deriving essential, non-redundant information from a set of measured data. By selecting and/or combining input variables into features, the dimensionality of the data can be greatly reduced, which in turn reduces the computational effort within the processing pipeline. Nonetheless, such techniques should not compromise the integrity of the data; the extracted features should still accurately describe the data set. Although the two terms are often used interchangeably, we differentiate between feature (pre)processing and feature extraction, defining the former as the techniques applied to alter the data in order to emphasize or remove certain characteristics. To illustrate the concept of feature extraction, we use the Mel-Frequency Cepstral Coefficients (MFCC) as an example.

Extracted from the audio signal received as input by the system, the MFCCs are one of the current standards in KWS [2] [6]. They are a cepstral representation of the signal but, unlike the plain cepstrum, the MFCCs use frequency bands that are equally spaced on the Mel scale. The resulting representation more closely approximates the response of the human auditory system. The derivation techniques are described in detail in [5]; we enumerate the main steps of PyTorch’s MFCC computation (https://pytorch.org/audio/stable/_modules/torchaudio/transforms.html#MFCC):

- Windowing - applying a window function (e.g., Hamming window) to each frame, mainly to counteract the infinite-data assumption made during the FFT computation;

- Fast Fourier Transform (FFT) - calculating the frequency spectrum;

- Triangular Filters - applying triangular filters on a Mel-scale to extract frequency bands;

- Logarithm - transforming the MelSpectrogram into LogMelSpectrogram to obtain additive behaviour;

- Discrete Cosine Transform (DCT) - calculating the Cepstral coefficients from the LogMelSpectrogram.
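The five steps above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a reproduction of PyTorch's implementation: it uses only the standard library, replaces the FFT with a naive DFT, assumes the common 2595 * log10(1 + f / 700) form of the Mel scale, and uses an unnormalised DCT-II. All function names and the default parameters (frame length, hop, number of filters and coefficients) are hypothetical and chosen only to keep the example small.

```python
import cmath
import math


def hz_to_mel(f):
    """Assumed Mel formula (one common variant; others exist)."""
    return 2595.0 * math.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def frame_signal(signal, frame_len, hop):
    """Split the signal into overlapping frames; an incomplete tail is dropped."""
    return [signal[i:i + frame_len]
            for i in range(0, len(signal) - frame_len + 1, hop)]


def hamming(n):
    return [0.54 - 0.46 * math.cos(2.0 * math.pi * i / (n - 1)) for i in range(n)]


def power_spectrum(frame):
    """Naive O(n^2) DFT standing in for the FFT; returns n // 2 + 1 power bins."""
    n = len(frame)
    bins = []
    for k in range(n // 2 + 1):
        s = sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(frame))
        bins.append(abs(s) ** 2)
    return bins


def mel_filterbank(n_mels, n_fft, sample_rate):
    """Triangular filters whose edges are equally spaced on the Mel axis."""
    top = hz_to_mel(sample_rate / 2.0)
    edges = [mel_to_hz(top * i / (n_mels + 1)) for i in range(n_mels + 2)]
    bin_hz = [k * sample_rate / n_fft for k in range(n_fft // 2 + 1)]
    bank = []
    for m in range(1, n_mels + 1):
        lo, mid, hi = edges[m - 1], edges[m], edges[m + 1]
        bank.append([max(0.0, min((f - lo) / (mid - lo), (hi - f) / (hi - mid)))
                     for f in bin_hz])
    return bank


def dct2(values, n_out):
    """Unnormalised DCT-II, keeping the first n_out coefficients."""
    n = len(values)
    return [sum(v * math.cos(math.pi * k * (2 * i + 1) / (2 * n))
                for i, v in enumerate(values))
            for k in range(n_out)]


def mfcc(signal, sample_rate, frame_len=64, hop=32, n_mels=10, n_mfcc=5):
    window = hamming(frame_len)
    bank = mel_filterbank(n_mels, frame_len, sample_rate)
    features = []
    for frame in frame_signal(signal, frame_len, hop):
        windowed = [x * w for x, w in zip(frame, window)]                # windowing
        power = power_spectrum(windowed)                                 # spectrum
        mel = [sum(w * p for w, p in zip(row, power)) for row in bank]   # Mel filters
        log_mel = [math.log(e + 1e-10) for e in mel]                     # logarithm
        features.append(dct2(log_mel, n_mfcc))                           # DCT
    return features


# Toy input: 256 samples of a 440 Hz tone sampled at 8 kHz.
sample_rate = 8000
tone = [math.sin(2.0 * math.pi * 440.0 * t / sample_rate) for t in range(256)]
features = mfcc(tone, sample_rate)
print(len(features), len(features[0]))  # 7 frames, 5 coefficients each
```

The intermediate lists `mel` and `log_mel` correspond to one MelSpectrogram and one LogMelSpectrogram frame, so the same sketch can be cut short after either step to obtain those representations instead of the cepstral coefficients.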

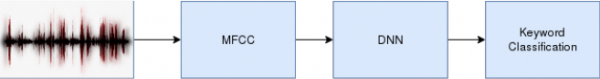

Feature extraction is often used in Machine Learning settings, and especially in the context of Deep Neural Networks (DNN), because reducing the feature dimensionality reduces both the computational load and the storage requirements of the DNN. By pre-determining the relevant features from the input data using human expertise, the model is relieved of the tedious task of identifying and filtering redundant information. A deep learning pipeline for KWS integrating MFCC is presented in Figure 1.
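To make the dimensionality argument concrete, the following back-of-the-envelope sketch compares the number of raw samples in an utterance with the number of values the DNN sees after feature extraction. Every number here is an assumption chosen for illustration (a one-second utterance at 16 kHz, 25 ms windows with a 10 ms hop, no padding, and either 40 or 13 coefficients per frame); none of them is prescribed by the project.

```python
def num_frames(num_samples, win_length, hop_length):
    """Number of complete frames when the signal is not padded."""
    if num_samples < win_length:
        return 0
    return 1 + (num_samples - win_length) // hop_length


# Assumed settings, for illustration only.
sample_rate = 16_000           # Hz
num_samples = sample_rate * 1  # one-second utterance
win_length = 400               # 25 ms at 16 kHz
hop_length = 160               # 10 ms at 16 kHz

frames = num_frames(num_samples, win_length, hop_length)
print(frames)  # 98

for n_coeffs in (40, 13):
    feature_values = frames * n_coeffs
    reduction = num_samples / feature_values
    print(n_coeffs, feature_values, round(reduction, 1))
# 40 coefficients: 3920 values, about 4.1x fewer than the 16000 raw samples
# 13 coefficients: 1274 values, about 12.6x fewer
```

The reduction factor depends directly on the hop length and on the number of coefficients kept, which is one reason these parameters are natural candidates for tuning.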

In recent years, it has been shown that state-of-the-art results can be achieved not only with MFCCs, but also with the output of an intermediate step, such as the MelSpectrogram [9] or the LogMelSpectrogram [4]. Moreover, surveys on the topic [1] [7] [3] [8] present alternative strategies for feature extraction in audio-based ML tasks. Nevertheless, to the best of our knowledge, no existing work presents a meta-analysis of the different extraction techniques and their impact in the scope of KWS. As Choi et al. [3] have shown, the choice of the feature extraction mechanism has a significant impact in the context of music tagging, so it is natural to expect a similar conclusion from a comparative evaluation for a keyword spotting system.

Character

- 20% literature research

- 40% feature extraction implementation

- 40% evaluation

Prerequisites

- Must be familiar with Python.

- Knowledge of deep learning basics, including experience with a deep learning framework such as PyTorch or TensorFlow, acquired through a course, a project, or self-study with tutorials.

Project Goals

The main tasks of this project are:

Task 1: Familiarize yourself with the project specifics (1-2 Weeks)

Read up on feature extraction methods mentioned in the reference materials. Learn about DNN training and PyTorch, how to visualize results with TensorBoard. Read up on DNN models aimed at time series (e.g. TCNs, RNNs, transformer networks) and the recent advances in KWS.

Task 2: Implement and evaluate the baseline (2-3 Weeks)

Select a dataset and analyse the models that can serve as baselines for our work. In particular, check for publicly available code. The supervisors will provide you with the MFCC implementation that will serve as the starting point for this analysis.

If no code is available: design, implement, and train KWS models, considering the state-of-the-art architectures for time series.

Compare the model against the selected baseline and figures in the paper.

Task 3: Implement feature extraction techniques (4-5 Weeks)

Using the referenced work, implement the feature extraction methods against which MFCC will be compared.

Optimize said methods with respect to computational effort and storage requirements.

(Optional) Perform parameter tuning for the implemented techniques.

Task 4: Evaluate feature extraction techniques (4-5 Weeks)

Evaluate and analyse the accuracy of the KWS pipeline with respect to the implemented feature extraction setting.

Evaluate and analyse the accuracy of the aforementioned methods under similar operating regimes.

Evaluate and analyse the compatibility between the processing techniques and the DNN architecture.
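A comparison of this kind could be organised around a small harness that scores each feature extraction front end on the same labelled test set. The sketch below is only an assumption about how such an evaluation might be structured: the function names are hypothetical, and the labels and predictions are placeholder values made up to exercise the code, not experimental results.

```python
def accuracy(predictions, labels):
    """Fraction of utterances whose predicted keyword matches the label."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("cannot compute accuracy on an empty set")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def rank_front_ends(predictions_by_front_end, labels):
    """Return (front_end, accuracy) pairs sorted from best to worst."""
    scores = {name: accuracy(preds, labels)
              for name, preds in predictions_by_front_end.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Placeholder data, invented purely to demonstrate the harness.
labels = ["yes", "no", "up", "down", "yes", "no"]
toy_predictions = {
    "front_end_a": ["yes", "no", "up", "up", "yes", "no"],    # 5 of 6 correct
    "front_end_b": ["yes", "no", "up", "down", "no", "up"],   # 4 of 6 correct
}

for name, score in rank_front_ends(toy_predictions, labels):
    print(f"{name}: {score:.3f}")
```

Keeping the test set, the DNN architecture, and the training budget fixed while only the front end changes is what makes the resulting accuracies comparable.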

Task 5: Gather and present final results (2-3 Weeks)

Gather final results.

Prepare presentation (10 min. + 5 min. discussion).

Write a final report. Include all major decisions taken during the design process and justify your choices. Include everything that deviates from the standard case: show off everything that took time to figure out and all the ideas that have influenced the project.

Project Organization

Weekly Meetings

The student shall meet with the advisor(s) every week to discuss any issues or problems that arose during the previous week and to propose next steps. These meetings are meant to provide a guaranteed time slot for the mutual exchange of information on how to proceed, to clear up any questions from either side, and to ensure the student’s progress.

Report

Documentation is an important and often overlooked aspect of engineering. One final report has to be completed within this project. Any word processing software may be used to write the report; nevertheless, the use of LaTeX with Tgif (see http://bourbon.usc.edu:8001/tgif/index.html and http://www.dz.ee.ethz.ch/en/information/how-to/drawing-schematics.html) or any other vector drawing software (for block diagrams) is strongly encouraged by the IIS staff.

Final Report

A digital copy of the report, the presentation, the developed software, build script/project files, drawings/illustrations, acquired data, etc. needs to be handed in at the end of the project. Note that this task description is part of your report and has to be attached to your final report.

Presentation

At the end of the project, the outcome of the thesis will be presented in a 15-minute talk followed by 5 minutes of discussion in front of interested members of the Integrated Systems Laboratory. The presentation is open to the public, so you are welcome to invite interested friends. The exact date will be determined towards the end of the work.

References

[1] Sabur Ajibola Alim and Nahrul Khair Alang Rashid. Some commonly used speech feature extraction algorithms. 2018.

[2] Axel Berg, Mark O’Connor, and Miguel Tairum Cruz. Keyword transformer: A self-attention model for keyword spotting. 2021.

[3] Keunwoo Choi, György Fazekas, Kyunghyun Cho, and Mark Sandler. A comparison of audio signal preprocessing methods for deep neural networks on music tagging. 2021.

[4] Byeonggeun Kim, Simyung Chang, Jinkyu Lee, and Dooyong Sung. Broadcasted residual learning for efficient keyword spotting. 2021.

[5] James Lyon. Mel Frequency Cepstral Coefficient (MFCC) tutorial.

[6] S. Majumdar and B. Ginsburg. MatchboxNet: 1D Time-Channel Separable Convolutional Neural Network Architecture for Speech Commands Recognition. In Proc. Interspeech 2020, 2020, pp. 3356–3360.

[7] Garima Sharma, Kartikeyan Umapathy, and Sridhar Krishnan. Trends in audio signal feature extraction methods. 2020.

[8] Urmila Shrawankar and V M Thakare. Techniques for feature extraction in speech recognition system: A comparative study. 2013.

[9] Roman Vygon and Nikolay Mikhaylovskiy. Learning efficient representations for keyword spotting with triplet loss. 2021.