Flexfloat DL Training Framework

Latest revision as of 10:13, 2 November 2022

Project Overview

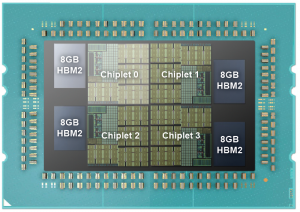

The Snitch ecosystem [1] targets energy-efficient high-performance systems such as the Manticore concept [2], which comprises 4096 Snitch cores. We plan to tape out Occamy, a slightly smaller two-chiplet version of Manticore, in the near future. Snitch-based architectures are built around the minimal RISC-V Snitch integer core, which is only about 15 thousand gates in size and is tightly coupled to accelerators such as an FPU or a DMA engine.

Recently, industry and academia have started exploring the computational precision required for training. Many state-of-the-art training hardware platforms now support not only 64-bit and 32-bit floating-point formats but also 16-bit floating-point formats (IEEE binary16 and brainfloat). Recent work proposes even narrower training formats such as 8-bit floats [3,4,5].
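To make the trade-off between these formats concrete, the following minimal sketch computes the dynamic range and precision implied by a given number of exponent and mantissa bits. It assumes IEEE-754-like conventions (bias of 2^(E-1)-1, top exponent code reserved for infinities and NaNs). The two 8-bit layouts (1-5-2 and 1-4-3) are illustrative assumptions only; this page does not specify which layouts the Occamy FPU implements. The `FpFormat` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FpFormat:
    """Hypothetical descriptor of an IEEE-754-like floating-point format."""
    name: str
    exp_bits: int  # number of exponent bits
    man_bits: int  # number of explicit mantissa (fraction) bits

    @property
    def bias(self) -> int:
        return 2 ** (self.exp_bits - 1) - 1

    @property
    def max_normal(self) -> float:
        # All-ones mantissa with the largest non-reserved exponent.
        return (2.0 - 2.0 ** -self.man_bits) * 2.0 ** self.bias

    @property
    def min_normal(self) -> float:
        # Smallest positive normal number.
        return 2.0 ** (1 - self.bias)

    @property
    def epsilon(self) -> float:
        # Spacing between 1.0 and the next representable value.
        return 2.0 ** -self.man_bits


FORMATS = [
    FpFormat("binary32", 8, 23),
    FpFormat("binary16", 5, 10),
    FpFormat("bfloat16", 8, 7),
    FpFormat("fp8 1-5-2 (assumed)", 5, 2),
    FpFormat("fp8 1-4-3 (assumed)", 4, 3),
]

for fmt in FORMATS:
    print(f"{fmt.name:22s} max={fmt.max_normal:.4g} "
          f"min_normal={fmt.min_normal:.4g} eps={fmt.epsilon:.4g}")
```

Under these assumptions, formats with more exponent bits trade precision for range, which is the core consideration when choosing between the two 16-bit or the two 8-bit variants.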

Most available DL frameworks allow training networks with 64-bit, 32-bit, or 16-bit FP formats. However, the FPU of our Occamy project supports two different 16-bit FP formats and two different 8-bit FP formats. Therefore, we would like to extend an available DL training framework (e.g., PyTorch) with a library (e.g., flexfloat [6]) capable of emulating various FP formats. Depending on your skills and the project type (SA or MA), this work can be extended by training various networks with various FP formats.
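As a rough illustration of what emulating a reduced-precision format means, the sketch below rounds a Python float to the nearest value representable with a given number of exponent and mantissa bits. It is not the flexfloat API and not the project's implementation; the function name `quantize` is hypothetical, and it assumes IEEE-754-like behavior (round-to-nearest-even, subnormals, overflow to infinity). In a training framework, the same rounding would be applied elementwise to tensors after each emulated operation.

```python
import math


def quantize(x: float, exp_bits: int, man_bits: int) -> float:
    """Round x to the nearest value of an IEEE-like format (hypothetical helper)."""
    if x == 0.0 or math.isnan(x) or math.isinf(x):
        return x
    bias = 2 ** (exp_bits - 1) - 1
    e_min, e_max = 1 - bias, bias
    # abs(x) = m * 2**e with 0.5 <= m < 1, so the leading bit has exponent e - 1.
    _, e = math.frexp(abs(x))
    # Clamp the exponent so that small values fall onto the subnormal grid.
    exp = max(e - 1, e_min)
    # Spacing between neighboring representable values at this exponent.
    step = 2.0 ** (exp - man_bits)
    # Python's round() on a float rounds halfway cases to the even integer.
    q = round(abs(x) / step) * step
    # Values beyond the largest finite number overflow to infinity.
    if q > (2.0 - 2.0 ** -man_bits) * 2.0 ** e_max:
        q = math.inf
    return math.copysign(q, x)


# Example: the same value in binary16, bfloat16, and an assumed 1-4-3 fp8 layout.
for name, e_bits, m_bits in [("binary16", 5, 10), ("bfloat16", 8, 7), ("fp8 1-4-3", 4, 3)]:
    print(name, quantize(0.1, e_bits, m_bits))
```

Because the result is stored back in a wider host type, this approach only emulates the rounding behavior of the narrow format, not its storage or performance.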

Literature

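- [1] Snitch GitHub: https://github.com/pulp-platform/snitch

- [2] Manticore: https://ieeexplore.ieee.org/abstract/document/9296802

- [3] Hybrid 8-bit Floating Point (HFP8) Training and Inference for Deep Neural Networks: https://openreview.net/forum?id=HkxIKNSeIH

- [4] Training deep neural networks with 8-bit floating point numbers: https://proceedings.neurips.cc/paper/2018/file/335d3d1cd7ef05ec77714a215134914c-Paper.pdf

- [5] Mixed precision training with 8-bit floating point: https://arxiv.org/abs/1905.12334

- [6] Flexfloat GitHub: https://github.com/oprecomp/flexfloat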

Status: Completed

- Looking for 1 Semester or 1 Master student

- Contact: Gianna Paulin, Tim Fischer

Prerequisites

- Deep Learning

- Python

- C

Character

- 25% Theory

- 75% Implementation

Professor

- Luca Benini: http://www.iis.ee.ethz.ch/people/person-detail.html?persid=194234

Project Organization

Weekly Meetings

The student shall meet with the advisor(s) every week to discuss any issues that arose during the previous week and to agree on next steps. These meetings provide a guaranteed time slot for exchanging information on how to proceed, clearing up questions from either side, and ensuring the student’s progress.

Report / Presentation

Documentation is an important and often overlooked aspect of engineering. One final report has to be completed within this project. Any word processing software may be used to write the report; however, the IIS staff strongly encourage the use of LaTeX with Tgif, drawio, or any other vector drawing software (for block diagrams).

Final Report

A digital copy of the report, the presentation, the developed software, build scripts/project files, drawings/illustrations, acquired data, etc. must be handed in at the end of the project. Note that this task description is part of your report and has to be attached to your final report.

Presentation

At the end of the project, the outcome of the thesis will be presented in a 15-minute (SA) or 20-minute (MA) talk, followed by 5 minutes of discussion, in front of interested people of the Integrated Systems Laboratory. The presentation is open to the public, so you are welcome to invite interested friends. The exact date will be determined towards the end of the work.