High-speed Scene Labeling on FPGA

Short Description

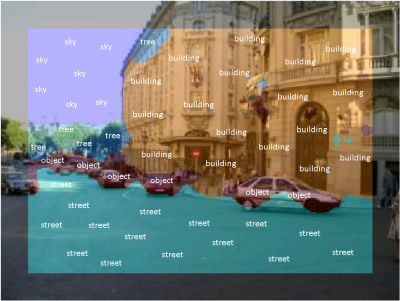

Imaging sensor networks, UAVs, smartphones, driver assistance appliances, and other embedded computer vision systems require power-efficient, low-cost and high-speed implementations of synthetic vision systems capable of recognizing and classifying objects in a scene. Many popular algorithms in this area require the evaluation of multiple layers of filter banks. Almost all state-of-the-art synthetic vision systems are based on features extracted using multi-layer convolutional networks (ConvNets).
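To make "evaluating a layer of filter banks" concrete, the following is a minimal pure-Python sketch of one such layer: every output feature map is the sum of 2D filters applied to all input maps, plus a bias, followed by a ReLU non-linearity. The function name `conv_layer`, the list-of-lists data layout, the "valid" border handling and the choice of ReLU are assumptions made for illustration; they are not taken from the project itself.

```python
def conv_layer(inputs, kernels, biases):
    """Evaluate one filter-bank layer (illustrative sketch).

    inputs:  list of C_in feature maps, each an H x W list of lists
    kernels: kernels[o][i] is the K x K filter from input map i to output map o
    biases:  one bias value per output map
    returns: list of C_out maps of size (H-K+1) x (W-K+1), after ReLU
    """
    height, width = len(inputs[0]), len(inputs[0][0])
    k = len(kernels[0][0])
    out_h, out_w = height - k + 1, width - k + 1

    outputs = []
    for o, bank in enumerate(kernels):
        # Start every output pixel at the bias of this output map.
        fmap = [[biases[o]] * out_w for _ in range(out_h)]
        # Accumulate the contribution of each input map.
        for i, kernel in enumerate(bank):
            for y in range(out_h):
                for x in range(out_w):
                    acc = 0.0
                    for dy in range(k):
                        for dx in range(k):
                            acc += kernel[dy][dx] * inputs[i][y + dy][x + dx]
                    fmap[y][x] += acc
        # Apply the ReLU non-linearity (an assumed activation function).
        outputs.append([[max(0.0, v) for v in row] for row in fmap])
    return outputs
```

The six nested loops are the reason such layers dominate the run time: the number of multiply-accumulate operations grows with the product of output maps, input maps, output pixels and filter size.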

To be power efficient and achieve a high throughput at the same time, we would like to create an FPGA implementation of an entire scene labeling network. To keep the developed system flexible in terms of the convolutional neural network that is applied as well as the types of layers in the ConvNet, interaction between a flow-controlling processor (e.g. an ARM core on a Xilinx Zynq) and the programmable logic is foreseen. If time permits, or based on the preference of the student, some focus can also be given to interfacing directly to a camera and a display or an Ethernet adapter. As opposed to an ASIC project, such FPGA and hardware-software codesign work is much more applicable in industry and less constrained in terms of memory and interfaces. If desired by the student, the use of high-level synthesis tools can also be considered.

Status: Completed

- Kevin Luchsinger

- Supervision: Lukas Cavigelli, Francesco Conti

- Date: FS 2016

Prerequisites

- Interest in VLSI and system design, and computer vision

- VLSI 1

Character

- 20% Theory / Literature Research

- 80% VLSI Architecture, Implementation & Verification

Professor

Detailed Task Description

Goals

The goals of this project are

- for the student(s) to get to know the FPGA design flow from specification through architecture exploration to implementation, including the use of memory interfaces and other off-chip communication

- to learn how to gradually port software blocks to programmable logic and design an entire heterogeneous system using software, FPGA fabric and hardwired interfaces.

Meetings & Presentations

The students and advisor(s) agree on weekly meetings to discuss all relevant decisions and decide on how to proceed. Of course, additional meetings can be organized to address urgent issues. At the end of the project, you have to present/defend your work during a 15 min. presentation and 5 min. of discussion as part of the IIS colloquium.

Timeline

To give an idea of how the time can be split up, we suggest one possible partitioning:

- Literature survey, building a basic understanding of the problem at hand, catching up on related work

- Development of a working software-based implementation running on the Zynq's ARM core

- Piece-by-piece off-loading of relevant tasks to the programmable logic

- Implementation of data interfaces (software or hardware)

- Report and presentation
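The software-first, then piece-by-piece off-loading approach in the timeline above can be pictured with the following sketch. Each layer type starts with a software reference implementation, and an accelerated version only replaces it once it reproduces the reference on a set of test vectors. All names here (`LayerDispatcher`, `relu_software`, `relu_offloaded`) are hypothetical, and the "offloaded" function is only a software stand-in for a call into the programmable logic.

```python
def relu_software(values):
    """Reference implementation, as it would run on the processor."""
    return [max(0.0, v) for v in values]


def relu_offloaded(values):
    """Stand-in for an accelerated block (hypothetical; still plain software)."""
    return [v if v > 0.0 else 0.0 for v in values]


class LayerDispatcher:
    """Routes each layer type to its currently active implementation."""

    def __init__(self):
        self._reference = {}  # software golden models
        self._active = {}     # implementation currently in use

    def register(self, name, software_impl):
        # Every layer starts out running in software.
        self._reference[name] = software_impl
        self._active[name] = software_impl

    def offload(self, name, accelerated_impl, test_vectors):
        # Switch over only if the new block matches the golden model.
        reference = self._reference[name]
        for vector in test_vectors:
            if accelerated_impl(vector) != reference(vector):
                return False
        self._active[name] = accelerated_impl
        return True

    def run(self, name, values):
        return self._active[name](values)
```

A possible use is `dispatcher.offload("relu", relu_offloaded, [[-1.0, 0.0, 3.5]])`, which returns `True` and switches the active implementation. The exact-equality check is a simplification: a fixed-point hardware block would more realistically be compared against the reference within a tolerance.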

Literature

- Hardware Acceleration of Convolutional Networks:

- C. Farabet, B. Martini, B. Corda, P. Akselrod, E. Culurciello and Y. LeCun, "NeuFlow: A Runtime Reconfigurable Dataflow Processor for Vision", Proc. IEEE ECV'11@CVPR'11 [1]

- V. Gokhale, J. Jin, A. Dundar, B. Martini and E. Culurciello, "A 240 G-ops/s Mobile Coprocessor for Deep Neural Networks", Proc. IEEE CVPRW'14 [2]

- [3]

- two not-yet-published papers by our group on acceleration of ConvNets