Improving Scene Labeling with Hyperspectral Data

Description

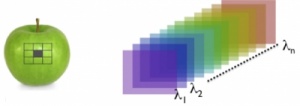

Hyperspectral imaging differs from conventional RGB imaging in that it captures the amount of light not in three spectral bins (red, green, blue) but in many more (e.g. 16 or 25), and not necessarily within the visible range of the spectrum. The camera can thus capture more information than the human eye can perceive, which opens up interesting opportunities to outperform even the best-trained humans; it can be seen as a step towards spectroscopic analysis of materials.

Recently, a novel hyperspectral imaging sensor has been presented [video, pdf] and adopted in the first industrial computer vision cameras [pdf, link]. These new cameras weigh only 31 grams without the lens, whereas older cameras relied on complex optics with beam splitters, could not provide a large number of channels, and were very heavy, extremely expensive and not mobile [link, link].

We have acquired such a camera and would like to explore its use for image understanding/scene labeling/semantic segmentation (see labeled image). Your task would be to evaluate this camera and integrate it into a working scene labeling system [paper]. The work is very diverse:

- create a software interface to read the imaging data from the camera

- collect some images to build a dataset for evaluation (fused together with data from a high-res RGB camera)

- adapt the convolutional network we use for scene labeling to profit from the new data (don't worry, we will help you :) )

- create a system from the individual parts (build a case/box mounting the cameras, dev board, WiFi module, ...) and do some programming to make it all work together smoothly and efficiently

- cross your fingers, hoping that we will outperform all the existing approaches to scene labeling in urban areas
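To make the data fusion step more concrete, here is a minimal sketch of how a low-resolution hyperspectral cube could be combined with a high-resolution RGB image into one multi-channel input for a convolutional network. It is only an illustration under strong assumptions: both images are taken to be already geometrically aligned, the hyperspectral cube is upsampled with simple nearest-neighbour lookup, and the names `upsample_nearest` and `fuse` as well as the toy image sizes are hypothetical and not part of the project's actual code.

```python
from typing import List

# image[row][col][channel], values are arbitrary intensities
Image = List[List[List[float]]]


def upsample_nearest(cube: Image, out_h: int, out_w: int) -> Image:
    """Resize a cube to out_h x out_w by nearest-neighbour lookup."""
    in_h, in_w = len(cube), len(cube[0])
    return [
        [list(cube[r * in_h // out_h][c * in_w // out_w]) for c in range(out_w)]
        for r in range(out_h)
    ]


def fuse(rgb: Image, hyperspectral: Image) -> Image:
    """Append the upsampled hyperspectral bands to the RGB channels per pixel.

    Assumes both images show the same field of view (already registered).
    """
    h, w = len(rgb), len(rgb[0])
    hs_up = upsample_nearest(hyperspectral, h, w)
    return [[rgb[r][c] + hs_up[r][c] for c in range(w)] for r in range(h)]


# Toy data: a 4x4 RGB image and a 2x2 hyperspectral cube with 16 bands.
rgb = [[[0.1, 0.2, 0.3] for _ in range(4)] for _ in range(4)]
hs = [[[float(r * 2 + c)] * 16 for c in range(2)] for r in range(2)]

fused = fuse(rgb, hs)
print(len(fused), len(fused[0]), len(fused[0][0]))  # 4 4 19
```

The fused image has 3 + 16 = 19 channels per pixel, so the first layer of a network consuming it would need 19 input channels instead of 3. In a real setup, the alignment between the two cameras would have to be calibrated first, and a smoother interpolation would likely be preferable.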

Status: Completed

- Dominic Bernath

- Supervision: Lukas Cavigelli

- Date: Autumn Semester 2015

Prerequisites

- Knowledge of C/C++

- Interest in computer vision and system engineering

Character

- 10% Literature Research

- 40% Programming

- 20% Collecting Data

- 30% System Integration

Professor

Detailed Task Description

Meetings & Presentations

The students and advisor(s) agree on weekly meetings to discuss all relevant decisions and decide how to proceed. Additional meetings can be organized to address urgent issues. At the end of the project, you have to present and defend your work in a 15 min. or 25 min. presentation followed by 5 min. of discussion as part of the IIS colloquium (as required for any semester or master thesis at D-ITET).