NVDLA meets PULP

Introduction

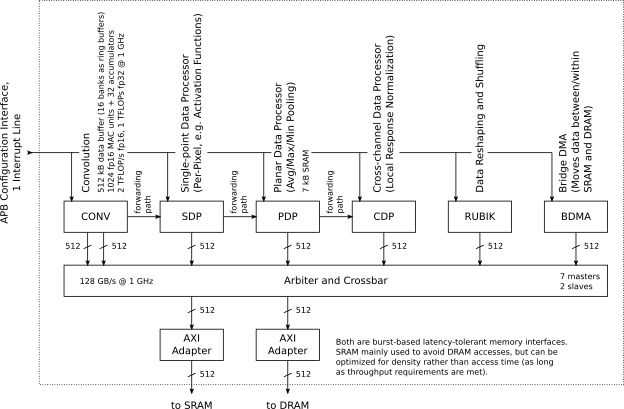

Machine learning is currently one of the hottest topics in industry. Many different parties are developing dedicated hardware accelerators for deep learning tasks, targeting an equally diverse set of applications. IIS and the PULP project are no exception: we have developed multiple accelerators targeted at different forms and stages of deep learning, as well as processors that are computationally capable of performing such tasks. NVIDIA, of GPU fame, has recently released its take on such an accelerator, called the NVIDIA Deep Learning Accelerator (NVDLA). In this Master Thesis we would like to find out if and how NVDLA can be a companion, competitor, accelerator, or encompassing framework to the PULP project and the accelerators/processors we have developed.

Project description

The purpose of this project is to get the NVIDIA Deep Learning Accelerator up and running, implement it in a modern ASIC technology node, and compare it against other accelerators in the PULP project. More specifically, the result of this project should show how NVDLA

- compares against NTX [Schuiki2018], a streaming floating-point accelerator

- compares against Ara, a vector processor based on the RISC-V Vector extension

- compares against RI5CY cluster/Ariane [Gautschi2017,Zaruba2018], two scalar RISC-V processors

in terms of performance, area, and power consumption. This includes getting familiar with NVDLA, understanding its programming model, and being able to launch computation on it. Since NVDLA is a large unit, we are also interested in whether and how it can be combined with PULP, and at what scale such a combination would be beneficial. The difference in scale will make it necessary to consider multiple NTX/Ara/RI5CY clusters/Ariane cores working in tandem in order to attain meaningful comparisons (such as same-compute, same-area, same-power, same-bandwidth settings).
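The matched settings mentioned above can be sketched as a small sizing calculation: given per-instance figures for a large reference unit and a smaller candidate unit, it estimates how many candidate instances to instantiate for each setting. This is only an illustrative sketch. The `UnitMetrics` type, the `instances_for_setting` helper, and all numeric values are hypothetical placeholders, not measured results for NVDLA, NTX, Ara, RI5CY, or Ariane. The rounding policy (round up to reach the reference compute or bandwidth, round down to stay within the reference area or power) is likewise an assumption.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class UnitMetrics:
    """Per-instance figures of one hardware unit (hypothetical type)."""
    name: str
    area_mm2: float
    power_mw: float
    peak_gops: float
    bandwidth_gb_s: float


def instances_for_setting(reference: UnitMetrics, candidate: UnitMetrics) -> dict[str, int]:
    """Estimate how many candidate instances correspond to one reference unit.

    Assumed policy: round up where the candidates must reach the reference
    (compute, bandwidth), round down where they must stay within the
    reference budget (area, power). At least one instance is always used.
    """
    return {
        "same-compute": max(1, math.ceil(reference.peak_gops / candidate.peak_gops)),
        "same-bandwidth": max(1, math.ceil(reference.bandwidth_gb_s / candidate.bandwidth_gb_s)),
        "same-area": max(1, math.floor(reference.area_mm2 / candidate.area_mm2)),
        "same-power": max(1, math.floor(reference.power_mw / candidate.power_mw)),
    }


# Placeholder numbers for illustration only; these are NOT synthesis results.
large_unit = UnitMetrics("large accelerator", area_mm2=4.0, power_mw=400.0,
                         peak_gops=1000.0, bandwidth_gb_s=20.0)
small_cluster = UnitMetrics("small cluster", area_mm2=0.5, power_mw=50.0,
                            peak_gops=100.0, bandwidth_gb_s=4.0)

counts = instances_for_setting(large_unit, small_cluster)
for setting, n in counts.items():
    print(f"{setting}: {n} x {small_cluster.name}")
```

In an actual comparison, the placeholder figures would be replaced by synthesis results for each design in the same technology node, and the scaling would additionally have to account for interconnect and memory overhead, which this sketch ignores.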

NVDLA is released as Verilog source code, while all PULP-related sources are in SystemVerilog and VHDL. It is essential that you know, or are willing to learn, your way around an HDL and ASIC implementation tools (see next section). As a first step we are interested in synthesis results only, but depending on the project's progress we can also consider doing place-and-route to get a feeling for how NVDLA behaves in the backend.

Required Skills

To work on this project, you will need:

- to have worked in the past with at least one RTL language (SystemVerilog or Verilog or VHDL) -- having followed the VLSI1 / VLSI2 courses is recommended

- to have prior knowledge of hardware design and computer architecture -- having followed the "Advanced System-on-Chip Design" or "Energy-Efficient Parallel Computing Systems for Data Analytics" course is recommended

- to have prior knowledge of basic machine learning, mainly DNNs/CNNs, which will be used as sample workloads

Other skills that you might find useful include:

- familiarity with git, the UNIX shell, C programming

- to be strongly motivated for a difficult but super-cool project

Status: Completed

- Semester Project of Davide Menini

- Supervision: Fabian Schuiki, Florian Zaruba

Professor

Practical Details

Meetings & Presentations

The students and advisor(s) agree on weekly meetings to discuss all relevant decisions and decide on how to proceed. Of course, additional meetings can be organized to address urgent issues.

At the end of the project, you have to present/defend your work during a 15 min. presentation and 5 min. of discussion as part of the IIS colloquium.

Literature

- [Schuiki2018] Schuiki, Fabian, et al. "A Scalable Near-Memory Architecture for Training Deep Neural Networks on Large In-Memory Datasets." [1]

- [Gautschi2017] Gautschi, Michael, et al. "Near-threshold RISC-V core with DSP extensions for scalable IoT endpoint devices." [2]

- [Zaruba2018] Zaruba, Florian. "Ariane: An open-source 64-bit RISC-V Application-Class Processor and latest Improvements." [3]