Online Learning of User Features (1S)

From iis-projects


Overview

Status: In progress

- Type: Semester Thesis

- Professor: Prof. Dr. L. Benini

- Supervisors:

- Cristian Cioflan (IIS): cioflanc@iis.ee.ethz.ch

- Dr. Lukas Cavigelli (Huawei Technologies): lukas.cavigelli@huawei.com

Short Description

The objective of keyword spotting (KWS) is to detect a set of predefined keywords within a stream of user utterances. Since this task is usually deployed on low-memory, low-power devices, a KWS module should achieve high accuracy while keeping both the memory footprint and the latency of the system low. In devices intended for personal use, adapting the model to the user’s characteristics can considerably improve the performance of the system. We want to explore how user-specific features can be exploited to improve the accuracy of a small-footprint KWS model.

Introduction

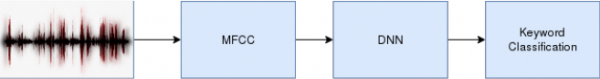

KWS is a speech-based approach to human-computer interaction. In a personal setting, it is mostly used to wake up a virtual assistant, such as Siri or Alexa. Therefore, the set of words to be detected (i.e., the classes to which a user utterance can be assigned) is usually small. Given the results reported for deep neural networks (DNNs) on classification tasks, and the low ratio of classes to training samples, high accuracies can be obtained. Such a system usually consists of two components. The first is a preprocessing step, in which the raw waveform is converted into a set of meaningful features. To extract the most relevant information from a user utterance, mel-frequency cepstral coefficients (MFCCs) have proven to be among the most effective features: their frequency bands are spaced on the mel scale, which closely approximates the response of the human auditory system. The second component is the DNN, which takes these features as input and outputs the probability of the utterance belonging to each class. A schematic of a KWS system can be seen in Figure 1.
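The mel spacing of the frequency bands can be illustrated with a short sketch. It assumes the widely used HTK-style conversion m = 2595 · log10(1 + f/700) (other mel formulas exist) and computes the center frequencies of a filter bank whose bands are equally spaced in mel, so they are dense at low frequencies and sparse at high ones. The 40 filters and the 8 kHz upper limit (the Nyquist frequency of 16 kHz audio) are illustrative choices.

```python
import math


def hz_to_mel(f_hz: float) -> float:
    """HTK-style mel conversion (one of several variants in use)."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


def mel_to_hz(m: float) -> float:
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_center_frequencies(n_filters: int, f_min: float, f_max: float) -> list[float]:
    """Center frequencies (Hz) of n_filters filters equally spaced on the mel scale."""
    m_min, m_max = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (m_max - m_min) / (n_filters + 1)
    # n_filters + 2 equally spaced mel points; the two outermost are band edges, not centers
    return [mel_to_hz(m_min + i * step) for i in range(1, n_filters + 1)]


centers = mel_center_frequencies(n_filters=40, f_min=0.0, f_max=8000.0)
# Spacing between neighbouring centers grows with frequency
print(f"first spacing: {centers[1] - centers[0]:.1f} Hz, "
      f"last spacing: {centers[-1] - centers[-2]:.1f} Hz")
```

A complete MFCC front end additionally takes the logarithm of the filter-bank energies and applies a discrete cosine transform; this sketch covers only the frequency spacing.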

Unlike image classification, in which a single image suffices to classify its content, speech-based classification relies heavily on temporal information: the pronunciation of a phoneme depends on the preceding phonemes, and a word can only be understood by concatenating its phonemes in order. For such time series, various DNN architectures have been proposed, e.g., temporal convolutional networks (TCNs), recurrent neural networks (RNNs), and transformers [1]–[3]. These approaches achieve high accuracy on well-established datasets such as [4], [5].
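One way TCNs capture the ordering of phonemes is by stacking dilated 1D convolutions, so that each output frame depends on a window of past frames. The sketch below computes that window (the receptive field) for a hypothetical stack of stride-1 layers; the kernel size, the dilations, and the 10 ms frame hop are illustrative assumptions, not values taken from the models in [1]–[3].

```python
def receptive_field(kernel_sizes: list[int], dilations: list[int]) -> int:
    """Receptive field (in frames) of a stack of stride-1 dilated 1D convolutions."""
    if len(kernel_sizes) != len(dilations):
        raise ValueError("one dilation per layer is required")
    # Each layer widens the window by (k - 1) * d frames
    return 1 + sum((k - 1) * d for k, d in zip(kernel_sizes, dilations))


# Hypothetical TCN: four layers, kernel size 3, dilation doubling per layer
rf = receptive_field([3, 3, 3, 3], [1, 2, 4, 8])
hop_ms = 10  # assumed feature hop of 10 ms per frame
print(rf, "frames ~", rf * hop_ms, "ms of context")  # 31 frames ~ 310 ms of context
```

Doubling the dilation per layer makes the temporal context grow exponentially with depth while the number of weights grows only linearly, which matters under the footprint constraints of KWS devices.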

Another application in which DNNs have proven successful is feature embedding. An embedding is a mapping from a discrete variable to a vector of continuous values. Compared with other encoding methods (e.g., one-hot encoding), embeddings have a low dimensionality and can be learned in a supervised fashion. Additionally, by projecting the input features into the embedding space of the neural network, one can perform meaningful comparisons between features using distance measures. Historically, this concept has been successfully applied in recommender systems, in which a user is offered relevant suggestions (e.g., films, books) by comparing their interests against clusters formed by other users’ interests. In other words, the engine adapts itself based on user-specific features.
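The contrast between one-hot encodings and embeddings can be sketched as follows. A one-hot vector has as many entries as there are items, and the vectors of any two distinct items are orthogonal, so a distance measure cannot express that two items are similar; a dense embedding can. The table values below are made up for illustration; in practice they would be learned.

```python
import math


def one_hot(index: int, n_items: int) -> list[float]:
    """One-hot encoding: dimensionality equals the number of items."""
    v = [0.0] * n_items
    v[index] = 1.0
    return v


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


# Hypothetical 3-dimensional embeddings for 4 items (illustrative values, normally learned)
embedding_table = [
    [0.9, 0.1, 0.0],  # item 0
    [0.8, 0.2, 0.1],  # item 1, close to item 0
    [0.0, 0.1, 0.9],  # item 2
    [0.1, 0.9, 0.2],  # item 3
]

print(cosine_similarity(one_hot(0, 4), one_hot(1, 4)))  # 0.0: distinct one-hot vectors are orthogonal
print(round(cosine_similarity(embedding_table[0], embedding_table[1]), 3))  # high: similar items
print(round(cosine_similarity(embedding_table[0], embedding_table[2]), 3))  # low: dissimilar items
```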

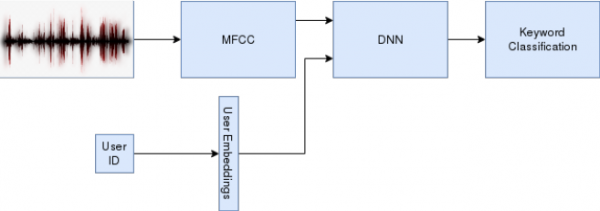

Apart from forming a standalone network, embeddings can also be used as inputs to a DNN, as seen in Figure 2. In this way, a pretrained model can be adapted to the user’s characteristics, potentially exceeding the performance of the generic model. It also means that a user can be identified based on their embedding. Our goal is to devise a KWS system that integrates user features and is trained online on the incoming user-specific data. As such systems are usually deployed on always-on, low-power devices, a greedy policy for updating each user’s feature vector should be employed. Additionally, since such systems are also used in wearable devices, the memory footprint, understood as the number of parameters of the model, should be small.
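Identifying a user from their embedding can be sketched as a nearest-neighbor search over stored user vectors using cosine similarity. The user IDs, the vectors, and the acceptance threshold are hypothetical, and how an embedding is extracted from an utterance is left open here.

```python
import math
from typing import Optional


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def identify_user(query: list[float], enrolled: dict[str, list[float]],
                  threshold: float = 0.8) -> Optional[str]:
    """Return the most similar enrolled user, or None if no one is similar enough."""
    best_id, best_sim = None, -1.0
    for user_id, vec in enrolled.items():
        sim = cosine_similarity(query, vec)
        if sim > best_sim:
            best_id, best_sim = user_id, sim
    # Reject queries that are not close to any enrolled user
    return best_id if best_sim >= threshold else None


# Hypothetical enrolled user embeddings (illustrative values)
enrolled = {"alice": [0.9, 0.1, 0.2], "bob": [0.1, 0.8, 0.5]}
print(identify_user([0.85, 0.15, 0.25], enrolled))  # alice
print(identify_user([0.0, 0.0, 1.0], enrolled))     # None
```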

Character

- 20% literature research

- 70% neural network implementation

- 10% evaluation

Prerequisites

- Must be familiar with Python.

- Knowledge of deep learning basics, including a deep learning framework such as PyTorch or TensorFlow, acquired from a course, a project, or self-study with tutorials.

Project Goals

The main tasks of this project are:

Task 1: Familiarize yourself with the project specifics (1-2 Weeks)

Learn about DNN training in PyTorch and how to visualize results with TensorBoard. Read up on DNN models for time series (e.g., TCNs, RNNs, transformer networks) and on recent advances in KWS. Read up on feature embeddings.

Task 2: Devise a DNN with a performance comparable to the state-of-the-art (3-4 Weeks)

Select a dataset and analyse the models that could serve as baselines for our work. In particular, check for publicly available code.

If no code is available: design, implement, and train a KWS model, considering the three-way trade-off between accuracy, number of parameters, and latency.
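For the parameter side of this trade-off, it helps to count weights per layer. The sketch compares a standard 1D convolution with a time-channel separable one (a depthwise convolution over time followed by a pointwise 1x1 convolution across channels), the kind of building block used in MatchboxNet [2]. The channel counts and kernel size are illustrative, and biases are ignored.

```python
def conv1d_params(c_in: int, c_out: int, k: int) -> int:
    """Weights of a standard 1D convolution (bias ignored)."""
    return c_in * c_out * k


def separable_conv1d_params(c_in: int, c_out: int, k: int) -> int:
    """Depthwise conv over time (one k-tap filter per channel) plus pointwise 1x1 conv."""
    depthwise = c_in * k
    pointwise = c_in * c_out
    return depthwise + pointwise


# Illustrative layer: 64 -> 64 channels, kernel size 13
standard = conv1d_params(64, 64, 13)             # 64 * 64 * 13 = 53248
separable = separable_conv1d_params(64, 64, 13)  # 64 * 13 + 64 * 64 = 4928
print(standard, separable, round(standard / separable, 1))  # 53248 4928 10.8
```

The parameter count is only a proxy for latency; actual runtime depends on the target hardware and should be measured separately.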

Compare the model against the selected baseline and against the figures reported in the corresponding paper.

Task 3: Integrate user features into the designed DNN (6-7 Weeks)

Implement user embeddings that map a user ID to a feature vector, which serves as an additional input to the DNN.
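A minimal sketch of this step, assuming the user vector is concatenated to every MFCC frame before the first layer (one of several possible integration points; the concatenation, the dimensions, and the class name `UserEmbedding` are all assumptions). It also counts the parameters the embedding table adds, which feeds into the parameter comparison of this task.

```python
import random

N_MFCC = 10   # assumed number of MFCCs per frame
EMB_DIM = 8   # assumed size of the user feature vector


class UserEmbedding:
    """Hypothetical lookup table mapping a user ID to a trainable feature vector."""

    def __init__(self, n_users: int, dim: int, seed: int = 0):
        rng = random.Random(seed)
        # Small random initialization, one row per user
        self.table = [[rng.gauss(0.0, 0.1) for _ in range(dim)] for _ in range(n_users)]

    def __call__(self, user_id: int) -> list[float]:
        return self.table[user_id]

    def num_parameters(self) -> int:
        return len(self.table) * len(self.table[0])


def with_user_features(frames: list[list[float]], user_vec: list[float]) -> list[list[float]]:
    """Append the same user vector to every frame: shape time x (N_MFCC + EMB_DIM)."""
    return [frame + user_vec for frame in frames]


emb = UserEmbedding(n_users=100, dim=EMB_DIM)
utterance = [[0.0] * N_MFCC for _ in range(49)]  # placeholder MFCC frames
x = with_user_features(utterance, emb(user_id=7))
print(len(x), len(x[0]), emb.num_parameters())  # 49 18 800
```

Besides the table itself, the first layer now receives EMB_DIM extra input channels, so its weights grow as well; both contributions belong in the parameter comparison against the generic DNN.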

Devise a greedy update policy for the feature vector.
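One possible reading of a greedy update policy, stated here as an assumption: after each utterance whose label is known (e.g., confirmed by the user), take a single gradient step on that user’s vector only, keep the pretrained network frozen, and store nothing but the updated vector. The sketch uses a hypothetical linear classification head in plain Python so that the gradient can be written out explicitly; in PyTorch, autograd would compute it.

```python
import math


def softmax(z: list[float]) -> list[float]:
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def greedy_user_update(u: list[float], x: list[float], label: int,
                       W: list[list[float]], b: list[float],
                       lr: float = 0.1) -> tuple[list[float], float]:
    """One SGD step on the user vector u only; the head (W, b) stays frozen.

    Logits are W @ [x; u] + b (hypothetical linear head). Returns the updated
    user vector and the cross-entropy loss measured before the update.
    """
    inp = x + u
    logits = [sum(w * v for w, v in zip(row, inp)) + b_k for row, b_k in zip(W, b)]
    p = softmax(logits)
    loss = -math.log(p[label])
    # dL/dlogits = p - one_hot(label); dL/du = W_u^T (p - y), W_u = columns acting on u
    err = [p_k - (1.0 if k == label else 0.0) for k, p_k in enumerate(p)]
    offset = len(x)
    grad_u = [sum(err[k] * W[k][offset + j] for k in range(len(W))) for j in range(len(u))]
    return [u_j - lr * g_j for u_j, g_j in zip(u, grad_u)], loss


# Illustrative frozen head: 3 classes, 2 acoustic features + 2 user features
W = [[0.5, -0.2, 1.0, 0.0],
     [0.1, 0.3, 0.0, 1.0],
     [-0.4, 0.2, -1.0, -1.0]]
b = [0.0, 0.0, 0.0]
x = [1.0, 0.5]  # stand-in for pooled acoustic features
u = [0.0, 0.0]  # new user's vector, initialized at zero
losses = []
for _ in range(20):
    u, loss = greedy_user_update(u, x, label=1, W=W, b=b, lr=0.5)
    losses.append(loss)
print(round(losses[0], 3), round(losses[-1], 3))
```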

Compare the model’s accuracy against that of the generic DNN, and quantify the change in number of parameters and latency.

Evaluate the model on self-generated input data to assess its adaptability.

Repeat this task with other conceivable options to integrate user features.

Task 4: Model optimization (Optional)

Optimize the model w.r.t. latency (e.g. skip connections, early exits) and/or memory footprint (e.g. pruning, quantization, weight sharing, knowledge distillation techniques).
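As an example of the memory-footprint techniques listed above, symmetric per-tensor int8 quantization stores each weight in one byte plus a single shared float scale, roughly a 4x reduction compared with float32. The sketch is a generic illustration and is not tied to any particular quantization toolchain.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization to the int8 range [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.03, 0.9, -0.5]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale)
print(f"max abs error {max_err:.4f} (bounded by scale/2 = {scale / 2:.4f})")
```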

Integrate federated learning in order to share knowledge among multiple parties without compromising user privacy and data security.
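A common way to combine models in federated learning is federated averaging (FedAvg): each device trains locally on its own recordings and sends only its model parameters, and the server averages them, weighting each device by its number of local samples, so raw audio is not transmitted. Keeping the user embeddings on-device and averaging only the shared weights is an assumption made for this sketch, as are the client values.

```python
def federated_average(client_params: list[list[float]], client_sizes: list[int]) -> list[float]:
    """FedAvg-style aggregation of flattened parameter vectors, weighted by local dataset size."""
    total = sum(client_sizes)
    n_params = len(client_params[0])
    return [
        sum(params[i] * size for params, size in zip(client_params, client_sizes)) / total
        for i in range(n_params)
    ]


# Three hypothetical devices with flattened shared weights (user embeddings stay on-device)
clients = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
sizes = [10, 30, 60]
print(federated_average(clients, sizes))  # [4.0, 5.0]
```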

Task 5: Gather and Present Final Results (2-3 Weeks)

Gather final results.

Prepare presentation (15 min. + 5 min. discussion).

Write a final report. Include all major decisions taken during the design process and justify your choices. Include everything that deviates from the standard case: show off everything that took time to figure out and all your ideas that have influenced the project.

Project Organization

Weekly Meetings

The student shall meet with the advisor(s) every week to discuss any problems that arose during the previous week and to propose next steps. These meetings are meant to provide a guaranteed time slot for the mutual exchange of information on how to proceed, to clear up any questions from either side, and to ensure the student’s progress.

Report

Documentation is an important and often overlooked aspect of engineering. One final report has to be completed within this project. Any word processing software may be used to write the report; nevertheless, the IIS staff strongly encourages the use of LaTeX together with Tgif (see: http://bourbon.usc.edu:8001/tgif/index.html and http://www.dz.ee.ethz.ch/en/information/how-to/drawing-schematics.html) or any other vector drawing software (for block diagrams).

Final Report

A digital copy of the report, the presentation, the developed software, build script/project files, drawings/illustrations, acquired data, etc. needs to be handed in at the end of the project. Note that this task description is part of your report and has to be attached to your final report.

Presentation

At the end of the project, the outcome of the thesis will be presented in a 15-minute talk followed by 5 minutes of discussion in front of interested members of the Integrated Systems Laboratory. The presentation is open to the public, so you are welcome to invite interested friends. The exact date will be determined towards the end of the work.

References

[1] D. Coimbra de Andrade, S. Leo, M. Loesener Da Silva Viana, and C. Bernkopf, “A neural attention model for speech command recognition,” ArXiv e-prints, Aug. 2018.

[2] S. Majumdar and B. Ginsburg, “MatchboxNet: 1D Time-Channel Separable Convolutional Neural Network Architecture for Speech Commands Recognition,” in Proc. Interspeech 2020, 2020, pp. 3356–3360.

[3] M. Zeng and N. Xiao, “Effective combination of DenseNet and BiLSTM for keyword spotting,” IEEE Access, vol. 7, pp. 10767–10775, 2019.

[4] B. Kim, M. Lee, J. Lee, Y. Kim, and K. Hwang, “Query-by-example on-device keyword spotting,” in Proc. 2019 IEEE Automatic Speech Recognition and Understanding Workshop (ASRU), 2019, pp. 532–538.

[5] P. Warden, “Speech Commands: A Dataset for Limited-Vocabulary Speech Recognition,” ArXiv e-prints, Apr. 2018.