PULPonFPGA: Hardware L2 Cache

Short Description

While high-end heterogeneous systems-on-chip (SoCs) increasingly support heterogeneous uniform memory access (hUMA), their low-power counterparts targeting the embedded domain still lack basic features such as virtual memory support for accelerators. Instead of simply passing virtual address pointers, sharing data between the host processor and the accelerators requires explicit data management involving copies, which hampers both programmability and performance.

At IIS, we study the integration of programmable many-core accelerators into embedded heterogeneous SoCs. We have developed a mixed hardware/software solution to enable lightweight virtual memory support for many-core accelerators in heterogeneous embedded SoCs [1,2]. Recently, we have switched to a new evaluation platform based on the ARM Juno Development Platform [3]. This system combines a modern ARMv8 multicluster CPU with a Xilinx Virtex-7 XC7V2000T FPGA [4] capable of implementing PULP [5] with 32 to 64 cores. The two subsystems

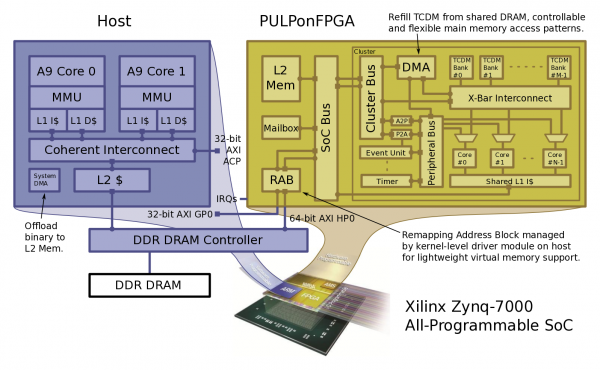

We use an evaluation platform based on the Xilinx Zynq-7000 SoC [1] with PULPonFPGA [2] implemented in the programmable logic to study the integration of programmable many-core accelerators into embedded heterogeneous SoCs.

Our current solution allows the programmer to share virtual address pointers between the host processor and the accelerator in a completely transparent manner. To optimally exploit the system's memory hierarchy and achieve high performance, however, the programmer must still manually orchestrate DMA transfers between the shared main memory and the accelerator's low-latency tightly-coupled data memory (TCDM), an L1 scratchpad memory.
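To illustrate what this manual orchestration can look like, the following is a minimal sketch in C, not the actual PULP runtime code. It sums an array in main memory by staging it tile by tile into two TCDM-like buffers (double buffering). All names (`dma_copy`, `tcdm_buf`, `TILE_WORDS`, `sum_with_manual_dma`) are hypothetical, and the DMA engine is replaced by a synchronous `memcpy` so that the sketch runs on any host.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define TILE_WORDS 64u  /* hypothetical tile size in 32-bit words */

/* Stand-in for the TCDM: two tile buffers for double buffering. */
static uint32_t tcdm_buf[2][TILE_WORDS];

/* Stand-in for a DMA transfer (assumption). A real runtime would start the
 * transfer asynchronously and offer a separate call to wait for completion. */
static void dma_copy(void *dst, const void *src, size_t bytes) {
    memcpy(dst, src, bytes);
}

/* Number of valid words in the given tile (the last tile may be partial). */
static size_t tile_len(size_t tile, size_t n_words) {
    size_t start = tile * TILE_WORDS;
    assert(start < n_words);
    size_t left = n_words - start;
    return left < TILE_WORDS ? left : TILE_WORDS;
}

/* Sum an array living in "main memory" by staging it through the buffers. */
uint64_t sum_with_manual_dma(const uint32_t *main_mem, size_t n_words) {
    if (n_words == 0) return 0;
    size_t n_tiles = (n_words + TILE_WORDS - 1) / TILE_WORDS;
    uint64_t sum = 0;

    /* Fetch the first tile before entering the compute loop. */
    dma_copy(tcdm_buf[0], main_mem, tile_len(0, n_words) * sizeof(uint32_t));

    for (size_t t = 0; t < n_tiles; t++) {
        size_t cur = t & 1u;

        /* Fetch the next tile into the other buffer. With a real DMA engine
         * this transfer would overlap with the computation below. */
        if (t + 1 < n_tiles) {
            dma_copy(tcdm_buf[cur ^ 1u], main_mem + (t + 1) * TILE_WORDS,
                     tile_len(t + 1, n_words) * sizeof(uint32_t));
        }

        /* With a real DMA engine, wait for tile t to arrive here. */
        size_t len = tile_len(t, n_words);
        for (size_t i = 0; i < len; i++) sum += tcdm_buf[cur][i];
    }
    return sum;
}
```

Even for this simple reduction, the programmer has to pick a tile size, handle the partial last tile, and keep track of which buffer is being filled and which is being consumed. This bookkeeping is what the project aims to hide.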

The idea of this project is to enhance our solution with a software cache similar to [5] that uses part of the TCDM to filter the accelerator's accesses to shared data structures living in main memory, and to spare the programmer from manually setting up the DMA transfers, thereby increasing performance and programmability, respectively. To this end, you will need to:

- Study existing software caches such as [5].

- Design and implement your own software cache suitable for the heterogeneous platform at hand.

- Set up operation on the evaluation platform, and characterize the enhanced solution using heterogeneous benchmark applications.
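As a starting point for the design task, the basic mechanism of a software cache can be sketched as follows. This is a deliberately simple direct-mapped, write-back cache in plain C and is not the design of [5]: it is not thread-safe, main memory is modeled as a byte array addressed by offset rather than by shared virtual pointers, and line refills use `memcpy` where a real implementation would issue DMA transfers. All names and parameters (`swc_t`, `swc_read32`, `LINE_BYTES`, `NUM_LINES`, and so on) are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define LINE_BYTES 32u  /* hypothetical cache line size */
#define NUM_LINES  16u  /* hypothetical number of lines held in TCDM */

typedef struct {
    bool     valid, dirty;
    uint32_t tag;               /* line number in main memory */
    uint8_t  data[LINE_BYTES];
} swc_line_t;

typedef struct {
    uint8_t   *main_mem;         /* stand-in for shared main memory */
    swc_line_t lines[NUM_LINES]; /* would be placed in TCDM */
    unsigned   hits, misses;
} swc_t;

void swc_init(swc_t *c, uint8_t *main_mem) {
    memset(c, 0, sizeof(*c));
    c->main_mem = main_mem;
}

/* Return the cache line holding addr, refilling it on a miss.
 * No bounds checking: addr must lie inside the main_mem array. */
static swc_line_t *swc_lookup(swc_t *c, uint32_t addr) {
    uint32_t line_no = addr / LINE_BYTES;
    swc_line_t *l = &c->lines[line_no % NUM_LINES];
    if (l->valid && l->tag == line_no) { c->hits++; return l; }

    c->misses++;
    if (l->valid && l->dirty)  /* write the victim line back first */
        memcpy(c->main_mem + (size_t)l->tag * LINE_BYTES, l->data, LINE_BYTES);
    /* Refill; a real implementation would use a DMA transfer here. */
    memcpy(l->data, c->main_mem + (size_t)line_no * LINE_BYTES, LINE_BYTES);
    l->valid = true;
    l->dirty = false;
    l->tag = line_no;
    return l;
}

uint32_t swc_read32(swc_t *c, uint32_t addr) {
    assert(addr % 4 == 0);
    swc_line_t *l = swc_lookup(c, addr);
    uint32_t v;
    memcpy(&v, &l->data[addr % LINE_BYTES], sizeof v);
    return v;
}

void swc_write32(swc_t *c, uint32_t addr, uint32_t v) {
    assert(addr % 4 == 0);
    swc_line_t *l = swc_lookup(c, addr);
    memcpy(&l->data[addr % LINE_BYTES], &v, sizeof v);
    l->dirty = true;
}

/* Write all dirty lines back, e.g. before handing data to the host. */
void swc_flush(swc_t *c) {
    for (size_t i = 0; i < NUM_LINES; i++) {
        swc_line_t *l = &c->lines[i];
        if (l->valid && l->dirty) {
            memcpy(c->main_mem + (size_t)l->tag * LINE_BYTES, l->data, LINE_BYTES);
            l->dirty = false;
        }
    }
}
```

An accelerator kernel would then replace direct loads and stores to shared data with `swc_read32` and `swc_write32`, and call `swc_flush` before the host reads the results. The `hits` and `misses` counters give a first handle for the characterization step. Associativity, line size, replacement policy, and synchronization between cores are open design choices that this sketch does not address.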

Status: Available

- Looking for Interested Master Students

- Supervision: Pirmin Vogel, Andrea Marongiu

Character

- 20% Theory, Algorithms and Simulation

- 5% VHDL, FPGA Design

- 60% Accelerator Runtime Development (C)

- 5% Linux Kernel-Level Driver Development

- 10% User-space Runtime and Application Development for Host and Accelerator

Prerequisites

- VLSI I

- VHDL/SystemVerilog, C

Professor

References

- Xilinx Zynq-7000 All-Programmable SoC.

- PULP.

- P. Vogel, A. Marongiu, L. Benini, "Lightweight Virtual Memory Support for Many-Core Accelerators in Heterogeneous Embedded SoCs", Proceedings of the 10th International Conference on Hardware/Software Codesign and System Synthesis (CODES+ISSS'15), Amsterdam, The Netherlands, 2015.

- P. Vogel, A. Marongiu, L. Benini, "Lightweight Virtual Memory Support for Zero-Copy Sharing of Pointer-Rich Data Structures in Heterogeneous Embedded SoCs", to be published, 2016.

- C. Pinto, L. Benini, "A Highly Efficient, Thread-Safe Software Cache Implementation for Tightly-Coupled Multicore Clusters", Proceedings of the 24th International Conference on Application-Specific Systems, Architectures and Processors (ASAP'13), Washington, DC, USA, 2013.

- D. J. Sorin, M. D. Hill, D. A. Wood, "A Primer on Memory Consistency and Cache Coherence", Morgan & Claypool Publishers, 2011.