Semi-Custom Digital VLSI for Processing-in-Memory

From iis-projects

Revision as of 14:28, 28 July 2021

Short Description

Traditional hardware architectures separate memory from processing (logic) elements. Unfortunately, the ever-growing gap between computing performance and memory access times has led these architectures to hit a “memory wall,” where memory operations consume most of a computation's time, energy, and bandwidth. This problem is further aggravated by the rise of applications such as machine learning, data mining, and 5G wireless communication, where massive amounts of data must be processed at high rates.

Processing-in-memory (PIM) is an emerging hardware paradigm that proposes moving memory elements closer to computation elements in order to break through the memory wall. PIM has been explored extensively in recent papers, but most of these works rely on analog computation or emerging semiconductor devices. As a result, these architectures are often (i) difficult to design, test, or migrate to other technology nodes, due to their analog components, and/or (ii) not yet applicable, due to their reliance on immature semiconductor technology.

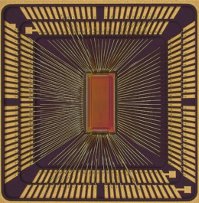

Recently, we proposed PPAC (Parallel Processor in Associative Content-Addressable Memory) [1], a PIM architecture that accelerates several operations whose structure is similar to that of a matrix-vector product. Unlike other PIM architectures, PPAC is fully digital and built exclusively from CMOS standard cells, which makes it easy to design, implement, and test. We have shown that PPAC achieves an energy efficiency competitive with that of PIM designs that rely on analog computation, and that it can achieve better area and energy efficiency than conventional digital architectures performing the same operations. These results demonstrate that the benefits of PIM can be reaped even with technology that is commercially available today.
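
To make "operations whose structure is similar to a matrix-vector product" concrete, here is a minimal behavioral sketch in Python. It assumes that each row of a PPAC-like array stores one N-bit word and that the input vector is broadcast to all rows. Each row then computes a Hamming similarity (bitwise XNOR followed by a population count), and mapping bits {0, 1} to {-1, +1} turns that count h into an inner product via ⟨a, x⟩ = 2h − N. The function names are hypothetical, and the sketch models only the arithmetic, not PPAC's actual circuits or its full set of supported operations.

```python
from typing import List


def hamming_similarity(word: int, x: int, n: int) -> int:
    """Number of bit positions (out of n) where `word` and `x` agree."""
    mask = (1 << n) - 1
    agree = ~(word ^ x) & mask      # bitwise XNOR, one result per bit-cell
    return bin(agree).count("1")    # population count along the row


def pm1_matvec(rows: List[int], x: int, n: int) -> List[int]:
    """Inner products <a_i, x> when bit 1 encodes +1 and bit 0 encodes -1.

    For n-bit vectors: <a, x> = matches - mismatches = 2*h - n.
    """
    return [2 * hamming_similarity(word, x, n) - n for word in rows]


if __name__ == "__main__":
    rows = [0b1011, 0b0010, 0b1111]   # one stored word per array row
    x = 0b1001                        # input vector broadcast to all rows
    print([hamming_similarity(w, x, 4) for w in rows])  # [3, 1, 2]
    print(pm1_matvec(rows, x, 4))                        # [2, -2, 0]
```

Every row evaluates its XNORs and popcount in parallel in such an array, which is why a single broadcast of x yields a whole matrix-vector product at once.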

However, the current PPAC implementation uses generic standard cells that have not been optimized for the operations at hand. The goal of this project is to design custom standard cells that further improve the area and energy efficiency of PPAC. To do so, the student will first learn how to design custom standard cells and how to characterize them so that they can be integrated into a standard design flow (synthesis and place-and-route). Next, the student will review the operations supported by PPAC and the standard cells that the synthesis tool selects to implement them. The student will then explore different standard-cell optimizations, including, but not limited to, the use of different logic styles or the design of larger macro-cells that can be replicated to form a PPAC array. Finally, the student will implement the custom standard cells in an advanced technology node so that a redesigned PPAC ASIC can be sent to fabrication. This project requires knowledge of digital logic and VLSI design.

[1] O. Castañeda, M. Bobbett, A. Gallyas-Sanhueza, and C. Studer, "PPAC: A Versatile In-Memory Accelerator for Matrix-Vector-Product-Like Operations," in Proc. IEEE 30th International Conference on Application-specific Systems, Architectures and Processors (ASAP), July 2019.

Status: Completed

- Student: Yannick Baumann

- Date: Spring Semester 2021 (bsc21f5)

- Supervision: Oscar Castañeda

Prerequisites

- VLSI I

- VLSI II (recommended)

Character

- 20% Applications

- 30% Standard-cell layout

- 50% VLSI Design

Professor

Detailed Task Description

Practical Details