Smart Virtual Memory Sharing


Introduction

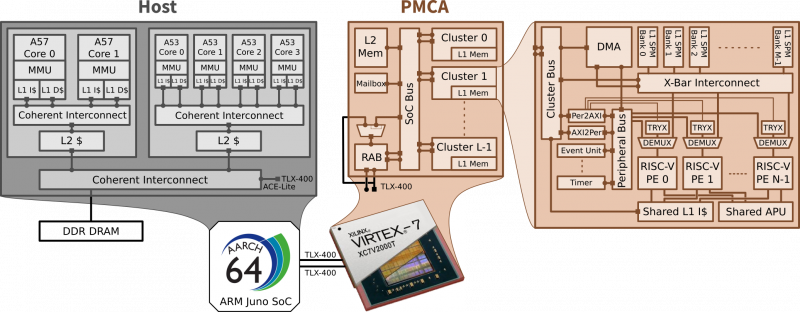

Fueled by the ever-increasing need for better performance per watt, modern embedded systems-on-chip (SoCs) are heavily based on heterogeneous architectures, where a powerful host processor is coupled to massively parallel, programmable many-core accelerators (PMCAs). While such architectures promise tremendous GOPS/watt targets, the burden of effectively using them is still a major challenge to be addressed by the programmers.

The main difficulty in traditional accelerator programming stems from a widely adopted partitioned memory model. The host application creates data buffers in main memory (typically external DRAM) and manages them transparently through coherent caches and virtual memory. The accelerator features local, private, physically addressed memory. An offload sequence to the accelerator requires explicit data management, which includes i) programming direct memory access (DMA) engines to copy data to/from the accelerator and ii) manually maintaining data consistency with explicit coherency operations such as cache flushes.

In an effort to simplify the programmability of heterogeneous systems, initiatives such as the Heterogeneous System Architecture foundation (HSA) are pushing for an architectural model where the host processor and the accelerator(s) communicate via coherent shared memory. The HSA memory architecture moves management of host and accelerator memory coherency from the programmer’s hands down to the hardware. This enables direct and transparent access to system memory from both sides, eliminating the need for explicit management of different memories. In this scenario, an offload sequence simply consists of passing virtual memory pointers to shared data from the host to the accelerator, in the same way that shared memory parallel programs pass pointers between threads running on a CPU.
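The difference between the two offload models can be sketched with a loose analogy in Python. This is not accelerator code: a plain function call or thread stands in for the accelerator, a list copy stands in for a DMA transfer, and a list reference stands in for a virtual memory pointer. The function names are hypothetical.

```python
import threading


def scale_in_place(buf, factor):
    """Kernel run by the 'accelerator': scales every element of buf."""
    for i in range(len(buf)):
        buf[i] *= factor


def offload_partitioned(host_buf, factor):
    """Partitioned model: explicit copy-in, compute, copy-out."""
    local = list(host_buf)         # copy-in (stands in for a DMA transfer)
    scale_in_place(local, factor)  # kernel works on the private copy only
    host_buf[:] = local            # copy-out (stands in for DMA plus cache maintenance)


def offload_shared(host_buf, factor):
    """Shared model: only a reference to the host buffer is passed."""
    worker = threading.Thread(target=scale_in_place, args=(host_buf, factor))
    worker.start()
    worker.join()


data_a = [1, 2, 3, 4]
data_b = [1, 2, 3, 4]
offload_partitioned(data_a, 3)
offload_shared(data_b, 3)
print(data_a, data_b)
```

Both variants produce the same result, but only the partitioned one contains data-management code that the programmer has to write and keep correct.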

While heterogeneously shared virtual memory (SVM) is still not a well-established reality, high-end heterogeneous systems are moving towards such an abstraction. In fact, AMD’s A-Series APUs and the 6th Generation Intel Core Processors (with Intel Processor Graphics Gen9) support shared virtual memory according to the OpenCL 2.0 specification. Regarding mobile heterogeneous SoCs, Qualcomm’s next-generation, high-end, SVM-supporting GPU Adreno 530 has been released as part of the Snapdragon 820 mobile flagship processor. ARM’s fully cache-coherent GPU called Mimir will probably be announced in late 2016. The hardware support required for heterogeneous SVM is substantial. While it could be justified in the context of high-end, high-performance GPGPUs, it is probably not affordable for PMCAs targeting low-power embedded SoCs - just like data caches and associated coherency protocols, which are typically replaced by software-managed scratchpad memories (SPMs) for increased scalability and maximum energy efficiency.

Description

For a wide range of offload-based applications, the performance of our mixed hardware/software SVM solution is comparable to an ideal hardware-managed IOMMU. However, when it comes to irregular algorithms with a high communication-to-computation ratio (CCR), the increased cost of software-based IOTLB-miss handling can no longer be amortized and the performance of the system deteriorates.
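The amortization argument can be illustrated with a back-of-the-envelope model. The sketch below is illustrative only: the function `slowdown` and every cycle count and miss rate in it are hypothetical placeholders, not measurements from the evaluation platform.

```python
def slowdown(compute_cycles, miss_rate, miss_cost, hit_cost=1):
    """Runtime per shared-memory access relative to a TLB that never misses.

    All arguments are in cycles except miss_rate (misses per access).
    """
    ideal = compute_cycles + hit_cost
    return (ideal + miss_rate * miss_cost) / ideal


SW_MISS = 1000  # hypothetical cost of a software-handled IOTLB miss
HW_MISS = 100   # hypothetical cost of a hardware page-table walk

# Low CCR: much computation per access, misses are rare.
regular_sw = slowdown(compute_cycles=50, miss_rate=0.001, miss_cost=SW_MISS)
regular_hw = slowdown(compute_cycles=50, miss_rate=0.001, miss_cost=HW_MISS)

# High CCR: little computation per access, misses are frequent.
irregular_sw = slowdown(compute_cycles=2, miss_rate=0.05, miss_cost=SW_MISS)
irregular_hw = slowdown(compute_cycles=2, miss_rate=0.05, miss_cost=HW_MISS)

print(f"regular:   software {regular_sw:.2f}x, hardware {regular_hw:.2f}x")
print(f"irregular: software {irregular_sw:.2f}x, hardware {irregular_hw:.2f}x")
```

Under these assumed numbers, the gap between software and hardware miss handling is negligible in the first case and dominates the runtime in the second.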

To avoid costly TLB misses, typical MMUs found in today’s CPUs use dedicated hardware for speculative TLB prefetching, comparable to the prefetching logic in data caches. Similar techniques have also been proposed for hardware IOMMUs. They typically achieve good performance for applications with regular stride patterns or streaming behavior. On the other hand, such designs must be of low complexity to allow for a hardware implementation in the first place, which constrains their flexibility and configurability. In fact, software prefetching schemes have been shown to be more effective for short streams, irregular access patterns and large numbers of parallel streams. Such techniques rely on the automated or manual insertion of load operations for compiler- or software-based prefetching, respectively. Since these load operations are simply inserted into the actual program code executed by the processor, the amount of prefetching must be carefully tuned to trade off TLB or cache misses against additional load operations. Moreover, since both caches and the virtual memory abstraction in today’s processors are completely transparent to the compiler/application developer, there is no possibility for hardware- and software-based prefetching mechanisms to communicate or synchronize. In practice, both symbiotic and antagonistic interactions between hardware and software prefetching mechanisms can be observed.
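The contrast between simple hardware-style prefetching and software hints can be sketched with a toy simulation. The model below is an assumption-laden illustration, not the design studied in this project: `ToyTLB`, its 8-entry fully associative LRU organization, the next-page prefetcher and the `hint` interface are all hypothetical, and prefetches are treated as free (off the critical path).

```python
import random
from collections import OrderedDict

PAGE_SIZE = 4096  # assumed page size in bytes


class ToyTLB:
    """Fully associative TLB with LRU replacement (illustrative model only)."""

    def __init__(self, entries=8, next_page_prefetch=False):
        self.entries = entries
        self.next_page_prefetch = next_page_prefetch
        self.pages = OrderedDict()  # virtual page numbers, least recent first
        self.misses = 0

    def _fill(self, vpn):
        """Insert or refresh a translation, evicting the LRU entry if full."""
        self.pages[vpn] = True
        self.pages.move_to_end(vpn)
        while len(self.pages) > self.entries:
            self.pages.popitem(last=False)

    def hint(self, vaddr):
        """Software prefetch: the application announces a future access."""
        self._fill(vaddr // PAGE_SIZE)

    def access(self, vaddr):
        vpn = vaddr // PAGE_SIZE
        if vpn not in self.pages:
            self.misses += 1
        self._fill(vpn)
        if self.next_page_prefetch:
            self._fill(vpn + 1)  # hardware-style speculation on the next page


def count_misses(addrs, next_page_prefetch=False, lookahead=0):
    """Replay addrs; with lookahead > 0, hint the access that many steps ahead."""
    tlb = ToyTLB(next_page_prefetch=next_page_prefetch)
    for i, addr in enumerate(addrs):
        if lookahead and i + lookahead < len(addrs):
            tlb.hint(addrs[i + lookahead])
        tlb.access(addr)
    return tlb.misses


streaming = [i * 1024 for i in range(256)]  # sequential sweep over 64 pages
rng = random.Random(0)
irregular = [rng.randrange(1024) * PAGE_SIZE for _ in range(1000)]

for name, trace in (("streaming", streaming), ("irregular", irregular)):
    print(name,
          "none:", count_misses(trace),
          "next-page:", count_misses(trace, next_page_prefetch=True),
          "hinted:", count_misses(trace, lookahead=2))
```

In this model, next-page speculation removes almost all misses for the streaming trace but barely helps the irregular one, whereas hints from the application hide the misses in both cases. Real software prefetching is not free, which is why its amount has to be tuned as described above.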

The goal of this project is to study the applicability of existing speculation and prefetching techniques to lightweight, mixed hardware/software solutions for shared virtual memory. As a proof of concept, a new technique optimized for the system architecture at hand will be designed and implemented on the evaluation platform. Finally, an easy and systematic way to exchange information between the runtime/application layer and the underlying virtual memory implementation shall be identified to exploit the SVM solution in an optimal way, thereby improving overall system performance. Ultimately, the design resulting from this project shall lead to a scientific publication, be used to generate results for scientific publications, and/or be a basis for other people implementing further extensions.

Status: Completed

- Master thesis by Andreas Kurth

- Supervision: Pirmin Vogel, Bjoern Forsberg, Andrea Marongiu