Acceleration and Transprecision

From iis-projects

Latest revision as of 17:05, 24 November 2023

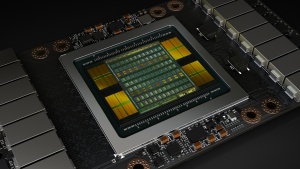

An NVIDIA Tesla V100 GP-GPU. This cutting-edge accelerator provides huge computational power on a [massive 800 mm² die](https://arstechnica.com/gadgets/2017/05/nvidia-tesla-v100-gpu-details/).

Google's Cloud TPU (Tensor Processing Unit). This machine learning accelerator can do one thing extremely well: multiply-accumulate operations.

Accelerators are the backbone of big data and scientific computing. While general-purpose processor architectures such as Intel's x86 provide good performance across a wide variety of applications, it is only since the advent of general-purpose GPUs that many computationally demanding tasks have become feasible. Because these GPUs support a much narrower set of operations, it is easier to optimize their architecture for efficiency. Such accelerators are not limited to the high-performance sector. In low-power computing, they allow complex tasks such as computer vision or cryptography to be performed under a very tight power budget. Without a dedicated accelerator, these tasks would not be feasible.

Who We Are

Francesco Conti

- fconti@iis.ee.ethz.ch

- ETZ J78

Luca Bertaccini

- lbertaccini@iis.ee.ethz.ch

- ETZ J78

Matteo Perotti

- mperotti@iis.ee.ethz.ch

- ETZ J85

Available Projects

- Extending our FPU with Internal High-Precision Accumulation (M)

- Low Precision Ara for ML

- Hardware Exploration of Shared-Exponent MiniFloats (M)

- Approximate Matrix Multiplication based Hardware Accelerator to achieve the next 10x in Energy Efficiency: Full System Integration

- Extended Verification for Ara

- Ibex: Tightly-Coupled Accelerators and ISA Extensions

- RVfplib

- Scalable Heterogeneous L1 Memory Interconnect for Smart Accelerator Coupling in Ultra-Low Power Multicores

Projects In Progress

- Fault-Tolerant Floating-Point Units (M)

- Virtual Memory Ara

- New RVV 1.0 Vector Instructions for Ara

- Big Data Analytics Benchmarks for Ara

- An all Standard-Cell Based Energy Efficient HW Accelerator for DSP and Deep Learning Applications

Completed Projects

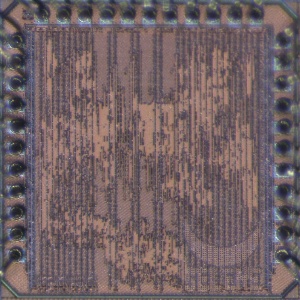

The Logarithmic Number Unit chip [Selene](http://asic.ethz.ch/2014/Selene.html).

- Integrating an Open-Source Double-Precision Floating-Point DivSqrt Unit into CVFPU (1S)

- Investigating the Cost of Special-Case Handling in Low-Precision Floating-Point Dot Product Units (1S)

- Optimizing the Pipeline in our Floating Point Architectures (1S)

- Streaming Integer Extensions for Snitch (M/1-2S)

- A Unified Compute Kernel Library for Snitch (1-2S)

- NVDLA meets PULP

- Hardware Accelerators for Lossless Quantized Deep Neural Networks

- Floating-Point Divide & Square Root Unit for Transprecision

- Low-Energy Cluster-Coupled Vector Coprocessor for Special-Purpose PULP Acceleration

- Design and Implementation of an Approximate Floating Point Unit