Difference between revisions of "Human Intranet"

From iis-projects

(→Related Projects) |

|||

| (66 intermediate revisions by 6 users not shown) | |||

| Line 1: | Line 1: | ||

| − | [[ | + | [[File:HI.png|thumb|right|450px]] |


=What is Human Intranet?= | =What is Human Intranet?= | ||

| − | |||

The world around us is getting a lot smarter quickly: virtually every single component of our daily living environment is being equipped with sensors, actuators, processing, and connection into a network that will soon count billions of nodes and trillions of sensors. These devices only interact with the human through the traditional input and output channels. Hence, they only indirectly communicate with our brain—through our five sense modalities—forming two separate computing systems: biological versus physical. It could be made a lot more effective if a direct high bandwidth link existed between the two systems, allowing them to truly collaborate with each other and to offer opportunities for enhanced functionality that would otherwise be hard to accomplish. The emergence of miniaturized sense, compute and actuate devices as well as interfaces that are form-fitted to the human body opens the door for a symbiotic convergence between biological function and physical computing. | The world around us is getting a lot smarter quickly: virtually every single component of our daily living environment is being equipped with sensors, actuators, processing, and connection into a network that will soon count billions of nodes and trillions of sensors. These devices only interact with the human through the traditional input and output channels. Hence, they only indirectly communicate with our brain—through our five sense modalities—forming two separate computing systems: biological versus physical. It could be made a lot more effective if a direct high bandwidth link existed between the two systems, allowing them to truly collaborate with each other and to offer opportunities for enhanced functionality that would otherwise be hard to accomplish. The emergence of miniaturized sense, compute and actuate devices as well as interfaces that are form-fitted to the human body opens the door for a symbiotic convergence between biological function and physical computing. | ||

Human Intranet is an open, scalable platform that seamlessly integrates an ever-increasing number of sensor, actuation, computation, storage, communication and energy nodes located on, in, or around the human body acting in symbiosis with the functions provided by the body itself. Human Intranet presents a system vision in which, for example, disease would be treated by chronically measuring biosignals deep in the body, or by providing targeted, therapeutic interventions that respond on demand and in situ. To gain a holistic view of a person’s health, these sensors and actuators must communicate and collaborate with each other. Most of such systems prototyped or envisioned today serve to address deficiencies in the human sensory or motor control systems due to birth defects, illnesses, or accidents (e.g., invasive brain-machine interfaces, cochlear implants, artificial retinas, etc.). While all these systems target defects, one can easily imagine that this could lead to many types of enhancement and/or enable direct interaction with the environment: to make us humans smarter! | Human Intranet is an open, scalable platform that seamlessly integrates an ever-increasing number of sensor, actuation, computation, storage, communication and energy nodes located on, in, or around the human body acting in symbiosis with the functions provided by the body itself. Human Intranet presents a system vision in which, for example, disease would be treated by chronically measuring biosignals deep in the body, or by providing targeted, therapeutic interventions that respond on demand and in situ. To gain a holistic view of a person’s health, these sensors and actuators must communicate and collaborate with each other. Most of such systems prototyped or envisioned today serve to address deficiencies in the human sensory or motor control systems due to birth defects, illnesses, or accidents (e.g., invasive brain-machine interfaces, cochlear implants, artificial retinas, etc.). 
While all these systems target defects, one can easily imagine that this could lead to many types of enhancement and/or enable direct interaction with the environment: to make us humans smarter! | ||

| − | Here, in our projects, we mainly focus on '''sensor, computation, communication, and emerging storage''' aspects to develop very efficient closed-loop sense-interpret-actuate systems, enabling distributed autonomous behavior. For example, to design the ''brain'' of our physical computing (i.e., the compute/interpret component), we rely on computing with ultra-wide words (e.g., 10,000 bits) that eases interfacing with various sensor modalities and actuators. This novel computing paradigm is called hyperdimensional (HD) computing that is inspired from the very size of the biological brain’s circuits: assuming 1 bit per synapse, the brain is made up of more than 24 billion of such ultra-wide words. You can watch some of our demos: | + | Here, in our projects, we mainly focus on '''sensor, computation, communication, and emerging storage''' aspects to develop very efficient closed-loop sense-interpret-actuate systems, enabling distributed autonomous behavior. |

| + | |||

| + | <!--For example, to design the ''brain'' of our physical computing (i.e., the compute/interpret component), we rely on computing with ultra-wide words (e.g., 10,000 bits) that eases interfacing with various sensor modalities and actuators. This novel computing paradigm is called hyperdimensional (HD) computing that is inspired from the very size of the biological brain’s circuits: assuming 1 bit per synapse, the brain is made up of more than 24 billion of such ultra-wide words. You can watch some of our demos: | ||

* [https://bwrc.eecs.berkeley.edu/sites/default/files/files/u2630/flexemg_v2_lq.mp4#t=2 Video1] | * [https://bwrc.eecs.berkeley.edu/sites/default/files/files/u2630/flexemg_v2_lq.mp4#t=2 Video1] | ||

* [https://www.youtube.com/watch?time_continue=9&v=vTQGMQ6QaJE Video2] | * [https://www.youtube.com/watch?time_continue=9&v=vTQGMQ6QaJE Video2] | ||

| Line 21: | Line 15: | ||

You can also find a collection of complemented projects with source codes/datasets here: | You can also find a collection of complemented projects with source codes/datasets here: | ||

* [https://github.com/HyperdimensionalComputing/collection Github link] | * [https://github.com/HyperdimensionalComputing/collection Github link] | ||

| − | + | --> | |

==Prerequisites and Focus== | ==Prerequisites and Focus== | ||

| − | If you are an M.S. student at the ETHZ, typically there is no prerequisite. You can come and talk to us and we adapt the projects based on your skills. The scope and focus of projects are wide. You can choose to work on: | + | If you are an B.S. or M.S. student at the ETHZ, typically there is no prerequisite. You can come and talk to us and we adapt the projects based on your skills. The scope and focus of projects are wide. You can choose to work on: |

| − | * '''Efficient hardware architectures in emerging technologies''' (e.g., [https://www.zurich.ibm.com/sto/memory/ the IBM computational memory]) | + | <!-- * '''Efficient hardware architectures in emerging technologies''' (e.g., [https://www.zurich.ibm.com/sto/memory/ the IBM computational memory])--> |

| − | * '''System-level design and testing''' | + | * '''Exploring new Human Intranet/IoT applications''' |

| + | * '''Algorithm design and optimizations''' (Python) | ||

| + | * '''System-level design and testing''' (Altium, C-programming) | ||

* '''Sensory interfaces''' (analog and digital) | * '''Sensory interfaces''' (analog and digital) | ||

| − | |||

| − | |||

| − | |||

| Line 40: | Line 33: | ||

===Useful Reading=== | ===Useful Reading=== | ||

*[https://ieeexplore.ieee.org/document/7030200/ The Human Intranet--Where Swarms and Humans Meet] | *[https://ieeexplore.ieee.org/document/7030200/ The Human Intranet--Where Swarms and Humans Meet] | ||

| − | + | *[https://ieeexplore.ieee.org/abstract/document/8490896 Efficient Biosignal Processing Using Hyperdimensional Computing: Network Templates for Combined Learning and Classification of ExG Signals] | |

| − | *[https://ieeexplore.ieee.org/document/ | + | *[https://iopscience.iop.org/article/10.1088/1741-2552/aab2f2/meta A review of classification algorithms for EEG-based brain–computer interfaces: a 10 year update] |

| − | *[https:// | ||

| − | |||

| − | |||

=Available Projects= | =Available Projects= | ||

Here, we provide a short description of the related projects for you to see the scope of our work. The directions and details of the projects can be adapted based on your interests and skills. Please do not hesitate to come and talk to us for more details. | Here, we provide a short description of the related projects for you to see the scope of our work. The directions and details of the projects can be adapted based on your interests and skills. Please do not hesitate to come and talk to us for more details. | ||

| − | = | + | ==Wearables for health and physiology== |

| + | [[File:Cardiorespiratory.JPG|thumb|right|200px]] | ||

| + | ===Short Description=== | ||

| + | In this research area, we develop wearable systems, algorithms, and applications for monitoring health- and physiological-related parameters in innovative ways. Examples include (but are not limited to): heart rate and respiration rate monitoring, blood pressure monitoring, bladder monitoring, drowsiness detection, monitoring of muscle contractions and identification of innervations, ... | ||

| + | |||

| + | For wearables based on ultrasound, see also the dedicated [[Digital_Medical_Ultrasound_Imaging | '''Ultrasound section''']] | ||

| + | |||

| + | ===Available Projects=== | ||

| + | <DynamicPageList> | ||

| + | category = Available | ||

| + | category = Digital | ||

| + | category = WearablesHealth | ||

| + | </DynamicPageList> | ||

| + | |||

| + | ==Brain-Machine Interfaces and wearables== | ||

<!-- [[File:BCI.png|thumb|center]] | <!-- [[File:BCI.png|thumb|center]] | ||

[[File:BCI-dryEEG.jpg|thumb|right]] --> | [[File:BCI-dryEEG.jpg|thumb|right]] --> | ||

[[File:Emotiv-epoc-14-channel-mobile-eeg.jpg|thumb|right|200px]] | [[File:Emotiv-epoc-14-channel-mobile-eeg.jpg|thumb|right|200px]] | ||

| + | [[File:In_ear_EEG.jpg|thumb|right|200px]] | ||

===Short Description=== | ===Short Description=== | ||

| − | Noninvasive | + | Noninvasive brain–machine interfaces (BMIs) and neuroprostheses aim to provide a communication and control channel based on the recognition of the subject’s intentions from spatiotemporal neural activity typically recorded by EEG electrodes. BMIs are a special kind of HMI, focused on the brain. What makes BMIs particularly challenging is their susceptibility to errors over time in the recognition of human intentions. |

| + | |||

| + | In these projects, our goal is to develop efficient and fast learning algorithms that replace traditional signal processing and classification methods by directly operating with raw data from electrodes. Furthermore, we aim to efficiently deploy those algorithms on tightly resource-limited devices (e.g., Microcontroller units) for near sensor classification using artificial intelligence. | ||

| − | + | *WATCH OUR DEMO: EEG-HEADBAND CONTROLLING A DRONE: https://www.youtube.com/watch?v=3-DysFptdRI | |

===Links=== | ===Links=== | ||

| + | * [https://iis-people.ee.ethz.ch/~herschmi/EdgeDL20.pdf Q-EEGNet: an Energy-Efficient 8-bit Quantized Parallel EEGNet Implementation for Edge Motor-Imagery Brain–Machine Interfaces] | ||

| + | * [https://iis-people.ee.ethz.ch/~herschmi/MEMEA20.pdf An Accurate EEGNet-based Motor-Imagery Brain–Computer Interface for Low-Power Edge Computing] | ||

* [https://iis-people.ee.ethz.ch/~arahimi/papers/EUSIPCO18.pdf Fast and Accurate Multiclass Inference for Motor Imagery BCIs Using Large Multiscale Temporal and Spectral Features] | * [https://iis-people.ee.ethz.ch/~arahimi/papers/EUSIPCO18.pdf Fast and Accurate Multiclass Inference for Motor Imagery BCIs Using Large Multiscale Temporal and Spectral Features] | ||

* [https://iis-people.ee.ethz.ch/~arahimi/papers/MONET17.pdf Hyperdimensional Computing for Blind and One-Shot Classification of EEG Error-Related Potentials] | * [https://iis-people.ee.ethz.ch/~arahimi/papers/MONET17.pdf Hyperdimensional Computing for Blind and One-Shot Classification of EEG Error-Related Potentials] | ||

* [https://arxiv.org/abs/1812.05705 Exploring Embedding Methods in Binary Hyperdimensional Computing: A Case Study for Motor-Imagery based Brain-Computer Interfaces] | * [https://arxiv.org/abs/1812.05705 Exploring Embedding Methods in Binary Hyperdimensional Computing: A Case Study for Motor-Imagery based Brain-Computer Interfaces] | ||

| − | + | ===Available Projects=== | |

| − | === | ||

<DynamicPageList> | <DynamicPageList> | ||

category = Available | category = Available | ||

category = Digital | category = Digital | ||

category = BCI | category = BCI | ||

| − | |||

</DynamicPageList> | </DynamicPageList> | ||

| − | =Epilepsy Seizure Detection Device= | + | ==Epilepsy Seizure Detection Device== |

[[File:Non-EEG Seizure.jpg|border|text-top|300px]] | [[File:Non-EEG Seizure.jpg|border|text-top|300px]] | ||

[[File:NeuroPace.jpg|border|text-top|400px]] | [[File:NeuroPace.jpg|border|text-top|400px]] | ||

<!-- Seizure-prediction.png --> | <!-- Seizure-prediction.png --> | ||

===Short Description=== | ===Short Description=== | ||

| − | + | Epilepsy is a brain disease that affects more than 50 million people worldwide. Conventional treatments are primarily pharmacological, but they can require surgery or invasive neurostimulation in the case of drug-resistant subjects. In these cases, personalized patient treatments are necessary and can be achieved with the help of long-term recording of brain activity. In this context, seizure detection systems hold promise for improving the quality of life for patients with epilepsy, providing non-stigmatizing and reliable continuous monitoring during real-life conditions. In this project, our goal is to develop efficient techniques for EEG as well as non-EEG signals to detect an upcoming seizure in an ultra-low-power device. | |

| − | In this project, | + | In this project, our goal is to develop efficient techniques for EEG as well as non-EEG signals to detect an upcoming seizure in an ultra-low-power device. This covers a wide range of analog and digital techniques. |

===Links=== | ===Links=== | ||

| Line 87: | Line 94: | ||

* [https://www.youtube.com/watch?time_continue=87&v=ouyPXkEud40 Controlling tinnitus with neurofeedback] | * [https://www.youtube.com/watch?time_continue=87&v=ouyPXkEud40 Controlling tinnitus with neurofeedback] | ||

| − | === | + | |

| + | |||

| + | ===Available Projects=== | ||

<DynamicPageList> | <DynamicPageList> | ||

category = Available | category = Available | ||

category = Digital | category = Digital | ||

category = Epilepsy | category = Epilepsy | ||

| + | </DynamicPageList> | ||

| + | |||

| + | ==Foundation models and LLMs for Health== | ||

| + | [[File:EEG_ECG.png|border|text-top|400px]] | ||

| + | [[File:LLM.png|border|text-top|400px]] | ||

| + | <!-- Seizure-prediction.png --> | ||

| + | ===Short Description=== | ||

| + | Incorporating Foundation Models and Large Language Models (LLMs) within artificial intelligence is gaining significant traction, particularly due to their potential applications in the health sector. This project is dedicated to developing sophisticated methodologies for utilizing foundation models and LLMs in health-related applications, specifically analyzing electroencephalogram (EEG) brain signals. | ||

| + | |||

| + | In healthcare and biomedical research, implementing advanced computational models, notably Foundation Models and Large Language Models (LLMs), revolutionizes the understanding and interpretation of intricate biosignals. We stand at the vanguard of this revolutionary change, delving into the capabilities of these models for the analysis and interpretation of critical biosignals, including electroencephalograms (EEG) and electrocardiograms (ECG). | ||

| + | |||

| + | Foundation Models, encompassing a spectrum of robust, pre-trained models, are transforming our ability to process and interpret large datasets. Initially trained on extensive and diverse datasets, these models are adaptable for specific tasks, offering remarkable accuracy and efficiency. This adaptability renders them particularly beneficial for biosignal analysis, where the intricacies of EEG and ECG data demand both precision and contextual understanding. | ||

| + | |||

| + | As a subset of Foundation Models, LLMs have demonstrated efficacy in processing and generating human language. At IIS, we are pioneering the application of LLMs in the domain of biosignal interpretation, extending beyond textual data. This entails training the models to interpret the 'language' of biosignals, translating complex patterns into actionable insights. | ||

| + | |||

| + | Our emphasis on EEG and ECG signals is motivated by these biosignals' profound insights into human health. EEGs, capturing brain activity, and ECGs, monitoring heart rhythms, are instrumental in diagnosing and managing various health conditions. By leveraging Foundation Models and LLMs, our objective is to refine diagnostic accuracy, predict health outcomes, and customize patient care. | ||

| + | IIS invites Master's students to immerse themselves in this pioneering area. Our projects offer avenues to engage with state-of-the-art technologies, apply them to real-world health challenges, and contribute to shaping a future where healthcare is more predictive, preventive, and personalized. We encourage your participation in this exhilarating endeavor to redefine the confluence of healthcare and technology. | ||

| + | |||

| + | ===Links=== | ||

| + | * [https://braingpt.org/ BrainGPT] | ||

| + | |||

| + | |||

| + | ===Available Projects=== | ||

| + | <DynamicPageList> | ||

| + | category = Available | ||

| + | category = Digital | ||

| + | category = HealthGPT | ||

</DynamicPageList> | </DynamicPageList> | ||

| + | |||

| + | <!-- | ||

=Extremely Resilient Hyperdimensional Processor= | =Extremely Resilient Hyperdimensional Processor= | ||

[[File:BrainChip.jpg|thumb|left]] | [[File:BrainChip.jpg|thumb|left]] | ||

| Line 110: | Line 148: | ||

=Flexible High-Density Sensors for Hand Gesture Recognition= | =Flexible High-Density Sensors for Hand Gesture Recognition= | ||

| − | + | [[File:Hyperdimensional_EMG.png|thumb|center]] | |

[[File:FlexEMG.png|thumb|right|500px]] | [[File:FlexEMG.png|thumb|right|500px]] | ||

| − | |||

===Short Description=== | ===Short Description=== | ||

| Line 140: | Line 177: | ||

* [https://www.tu-chemnitz.de/etit/proaut/publications/IROS2016_neubert.pdf Learning Vector Symbolic Architectures for Reactive Robot Behaviours] | * [https://www.tu-chemnitz.de/etit/proaut/publications/IROS2016_neubert.pdf Learning Vector Symbolic Architectures for Reactive Robot Behaviours] | ||

* [https://www.aaai.org/ocs/index.php/WS/AAAIW13/paper/download/7075/6578 Learning Behavior Hierarchies via High-Dimensional Sensor Projection (paper)] | * [https://www.aaai.org/ocs/index.php/WS/AAAIW13/paper/download/7075/6578 Learning Behavior Hierarchies via High-Dimensional Sensor Projection (paper)] | ||

| + | ---> | ||

| − | = | + | = Projects in Progress= |


<DynamicPageList> | <DynamicPageList> | ||

| − | + | suppresserrors = true |

| − | category = | + | category = In progress |

category = Human Intranet | category = Human Intranet | ||

| − | |||

</DynamicPageList> | </DynamicPageList> | ||

| − | |||

=Completed Projects= | =Completed Projects= | ||

| Line 182: | Line 195: | ||

</DynamicPageList> | </DynamicPageList> | ||

| − | =Where to find us= | + | =Where to find us= |

| − | + | {| | |

| − | + | | style="padding: 10px" | [[File:Thorir.jpg|frameless|left|100px]] | |

| − | + | | style="padding: 10px" | [[File:SebiFrey.jpg|frameless|left|100px]] | |

| − | + | | style="padding: 10px" | | |

| − | + | | style="padding: 10px" | [[File:Andrea_Cossettini.jpg|frameless|left|100px]] | |

| − | + | |- | |

| + | | [[:User:Thoriri | Thorir Mar Ingolfsson]] | ||

| + | | Sebastian Frey | ||

| + | | [[:User:Xiaywang | Dr. Xiaying Wang]] | ||

| + | | [[:User:Cosandre | Dr. Andrea Cossettini]] | ||

| + | |- | ||

| + | | '''Office''': OAT U21 | ||

| + | | '''Office''': ETZ J69.2 | ||

| + | | '''Office''': OAT U24 / ETZ J68.2 | ||

| + | | '''Office''': OAT U27 / ETZ J69.2 | ||

| + | |- | ||

| + | | '''e-mail''': [mailto:thoriri@iis.ee.ethz.ch thoriri@iis.ee.ethz.ch] | ||

| + | | '''e-mail''': [mailto:sefrey@iis.ee.ethz.ch sefrey@iis.ee.ethz.ch] | ||

| + | | '''e-mail''': [mailto:xiaywang@iis.ee.ethz.ch xiaywang@iis.ee.ethz.ch] | ||

| + | | '''e-mail''': [mailto:cossettini.andrea@iis.ee.ethz.ch cossettini.andrea@iis.ee.ethz.ch] | ||

| + | |} | ||

| + | |||

| + | |||

| + | |||

| + | [[Category:Digital]] | ||

| + | [[Category:Human Intranet]] | ||

| + | [[Category:ASIC]] | ||

| + | [[Category:FPGA]] | ||

| + | [[Category:Semester Thesis]] | ||

| + | [[Category:Master Thesis]] | ||

Latest revision as of 20:09, 10 March 2024


What is Human Intranet?

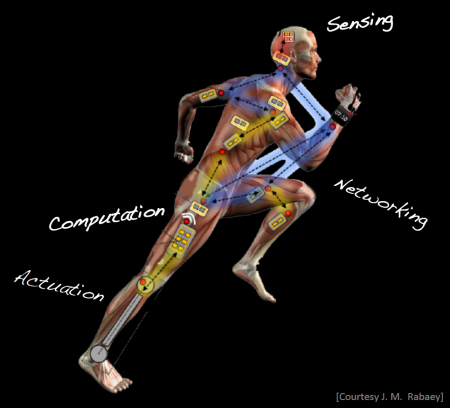

The world around us is quickly getting a lot smarter: virtually every component of our daily living environment is being equipped with sensors, actuators, processing, and a connection to a network that will soon count billions of nodes and trillions of sensors. These devices interact with humans only through the traditional input and output channels. Hence, they communicate with our brain only indirectly, through our five sense modalities, forming two separate computing systems: biological versus physical. This interaction could be made far more effective if a direct high-bandwidth link existed between the two systems, allowing them to truly collaborate and to offer enhanced functionality that would otherwise be hard to achieve. The emergence of miniaturized sense, compute, and actuate devices, as well as interfaces that are form-fitted to the human body, opens the door to a symbiotic convergence between biological function and physical computing.

Human Intranet is an open, scalable platform that seamlessly integrates an ever-increasing number of sensor, actuation, computation, storage, communication, and energy nodes located on, in, or around the human body, acting in symbiosis with the functions provided by the body itself. Human Intranet presents a system vision in which, for example, disease would be treated by chronically measuring biosignals deep in the body, or by providing targeted therapeutic interventions that respond on demand and in situ. To gain a holistic view of a person’s health, these sensors and actuators must communicate and collaborate with each other. Most such systems prototyped or envisioned today address deficiencies in the human sensory or motor control systems due to birth defects, illnesses, or accidents (e.g., invasive brain-machine interfaces, cochlear implants, artificial retinas). While all these systems target defects, one can easily imagine that the same technology could lead to many types of enhancement and/or enable direct interaction with the environment: to make us humans smarter!

Here, in our projects, we mainly focus on sensor, computation, communication, and emerging storage aspects to develop very efficient closed-loop sense-interpret-actuate systems, enabling distributed autonomous behavior.

Prerequisites and Focus

If you are a B.S. or M.S. student at ETHZ, there is typically no prerequisite. You can come and talk to us, and we will adapt the projects to your skills. The scope and focus of the projects are wide. You can choose to work on:

- Exploring new Human Intranet/IoT applications

- Algorithm design and optimizations (Python)

- System-level design and testing (Altium, C-programming)

- Sensory interfaces (analog and digital)

Useful Reading

- The Human Intranet--Where Swarms and Humans Meet

- Efficient Biosignal Processing Using Hyperdimensional Computing: Network Templates for Combined Learning and Classification of ExG Signals

- A review of classification algorithms for EEG-based brain–computer interfaces: a 10 year update

Available Projects

Here, we provide a short description of the related projects for you to see the scope of our work. The directions and details of the projects can be adapted based on your interests and skills. Please do not hesitate to come and talk to us for more details.

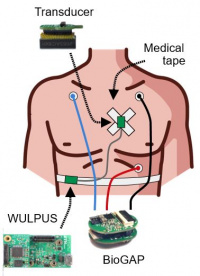

Wearables for health and physiology

Short Description

In this research area, we develop wearable systems, algorithms, and applications for monitoring health- and physiology-related parameters in innovative ways. Examples include (but are not limited to) heart rate and respiration rate monitoring, blood pressure monitoring, bladder monitoring, drowsiness detection, and monitoring of muscle contractions and identification of innervations.

For wearables based on ultrasound, see also the dedicated Ultrasound section.

Available Projects

- EEG-based drowsiness detection

- In-ear EEG signal acquisition

- EEG earbud

- Advanced EEG glasses

- Design of combined Ultrasound and PPG systems

- Wearable Ultrasound for Artery monitoring

- Machine Learning for extracting Muscle features from Ultrasound raw data

Brain-Machine Interfaces and wearables

Short Description

Noninvasive brain–machine interfaces (BMIs) and neuroprostheses aim to provide a communication and control channel based on the recognition of the subject’s intentions from spatiotemporal neural activity, typically recorded by EEG electrodes. BMIs are a special kind of human–machine interface (HMI), focused on the brain. What makes BMIs particularly challenging is their susceptibility to errors over time in the recognition of human intentions.

In these projects, our goal is to develop efficient and fast learning algorithms that replace traditional signal processing and classification methods by operating directly on raw data from the electrodes. Furthermore, we aim to deploy those algorithms efficiently on tightly resource-limited devices (e.g., microcontroller units) for near-sensor classification using artificial intelligence.

- WATCH OUR DEMO: EEG-HEADBAND CONTROLLING A DRONE: https://www.youtube.com/watch?v=3-DysFptdRI
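
Deploying a learned model on a microcontroller usually means shrinking its numeric precision. One of the papers in the Links list below has "8-bit Quantized" in its title. As a rough illustration only, the following sketch shows a generic symmetric per-tensor 8-bit quantization of a list of weights. It is not taken from that paper or from any project code; the function names `quantize_int8` and `dequantize` are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to signed 8-bit integers.

    Illustrative helper (hypothetical name), not from any project codebase.
    Returns the integer codes and the scale needed to map them back.
    """
    max_abs = max((abs(v) for v in values), default=0.0)
    # One scale for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the symmetric range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate float values."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    codes, scale = quantize_int8(weights)
    print(codes, scale)
    print(dequantize(codes, scale))
```

Each weight then occupies one byte instead of four, at the cost of a rounding error of at most half a quantization step per value. Real deployments typically also quantize activations and may use per-channel scales.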

Links

- Q-EEGNet: an Energy-Efficient 8-bit Quantized Parallel EEGNet Implementation for Edge Motor-Imagery Brain–Machine Interfaces

- An Accurate EEGNet-based Motor-Imagery Brain–Computer Interface for Low-Power Edge Computing

- Fast and Accurate Multiclass Inference for Motor Imagery BCIs Using Large Multiscale Temporal and Spectral Features

- Hyperdimensional Computing for Blind and One-Shot Classification of EEG Error-Related Potentials

- Exploring Embedding Methods in Binary Hyperdimensional Computing: A Case Study for Motor-Imagery based Brain-Computer Interfaces

Available Projects

- Exploratory Development of a Unified Foundational Model for Multi Biosignal Analysis

- Deep Learning Based Anomaly Detection in ECG Signals Using Foundation Models

- Pretraining Foundational Models for EEG Signal Analysis Using Open Source Large Scale Datasets

- EEG-based drowsiness detection

- In-ear EEG signal acquisition

- EEG earbud

- Advanced EEG glasses

- Predict eye movement through brain activity

- BCI-controlled Drone

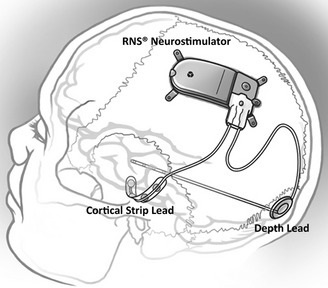

Epilepsy Seizure Detection Device

Short Description

Epilepsy is a brain disease that affects more than 50 million people worldwide. Conventional treatments are primarily pharmacological, but they can require surgery or invasive neurostimulation in the case of drug-resistant subjects. In these cases, personalized patient treatments are necessary and can be achieved with the help of long-term recording of brain activity. In this context, seizure detection systems hold promise for improving the quality of life for patients with epilepsy, providing non-stigmatizing and reliable continuous monitoring during real-life conditions. In this project, our goal is to develop efficient techniques for EEG as well as non-EEG signals to detect an upcoming seizure in an ultra-low-power device. This covers a wide range of analog and digital techniques.
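
To give a feel for what a lightweight detection technique can look like, here is a minimal sketch based on the line-length feature, a simple measure of signal activity that is common in the seizure detection literature. Nothing in this page states that the projects use it. The window size, the median baseline, the threshold factor, and all function names are assumptions chosen for illustration.

```python
def line_length(window):
    """Sum of absolute differences between consecutive samples."""
    return sum(abs(b - a) for a, b in zip(window, window[1:]))


def sliding_line_length(signal, win, step):
    """Line length of each window of `win` samples, advancing by `step`."""
    return [
        line_length(signal[i:i + win])
        for i in range(0, len(signal) - win + 1, step)
    ]


def flag_windows(signal, win, step, factor=3.0):
    """Flag windows whose line length exceeds `factor` times the median.

    Hypothetical rule for illustration: the median over all windows acts
    as a crude baseline of normal activity.
    """
    feats = sliding_line_length(signal, win, step)
    if not feats:
        return []
    baseline = sorted(feats)[len(feats) // 2]
    return [f > factor * baseline for f in feats]


if __name__ == "__main__":
    # Synthetic trace: low-amplitude activity with one high-amplitude burst.
    quiet = [(-1) ** i * 1.0 for i in range(40)]
    burst = [(-1) ** i * 10.0 for i in range(10)]
    tail = [(-1) ** i * 1.0 for i in range(10)]
    print(flag_windows(quiet + burst + tail, win=10, step=10))
```

The feature needs only subtractions, absolute values, and additions per sample, which is why it suits ultra-low-power hardware. A practical detector would compute the baseline causally from past data and would be validated on annotated recordings.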

Links

- The SWEC-ETHZ iEEG Database and Algorithms

- Epilepsy monitoring and seizure forecasts at Wyss Center

- Controlling tinnitus with neurofeedback

Available Projects

- Advanced EEG glasses

- Self Aware Epilepsy Monitoring

- EEG artifact detection with machine learning

- EEG artifact detection for epilepsy monitoring

Foundation models and LLMs for Health

Short Description

The use of Foundation Models and Large Language Models (LLMs) in artificial intelligence is gaining significant traction, particularly because of their potential applications in the health sector. This project is dedicated to developing methodologies for using foundation models and LLMs in health-related applications, specifically the analysis of electroencephalogram (EEG) brain signals.

In healthcare and biomedical research, implementing advanced computational models, notably Foundation Models and Large Language Models (LLMs), revolutionizes the understanding and interpretation of intricate biosignals. We stand at the vanguard of this revolutionary change, delving into the capabilities of these models for the analysis and interpretation of critical biosignals, including electroencephalograms (EEG) and electrocardiograms (ECG).

Foundation Models, encompassing a spectrum of robust, pre-trained models, are transforming our ability to process and interpret large datasets. Initially trained on extensive and diverse datasets, these models are adaptable for specific tasks, offering remarkable accuracy and efficiency. This adaptability renders them particularly beneficial for biosignal analysis, where the intricacies of EEG and ECG data demand both precision and contextual understanding.

As a subset of Foundation Models, LLMs have demonstrated efficacy in processing and generating human language. At IIS, we are pioneering the application of LLMs in the domain of biosignal interpretation, extending beyond textual data. This entails training the models to interpret the 'language' of biosignals, translating complex patterns into actionable insights.

Our emphasis on EEG and ECG signals is motivated by the profound insights these biosignals provide into human health. EEGs, capturing brain activity, and ECGs, monitoring heart rhythms, are instrumental in diagnosing and managing various health conditions. By leveraging Foundation Models and LLMs, our objective is to refine diagnostic accuracy, predict health outcomes, and customize patient care.

IIS invites Master's students to immerse themselves in this pioneering area. Our projects offer avenues to engage with state-of-the-art technologies, apply them to real-world health challenges, and contribute to shaping a future where healthcare is more predictive, preventive, and personalized. We encourage your participation in this exhilarating endeavor to redefine the confluence of healthcare and technology.

Links

- BrainGPT

Available Projects

- Exploratory Development of a Unified Foundational Model for Multi Biosignal Analysis

- Deep Learning Based Anomaly Detection in ECG Signals Using Foundation Models

- Pretraining Foundational Models for EEG Signal Analysis Using Open Source Large Scale Datasets

Projects in Progress

No pages meet these criteria.

Completed Projects

These are projects that were recently completed:

- Ultrasound-EMG combined hand gesture recognition

- Smart e-glasses for concealed recording of EEG signals

- Wireless EEG Acquisition and Processing

- Ultrasound based hand gesture recognition

- Design of combined Ultrasound and Electromyography systems

- Ultra low power wearable ultrasound probe

- Hardware Constrained Neural Architechture Search

- Memory Augmented Neural Networks in Brain-Computer Interfaces

- Low Latency Brain-Machine Interfaces

- Deep Convolutional Autoencoder for iEEG Signals

- TCNs vs. LSTMs for Embedded Platforms

- An Energy Efficient Brain-Computer Interface using Mr.Wolf

- Exploring Algorithms for Early Seizure Detection

- Improving Resiliency of Hyperdimensional Computing

- Toward Superposition of Brain-Computer Interface Models

- FPGA Optimizations of Dense Binary Hyperdimensional Computing

- Fast and Accurate Multiclass Inference for Brain–Computer Interfaces

Where to find us

| Thorir Mar Ingolfsson | Sebastian Frey | Dr. Xiaying Wang | Dr. Andrea Cossettini |
| --- | --- | --- | --- |
| Office: OAT U21 | Office: ETZ J69.2 | Office: OAT U24 / ETZ J68.2 | Office: OAT U27 / ETZ J69.2 |
| e-mail: thoriri@iis.ee.ethz.ch | e-mail: sefrey@iis.ee.ethz.ch | e-mail: xiaywang@iis.ee.ethz.ch | e-mail: cossettini.andrea@iis.ee.ethz.ch |