Tiny CNNs for Ultra-Efficient Object Detection on PULP

Introduction

At the Integrated Systems Laboratory (IIS), we have spent the past few years working on techniques for smart data analytics in ultra-low-power (ULP) sensors across the entire technology stack. This spans hardware (e.g., the PULP system) and software running on microcontrollers. In many cases we use convolutional neural networks (CNNs) as the algorithmic “tool” to extract semantic information from raw data streams. This greatly reduces the amount of data that a ULP sensor node must collect and send to a higher-level computing device (e.g., a smartphone or the cloud). Platforms such as PULP are deeply embedded, so realistic applications must combine three things: a moderate computational workload, the smallest possible memory footprint, and accuracy close to the state of the art. Relatively small CNNs that match the accuracy of much larger networks have recently been proposed. For example, SqueezeNet reaches AlexNet-level accuracy with 50x fewer parameters [Iandola2016]. Such networks are a good match for this objective.

Project description

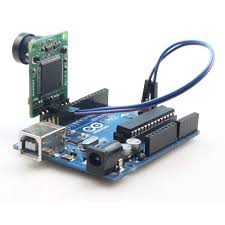

The goal of this project is to port a small but powerful convolutional neural network, such as SqueezeNet [Iandola2016] or TinyDarkNet [1], to PULP. The result should be a final demonstrator based on the Fulmine board [2] with an attached camera. The project consists of several subtasks:

1. Evaluate existing small CNN models from the literature, such as SqueezeNet and TinyDarkNet, with particular attention to memory footprint constraints. Select the most promising one for implementation on PULP.

2. Implement and test the selected CNN on PULP. You will be able to use an existing CNN library as a starting point, but you will most likely need to extend it significantly.

3. Build a physical demonstrator using the ArduCam camera module [3] and the Fulmine board to test the developed CNN model online.

The main constraint for defining and training the CNN topology will be keeping the overall model size low. The network must fit on a highly memory-constrained edge node in an IoT device, such as PULP or a microcontroller unit. To this end, you will have access to several reduced-precision training techniques developed at IIS.

Required Skills

To work on this project, you will need:

- familiarity with embedded C programming and embedded / microcontroller systems

- basic familiarity with a scripting language used for deep learning (e.g., Python or Lua) can be useful

- a lot of patience!

If you want to work on this project but think that you do not match some of the required skills, we can give you some preliminary exercises to help you fill the gap.

Status: Available

- Supervision: Francesco Conti

Professor

Practical Details

Meetings & Presentations

The students and advisor(s) agree on weekly meetings to discuss all relevant decisions and decide on how to proceed. Of course, additional meetings can be organized to address urgent issues.

Around the middle of the project, there is a design review where senior members of the lab review your work. Bring all the relevant information, such as preliminary specifications, block diagrams, synthesis reports, and testing strategy. The review makes sure everything is on track and decides whether further support is necessary. The reviewers also make the final decision on whether the chip is actually manufactured (there is no reason to worry if the project is on track). They also decide whether more chip area, a different package, etc. is provided. For more details, refer to [4].

At the end of the project, you have to present and defend your work in a 15-minute presentation followed by 5 minutes of discussion as part of the IIS colloquium.

Literature

- [Iandola2016] F. N. Iandola et al., “SqueezeNet: AlexNet-level accuracy with 50x fewer parameters and <0.5MB model size,” 2016. [5]

Links

- The EDA wiki with lots of information on the ETHZ ASIC design flow (internal only) [6]

- The IIS/DZ coding guidelines [7]