Accurate deep learning inference using computational memory


Latest revision as of 12:51, 17 April 2020

Short Description

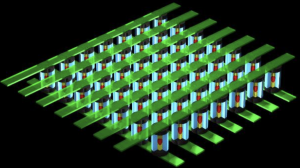

For decades, conventional computers based on the von Neumann architecture have performed computation by repeatedly transferring data between their processing and memory units, which are physically separated. As computation becomes increasingly data-centric and the scalability limits of performance and power are being reached, researchers are exploring alternative computing paradigms in which computation and storage are collocated. A fascinating new approach is computational memory, in which the physics of nanoscale memory devices is used to perform certain computational tasks within the memory unit itself, in a non-von Neumann manner.

Computational memory is finding applications in a variety of areas such as machine learning and signal processing [1]. Most importantly, it is very appealing for building energy-efficient deep learning inference hardware, in which the neural network layers are encoded in crossbar arrays of memory devices [2]. However, several challenges need to be overcome at both the hardware and algorithmic levels to realize reliable and accurate inference engines based on computational memory.
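To make the idea of encoding a layer in a crossbar more concrete, here is a minimal sketch in plain Python. It assumes that each weight is stored as the difference of two non-negative device conductances and that programming a device adds Gaussian noise. The function names, the noise model and its magnitude are illustrative assumptions and are not taken from [2].

```python
import random


def program_crossbar(weights, g_max=1.0, noise_std=0.02, rng=None):
    """Map a weight matrix onto two conductance arrays (hypothetical model).

    Each weight w is scaled into [-g_max, g_max] and stored as
    G_plus - G_minus. Additive Gaussian noise stands in for device
    programming errors; conductances are clipped at zero.
    """
    rng = rng or random.Random(0)
    w_max = max(abs(w) for row in weights for w in row) or 1.0
    scale = g_max / w_max
    g_plus, g_minus = [], []
    for row in weights:
        gp_row, gm_row = [], []
        for w in row:
            target = w * scale
            gp = max(target, 0.0) + rng.gauss(0.0, noise_std)
            gm = max(-target, 0.0) + rng.gauss(0.0, noise_std)
            gp_row.append(max(gp, 0.0))
            gm_row.append(max(gm, 0.0))
        g_plus.append(gp_row)
        g_minus.append(gm_row)
    return g_plus, g_minus, scale


def crossbar_matvec(g_plus, g_minus, scale, x):
    """Matrix-vector product as a crossbar would compute it.

    Inputs act as voltages, each device contributes a current
    (conductance * voltage), and the currents are summed per output line.
    """
    return [
        sum((gp - gm) * xi for gp, gm, xi in zip(gp_row, gm_row, x)) / scale
        for gp_row, gm_row in zip(g_plus, g_minus)
    ]


def ideal_matvec(weights, x):
    """Reference result computed with exact weights."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]
```

Comparing `crossbar_matvec` with `ideal_matvec` for increasing `noise_std` gives a rough feel for how device imperfections perturb a layer's output, which is one reason why accuracy is a central challenge for this kind of hardware.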

We are inviting applications from students to conduct their Master's thesis or an internship project at IBM Research – Zurich on this exciting new topic. The work could span from low-level hardware experiments on phase-change memory chips comprising more than 1 million devices to high-level algorithmic development in a deep learning framework such as TensorFlow or PyTorch. It also involves interaction with several researchers across IBM Research who focus on various aspects of the project. The ideal candidate should have a multi-disciplinary background, strong mathematical aptitude and good programming skills. Prior knowledge of emerging memory technologies such as phase-change memory is a bonus but not necessary.

Status: Available

- Looking for 1-2 Master's students

- Thesis will be at IBM Zurich in Rüschlikon

- Contact (at ETH Zurich): Frank K. Gurkaynak

- Contact (at IBM): Abu Sebastian

- Contact (at IBM): Manuel Le Gallo

Character

- 20% Theory

- 40% Hardware experiments

- 40% Programming

Professor

Detailed Task Description

Goals

Practical Details

Results

Links

- [1] A. Sebastian, M. Le Gallo, R. Khaddam-Aljameh et al. Memory devices and applications for in-memory computing. Nature Nanotechnology (2020). https://doi.org/10.1038/s41565-020-0655-z

- [2] V. Joshi, M. Le Gallo, S. Haefeli et al. Accurate deep learning inference using computational phase-change memory. Nature Communications (2020). Pre-print at https://arxiv.org/abs/1906.03138

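- [IBM www page on Memory & cognitive technologies](https://www.zurich.ibm.com/sto/memory/carbon.html)

- [Abu Sebastian Homepage](http://researcher.watson.ibm.com/researcher/view.php?person=zurich-ASE)

- [Manuel Le Gallo Homepage](http://researcher.watson.ibm.com/researcher/view.php?person=zurich-ANU)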