Energy-Efficient Brain-Inspired Hyperdimensional Computing

From iis-projects

Revision as of 15:18, 24 June 2017

Short Description

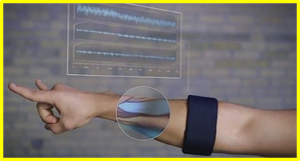

The way the brain works suggests that, rather than computing with the numbers we are used to, computing with hypervectors (high-dimensional vectors, e.g., 10,000 bits) is more efficient. HD computing offers a general and scalable model of computing as well as a well-defined set of arithmetic operations that enable fast, one-shot learning (no back-propagation is needed, unlike in neural networks). Furthermore, it is memory-centric with embarrassingly parallel operations and is extremely robust against most failure mechanisms and noise. It has been applied successfully to a variety of tasks, such as language recognition, text classification, biosignal processing (EMG/EEG), scene reasoning, and analogical reasoning.

Hypervectors are high-dimensional (e.g., 10,000 dimensions) and (pseudo)random, with independent and identically distributed components, and they are holographically distributed (i.e., not microcoded). They can use various codings (dense or sparse, bipolar or binary) and can be combined using arithmetic operations such as multiplication, addition, and permutation. Hypervectors can be compared for similarity using distance metrics.
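The operations named above can be illustrated with a minimal software sketch. It assumes dense binary hypervectors stored as Python integers, with XOR as multiplication, a component-wise majority vote as addition, cyclic rotation as permutation, and normalized Hamming distance as the similarity metric. These are common choices for binary hypervectors, not necessarily the ones used in this project, and all function names are hypothetical.

```python
import random

D = 10_000  # dimensionality, matching the 10,000-bit example in the text

def random_hv(rng):
    """Dense binary hypervector with i.i.d. bits, stored as a Python int."""
    return rng.getrandbits(D)

def bind(a, b):
    """Multiplication for binary hypervectors: component-wise XOR."""
    return a ^ b

def permute(a, shift=1):
    """Permutation: cyclic rotation of the D bits by `shift` positions."""
    shift %= D
    mask = (1 << D) - 1
    return ((a << shift) | (a >> (D - shift))) & mask

def bundle(hvs):
    """Addition: component-wise majority vote (ties resolve to 0, so prefer an odd count)."""
    threshold = len(hvs) / 2
    result = 0
    for i in range(D):
        ones = sum((hv >> i) & 1 for hv in hvs)
        if ones > threshold:
            result |= 1 << i
    return result

def hamming(a, b):
    """Normalized Hamming distance: 0.0 for identical vectors, about 0.5 for unrelated ones."""
    return bin(a ^ b).count("1") / D

rng = random.Random(0)
a, b, c = random_hv(rng), random_hv(rng), random_hv(rng)
bound = bind(a, b)          # dissimilar to both a and b
summed = bundle([a, b, c])  # remains similar to each of a, b, and c
rotated = permute(a)        # dissimilar to a
```

In this sketch, binding and permutation produce vectors that are nearly orthogonal to their inputs, while bundling produces a vector that stays close to each input. Binding with XOR is also its own inverse, so `bind(bound, b)` recovers `a`.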

In this project, your goal is to develop an RTL implementation of HD computing for an EMG-based hand gesture recognition system that learns quickly while consuming much less power than existing implementations.
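As a rough software reference for what such a system computes, the sketch below shows one-shot learning and classification with binary hypervectors. The encoder is an assumption made for illustration: each channel is bound (XOR) to its quantized amplitude level and the results are bundled by majority vote. The channel count, level count, gesture labels, and sample values are all hypothetical and do not come from the project description.

```python
import random

D = 10_000        # hypervector dimensionality (example value from the text)
NUM_CHANNELS = 5  # hypothetical electrode count (odd, so majority votes never tie)
NUM_LEVELS = 4    # hypothetical number of quantized amplitude levels

rng = random.Random(42)
# Item memories: one random hypervector per channel and per amplitude level.
CHANNEL_HV = [rng.getrandbits(D) for _ in range(NUM_CHANNELS)]
LEVEL_HV = [rng.getrandbits(D) for _ in range(NUM_LEVELS)]

def majority(hvs):
    """Bundle hypervectors with a component-wise majority vote (ties resolve to 0)."""
    threshold = len(hvs) / 2
    result = 0
    for i in range(D):
        ones = sum((hv >> i) & 1 for hv in hvs)
        if ones > threshold:
            result |= 1 << i
    return result

def encode(sample):
    """Bind each channel to its quantized level (XOR), then bundle across channels."""
    return majority([CHANNEL_HV[ch] ^ LEVEL_HV[lvl] for ch, lvl in enumerate(sample)])

def hamming(a, b):
    """Normalized Hamming distance between two hypervectors."""
    return bin(a ^ b).count("1") / D

def train(examples):
    """Map {label: [sample, ...]} to {label: prototype}; a single sample per label suffices."""
    return {label: majority([encode(s) for s in samples])
            for label, samples in examples.items()}

def classify(prototypes, sample):
    """Return the label whose prototype is nearest to the encoded sample."""
    query = encode(sample)
    return min(prototypes, key=lambda label: hamming(prototypes[label], query))

# One training sample per gesture (one-shot); each sample holds one level per channel.
prototypes = train({"fist": [[3, 3, 2, 3, 1]], "open": [[0, 1, 0, 0, 2]]})
# Query: the "fist" sample with the last channel perturbed.
prediction = classify(prototypes, [3, 3, 2, 3, 0])
```

Training here is a single pass of encoding and bundling with no iterative weight updates, and classification is a nearest-prototype search. Both consist of bit-wise operations that are independent across dimensions, which is the kind of parallelism an RTL design could exploit.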

Status: Available

- Looking for 1-2 Semester/Master students

- Contact: Abbas Rahimi (abbas@iis.ee.ethz.ch)

Prerequisites

- HDL coding

- VLSI I

Character

- 20% Theory

- 40% Architecture Design

- 40% Verification