Variable Bit Precision Logic for Deep Learning and Artificial Intelligence

Introduction

During the last decade, the field of Artificial Intelligence and Deep Learning has grown rapidly, mostly thanks to the results achieved by neural network architectures. These algorithms, inspired by the working principles of the brain, have achieved superhuman performance in many fields, redefining the state of the art for computer vision, text and speech recognition, and problem solving. Convolutional neural networks (CNNs) have become the dominant neural network architecture for many state-of-the-art visual processing tasks.

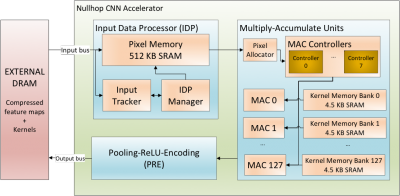

Even though Graphics Processing Units (GPUs) are most often used for training and deploying CNNs, their power consumption becomes a problem for real-time mobile applications. The ETH/UZH Institute of Neuroinformatics designed NullHop [Aimar2017], a CNN accelerator architecture that supports the implementation of state-of-the-art CNNs in low-power and low-latency application scenarios. This architecture exploits the sparsity of neuron activations in CNNs to accelerate the computation and reduce memory requirements. It currently uses 16-bit fixed-point arithmetic, in line with the precision commonly used in the deep learning field. To improve the performance of neural networks, many research groups are focusing on reducing the bit precision of networks to 8, 4 or even 1 bit, as targeted by [Andri2017]. As a consequence, the design of hardware architectures for accelerating these networks requires new, different ALUs that can support a wide range of precisions.

Project description

This project is a collaboration between the Institute of Neuroinformatics (INI) of ETH/UZH and the Integrated Systems Laboratory of ETH. Neural network computation requires many multiply-and-accumulate (MAC) operations. The project will focus on the design of a configurable fixed-point MAC unit able to operate at multiple bit precisions (16, 8, 4, 2 and 1 bits, signed and unsigned), with multiple destination registers for the accumulation operation. When working in low-precision mode, the block will compute multiple multiplications in parallel, ideally splitting the single 16-bit multiplier/adder into two/four/eight/sixteen low-precision ones [Conti2017].
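The lane-splitting behaviour described above can be illustrated with a small behavioural model. This is only a sketch under stated assumptions, not the intended hardware design: the function names `split_lanes` and `mac` are hypothetical, operands are assumed to be packed into 16-bit words with the least significant lane first, one accumulator per lane is assumed to stand in for the "multiple destination registers", and accumulators are unbounded Python integers rather than fixed-width registers.

```python
WORD_BITS = 16
VALID_BITS = (16, 8, 4, 2, 1)


def split_lanes(word, bits, signed):
    """Split a 16-bit word into 16 // bits lanes, least significant lane first.

    Assumption: signed lanes use two's complement, so a signed 1-bit lane
    takes the values 0 and -1 (not the -1/+1 encoding of binary networks).
    """
    mask = (1 << bits) - 1
    lanes = []
    for i in range(WORD_BITS // bits):
        value = (word >> (i * bits)) & mask
        if signed and value >= 1 << (bits - 1):
            value -= 1 << bits  # two's-complement sign extension
        lanes.append(value)
    return lanes


def mac(acc, a_word, b_word, bits, signed=True):
    """One MAC step: returns acc[i] + a_lane[i] * b_lane[i] for every lane."""
    if bits not in VALID_BITS:
        raise ValueError(f"unsupported precision: {bits}")
    if len(acc) != WORD_BITS // bits:
        raise ValueError("one accumulator per lane is expected")
    a_lanes = split_lanes(a_word, bits, signed)
    b_lanes = split_lanes(b_word, bits, signed)
    return [c + x * y for c, x, y in zip(acc, a_lanes, b_lanes)]


# 8-bit mode: two multiplications per 16-bit word pair.
print(mac([0, 0], 0x0203, 0xFF04, bits=8, signed=True))   # [12, -2]
print(mac([0, 0], 0x0203, 0xFF04, bits=8, signed=False))  # [12, 510]
```

A model of this kind can serve as a golden reference when checking an RTL implementation, since the same packed words can be fed to both and the per-lane accumulators compared.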

The student will first study the block implementation at RTL level using standard cells, aiming to achieve a clock frequency of 500 MHz in the GF28 process while also minimizing area and power consumption, keeping in mind that the resulting block will be instantiated many times in the final device (> 256).

In the second phase, the student will be free to evaluate a full custom implementation of the chip, creating a standard cell to be used during synthesis.

Key points of the project are:

- Variable precision multipliers and adders

- Variable input format

- Signed/Unsigned operations

- RTL and Synthesis

- Full custom design

As an extra, the student will be free to study the principles of neural networks and their implementation in hardware, and to come up with and develop any further ideas on the topic. We are also glad to accept any other project proposals from students about neural networks, deep learning and artificial intelligence, on both the algorithm and the hardware side.

Required Skills

To work on this project, you will need:

- to have worked in the past with at least one RTL language (SystemVerilog or Verilog or VHDL) - having followed the VLSI1 / VLSI2 courses is recommended

- to have prior knowledge of hardware design and computer architecture - having followed the Advanced System-on-Chip Design course is recommended

Other skills that you might find useful include:

- playful spirit: the project gives the opportunity to develop full-custom logic blocks using "exotic" designs (e.g. domino, pass transistor)

If you want to work on this project but think that you do not match some of the required skills, we can give you some preliminary exercises to help you fill the gap.

Status: Available

- Supervision: Alessandro Aimar (INI, [1]), Francesco Conti

Professor

Practical Details

Meetings & Presentations

The students and advisor(s) agree on weekly meetings to discuss all relevant decisions and decide on how to proceed. Of course, additional meetings can be organized to address urgent issues.

Around the middle of the project there is a design review, where senior members of the lab review your work (bring all the relevant information, such as preliminary specifications, block diagrams, synthesis reports, testing strategy, ...) to make sure everything is on track and decide whether further support is necessary. They also make the final decision on whether the chip is actually manufactured (no reason to worry if the project is on track) and whether more chip area, a different package, ... is provided. For more details, refer to [2].

At the end of the project, you have to present/defend your work during a 15 min. presentation and 5 min. of discussion as part of the IIS colloquium.

Literature

- [Aimar2017] A. Aimar et al., NullHop: A Flexible Convolutional Neural Network Accelerator Based on Sparse Representations of Feature Maps, [3]

- [Conti2017] F. Conti et al., An IoT Endpoint System-on-Chip for Secure and Energy-Efficient Near-Sensor Analytics, [4]

- [Andri2017] R. Andri et al., YodaNN: an Architecture for Ultra-Low Power Binary-Weight CNN Acceleration, [5]

Links

- The EDA wiki with lots of information on the ETHZ ASIC design flow (internal only) [6]

- The IIS/DZ coding guidelines [7]